t2sql-gguf

Activate the backend (with gguf-connector):

```
ggc e4
```

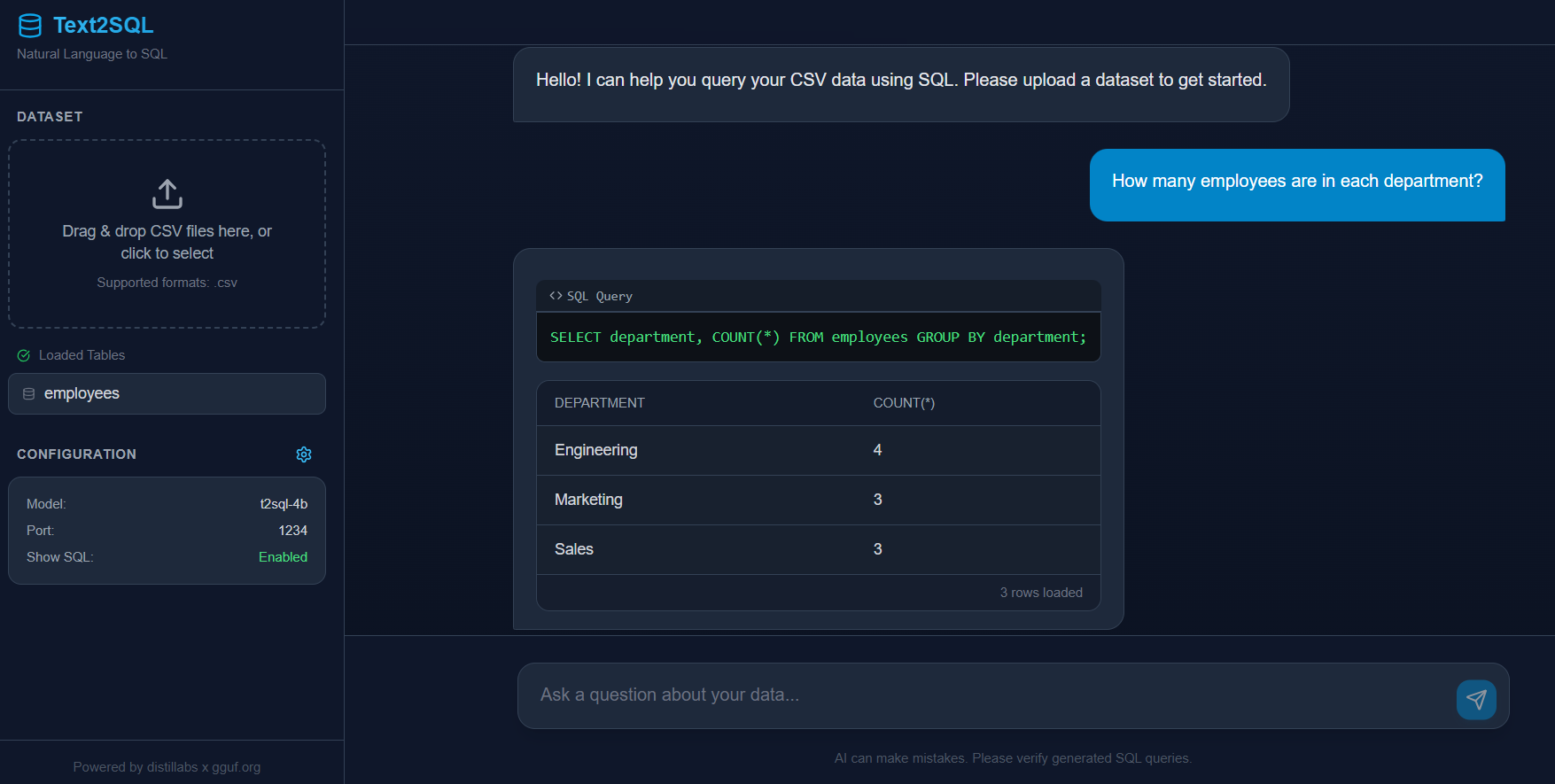

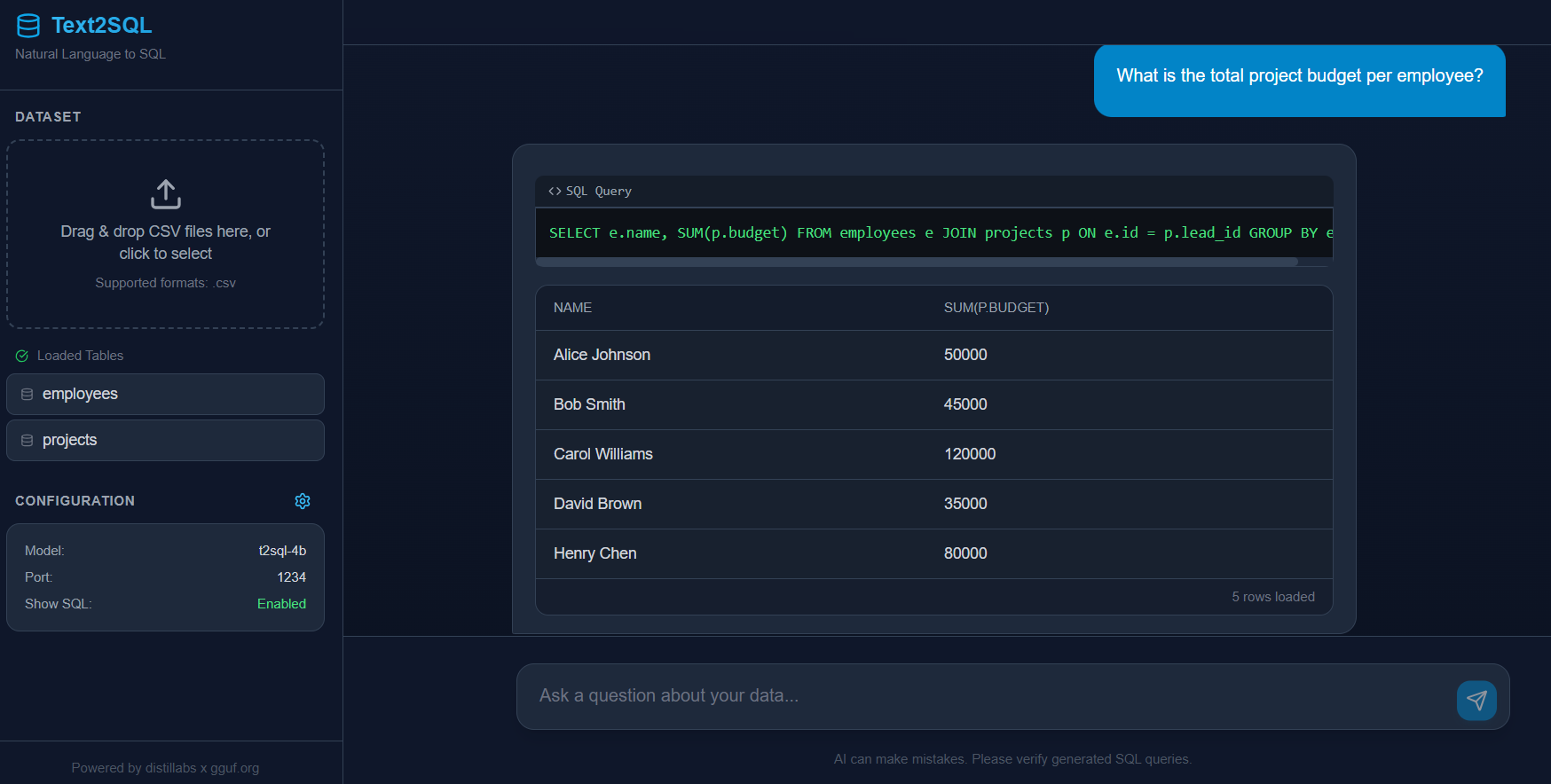

For the frontend, please refer to text2sql (including sample data).


```python
# !pip install llama-cpp-python
from llama_cpp import Llama

llm = Llama.from_pretrained(
    repo_id="gguf-org/t2sql-gguf",
    filename="",  # left blank in the source; the GGUF file to load from the repo
)
```
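Once an `llm` object has been loaded with llama-cpp-python, a text-to-SQL request could be sketched roughly as follows. This is only an illustration: the helper names `build_t2sql_messages` and `generate_sql`, the system prompt, and the schema/question layout are all assumptions, not a prompt format documented for this model. The only library call used is `create_chat_completion` from llama-cpp-python.

```python
from typing import Any


def build_t2sql_messages(schema: str, question: str) -> list[dict[str, str]]:
    """Build chat messages for a text-to-SQL request.

    The prompt wording here is hypothetical; adjust it to whatever
    format the model was actually trained on.
    """
    system = "You translate questions into SQL. Reply with a single SQL query only."
    user = f"Schema:\n{schema}\n\nQuestion: {question}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


def generate_sql(llm: Any, schema: str, question: str, max_tokens: int = 256) -> str:
    """Ask an already-loaded llama_cpp.Llama instance for a SQL query."""
    response = llm.create_chat_completion(
        messages=build_t2sql_messages(schema, question),
        max_tokens=max_tokens,
        temperature=0.0,  # greedy decoding, so repeated calls give stable output
    )
    # llama-cpp-python returns an OpenAI-style completion dictionary.
    return response["choices"][0]["message"]["content"].strip()
```

With a loaded model, a call would look like `generate_sql(llm, "CREATE TABLE users (id INT, name TEXT);", "How many users are there?")`. The returned string is whatever the model generates, so it is worth validating the query before running it against a real database.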