text stringlengths 256 16.4k |

|---|

What is the difference between logistic and logit regression? I understand that they are similar (or even the same thing) but could someone explain the difference(s) between these two? Is one about odds?

The logit is a link function / a transformation of a parameter. It is the logarithm of the odds. If we call the parameter $\pi$, it is defined as follows:

$$ {\rm logit}(\pi) = \log\bigg(\frac{\pi}{1-\pi}\bigg) $$ The logistic function is the inverse of the logit. If we have a value, $x$, the logistic is: $$ {\rm logistic}(x) = \frac{e^x}{1+e^x} $$ Thus (using matrix notation where $\boldsymbol X$ is an $N\times p$ matrix and $\boldsymbol\beta$ is a $p\times 1$ vector), logit regression is: $$ \log\bigg(\frac{\pi}{1-\pi}\bigg) = \boldsymbol{X\beta} $$ and logistic regression is: $$ \pi = \frac{e^\boldsymbol{X\beta}}{1+e^\boldsymbol{X\beta}} $$ For more information about these topics, it may help you to read my answer here: Difference between logit and probit models.

The odds of an event is the probability of the event divided by the probability of the event not occurring. Exponentiating the logit will give the odds. Likewise, you can get the odds by taking the output of the logistic and dividing it by 1 minus the logistic. That is:

$$ {\rm odds} = \exp({\rm logit}(\pi)) = \frac{{\rm logistic}(x)}{1-{\rm logistic}(x)} $$ For more on probabilities and odds, and how logistic regression is related to them, it may help you to read my answer here: Interpretation of simple predictions to odds ratios in logistic regression. |

Today is the first in our series of blogs that will focus on different problem-solving strategies in mathematics. The strategy that we’ll look at now is simple enough at its core: if you’re stuck on a problem that involves unknowns or variables, try substituting, or “plugging”, some numbers into those letters! What numbers you plug in, what you’re plugging into, and how this helps you find the solution varies from problem to problem.

This strategy is especially useful on standardized tests that involve multiple-choice questions, like the SAT. Substituting specific numbers into unknowns or variables can not only give you the ideas you need to eliminate a few wrong answers, but can often steer you to the right answer right away!

Some SAT questions seem to demand that you solve complicated equations, like this one:

Question: What values of \(x\) satisfy the following equation?

$$\sqrt{5x+24}=x+2$$

A. 5 and \(-\)4

B. \(-\)5

C. 4

D. 5

There is a procedure to solve this equation: square both sides, simplify until you get a quadratic equation, factor that quadratic, find the possible solutions, and eliminate extraneous solutions. But what if you’re running out of time, and need an answer,

fast? Well, given that there are answer choices here, do NOT solve the equation! Rather, just plug in your answer choices to see which ones satisfy that equation. Then you’ll have the solution at your fingertips, while avoiding unnecessary work.

Here, it’s helpful to remember that the square root function is

never negative. So, if you plug in \(-\)4 or \(-\)5 for x, you see that the square root is equal to a negative number, which can’t happen. So that immediately eliminates answer choices A and B. Now try x \(=\) 4: \(\sqrt{44}\) is definitely not equal to 6, so C is eliminated as the answer. That leaves D as the only possible option, but check it to be sure: plugging in x \(=\) 5 gives us \(\sqrt{49}=7\), correct! So the correct answer is D.

We found the answer not by tediously solving the equation step-by-step, but by taking advantage of the potential answer choices available to us!

Sometimes the multiple-choice answers may look fairly complicated. Then you won’t be able to plug the answer choices into anything, but you may be able to plug into the answer choices themselves! To see what we’re talking about, let’s try another problem.

Question: A population of bacteria doubles in size every 3 hours. If the initial population is 500, which of the following functions gives the population after \(t\) hours?

A. \(500\times 2^{3t}\)

B. \(500\times 2^{\frac{t}{3}}\)

C. \(500 + 2^{3t}\)

D. \(3\times 500^{\frac{t}{2}}\)

Well, if you know your exponential models, you’ll be able to figure this out. But suppose you blank out on the test? Then what? Plugging in comes to the rescue!

The initial population is 500; that means that when the experiment starts, at time

t \(=\) 0, we have 500 bacteria. So, if we plug in t \(=\) 0 into the correct answer above, we should get 500 back. Of course we don’t know what the correct answer is yet, so we try all of them! When plugging in t \(=\) 0 to choices A and B, we get 500, which means that A and B are possible answers. Answer choice C simplifies to 501, and D simplifies to 3, not what we’re looking for, so we can eliminate them immediately.

So which of A and B is correct? Keep using the given information. The bacteria double every 3 hours, so at time

t \(=\) 3, we should have 2 \(\times\) 500 \(=\) 1000 bacteria. Plug in t \(=\) 3 to answer choices A and B; we’re looking for the expression that simplifies to 1000. Choice A fails (check it… it’s way too big!), and only B works out. The answer is B!

The moral here is that when we happen to forget higher level math (how to create exponential functions that model a given physical situation), we might be able to succeed by remembering some more basic math (evaluating functions), and by using our answer choices to our advantage.

Not all SAT questions are multiple choice. But even open-ended questions can be solved by some judicious substitutions. Try this next one.

Question: The following equation is true for all values of \(x\). Find the value of \(a\).

$$\frac{3x^2+2x-5}{x-a}=3x+23+\frac{156}{x-a}$$

This one seems pretty tough. You might begin by multiplying both sides by \(x-a\) to clear those fractions, and then see what happens. Doing that isn’t all that terrible, but students tend to be put off by ugly expressions like this. Is there another way to do this that might be a little easier, or at least make us feel like we’re in more comfortable territory?

Well, reread the question: this is true for

all values of x. So, any value you plug in for x will give you a true expression. What’s your favorite value of x? Our favorite value is 0, because substituting 0 will often simplify things greatly! Let’s do just that:

$$\frac{3\times 0^2+2\times 0-5}{0-a}=3\times 0+23+\frac{156}{0-a}$$

$$\rightarrow \frac{5}{a}=23+\frac{156}{-a}$$Oh wow, now we can just solve for

a! Start by multiplying both sides by a.

$$5=23a-156$$

$$\rightarrow 23a=161$$

$$\rightarrow a=7$$There was still a little bit of work to do after we plugged in 0, but that was simple algebra compared to what we might have been facing otherwise.

Of course, this technique may not be helpful for every problem. But when you have a few variables or unknowns bugging you in a problem, try plugging in some simple numbers. You might be able to eliminate wrong answers, or reduce the problem to something more manageable, and friendlier!

Find this post interesting? Follow the blog using the link at the top of the page to get notified when new posts appear!

Want awesome tips and a mini-challenge, all designed to help you build vital problem-solving and critical thinking skills in your child? Click here to sign up for our monthly newsletter! |

We consider a smooth manifold $M$ of dimension $d$, a $C^{\infty}$ function $f:M\rightarrow \mathbb{R}$ and $p\in M$. Two charts in a neighborhood $Z$ of $p$: $\phi:U\subset \mathbb{R}^d\rightarrow Z,\phi(u)=p,\psi:V\subset \mathbb{R}^d\rightarrow Z,\psi(v)=p$ are s.t. the transition map $\tau=\phi^{-1}\circ \psi$ is a diffeomorphism. Note that $f$ is smooth iff $g=f\circ \phi$ or $g\circ\tau$ are smooth. Remark that $D(g\circ\tau)_v=Dg_u\circ D\tau_v$ and $Dg_u=0$ is equivalent to $D(g\circ \tau)_v=0$; in the previous case, one says that $p$ is a critical point of $f$.

Proposition 1. The signature of the Hessian of $f$ can be defined in $p\in M$ when $p$ is a critical point of $f$.

Proof. $D^2(g\circ\tau)_v(h,k)=D^2g_u(D\tau_v(h),D\tau_v(k))+Dg_u(D^2\tau_v(h,k))$. Since $Dg_u=0$, $D^2(g\circ\tau)_v(h,k)=D^2g_u(D\tau_v(h),D\tau_v(k))$. Let $K$ be the symmetric matrix associated to $D^2g_u$ and $P$ be the invertible matrix of the linear isomorphism $D\tau_v$; then the symmetric matrix associated to $D^2(g\circ\tau)_v$ is $P^TKP$. Clearly $K$ and $P^TKP$ have same signature and we are done.

Note that, in general, the Hessian of $f$ depends on the chosen chart!! In particular, its eigenvalues vary with the chosen chart.

According to Morse theory,

(*) We can choose a transition map $\tau$ s.t. $D\tau_v$ diagonalizes $K$, that is s.t. $P$ is orthogonal and $P^TKP=diag(\lambda_1\cdots,\lambda_q,\lambda_{q+1},\cdots,\lambda_d)$ where $\lambda_i<0$ for $i\leq q$ and otherwise, $\lambda_i\geq 0$.

Recall that $D\tau_v$ is an isomorphism between two representations of $TM_p$ and that $D^2(g\circ\tau)_v$ is a symmetric bilinear form defined on a representation of $TM_p$.

EDIT. I write the details of the second part. An element $h\in TM_p$ admits, as representative, a smooth curve $\gamma$ s.t. $\gamma(0)=p$; modulo the chart $\phi$, $h$ is identified to the unique vector $(\phi^{-1}\circ \gamma)'(0)\in\mathbb{R}^d$.

Proposition 2. The maximal dimension of the subspaces of the tangent space $TM_p$ of $M$ at $p$, on which $D^2g_v$ is negative definite, is $q$. This result does not depend on the chosen chart.

Proof. According to (*), the maximum is $\geq q$. Now, let $E$ be a subspace of $TM_p$ of dimension $r$ on which $D^2g_v$ is negative definite. There is a transition map $\tau$ associated to the decomposition $E\oplus E^{\perp}$; then $P^TKP$ is in the form $diag(X_r,Y_{n-r})$ where $X_r$ is symmetric $<0$. Note that $X_r$ has $r$ negative eigenvalues and, consequently, $r\leq q$. |

QML Tutorial¶

This tutorial is a general introduction to kernel-ridge regression with QML.

Theory¶

Regression model of some property, \(y\), for some system, \(\widetilde{\mathbf{X}}\) - this could correspond to e.g. the atomization energy of a molecule:

\(y\left(\widetilde{\mathbf{X}} \right) = \sum_i \alpha_i \ K\left( \widetilde{\mathbf{X}}, \mathbf{X}_i\right)\)

E.g. Using Gaussian kernel function with Frobenius norm:

\(K_{ij} = K\left( \mathbf{X}_i, \mathbf{X}_j\right) = \exp\left( -\frac{\| \mathbf{X}_i - \mathbf{X}_j\|_2^2}{2\sigma^2}\right)\)

Regression coefficients are obtained through kernel matrix inversion and multiplication with reference labels

\(\boldsymbol{\alpha} = (\mathbf{K} + \lambda \mathbf{I})^{-1} \mathbf{y}\) Tutorial exercises¶

git clone https://github.com/qmlcode/tutorial.git

Additionally, the repository contains Python3 scripts with the solutions to each exercise.

Exercise 1: Representations¶

In this exercise we use qml~to generate the Coulomb matrix and Bag of bonds (BoB) representations. [3]In QML data can be parsed via the

Compound class, which stores data and generates representations in Numpy’s ndarray format.If you run the code below, you will read in the file

qm7/0001.xyz (a methane molecule) and generate a coulomb matrix representation (sorted by row-norm) and a BoB representation.

import qml# Create the compound object mol from the file qm7/0001.xyz which happens to be methanemol = qml.Compound(xyz="qm7/0001.xyz")# Generate and print a coulomb matrix for compound with 5 atomsmol.generate_coulomb_matrix(size=5, sorting="row-norm")print(mol.representation)# Generate and print BoB bags for compound containing C and Hmol.generate_bob(asize={"C":2, "H":5})print(mol.representation)

The representations are simply stored as 1D-vectors.Note the keyword

size which is the largest number of atoms in a molecule occurring in test or training set.Additionally, the coulomb matrix can take a sorting scheme as keyword, and the BoB representations requires the specifications of how many atoms of a certain type to make room for in the representations.

Lastly, you can print the following properties which is read from the XYZ file:

# Print other properties stored in the objectprint(mol.coordinates)print(mol.atomtypes)print(mol.nuclear_charges)print(mol.name)print(mol.unit_cell)

Exercise 2: Kernels¶

In this exercise we generate a Gaussian kernel matrix, \(\mathbf{K}\), using the representations, \(\mathbf{X}\), which are generated similarly to the example in the previous exercise:

\(K_{ij} = \exp\left( -\frac{\| \mathbf{X}_i - \mathbf{X}_j\|_2^2}{2\sigma^2}\right)\)

QML supplies functions to generate the most basic kernels (E.g. Gaussian, Laplacian). In the exercise below, we calculate a Gaussian kernel for the QM7 dataset.In order to save time you can import the entire QM7 dataset as

Compound objects from the file

tutorial_data.py found in the tutorial GitHub repository.

# Import QM7, already parsed to QMLfrom tutorial_data import compoundsfrom qml.kernels import gaussian_kernel# For every compound generate a coulomb matrix or BoBfor mol in compounds: mol.generate_coulomb_matrix(size=23, sorting="row-norm") # mol.generate_bob(size=23, asize={"O":3, "C":7, "N":3, "H":16, "S":1})# Make a big 2D array with all the representationsX = np.array([mol.representation for mol in compounds])# Print all representationsprint(X)# Run on only a subset of the first 100 (for speed)X = X[:100]# Define the kernel widthsigma = 1000.0# K is also a Numpy arrayK = gaussian_kernel(X, X, sigma)# Print the kernelprint K

Exercise 3: Regression¶

With the kernel matrix and representations sorted out in the previous two exercise, we can now solve the \(\boldsymbol{\alpha}\) regression coefficients:

\(\boldsymbol{\alpha} = (\mathbf{K} + \lambda \mathbf{I})^{-1} \mathbf{y}\label{eq:inv}\)

One of the most efficient ways of solving this equation is using a Cholesky-decomposition.QML includes a function named

cho_solve() to do this via the math module

qml.math.In this step it is convenient to only use a subset of the full dataset as training data (see below).The following builds on the code from the previous step.To save time, you can import the PBE0/def2-TZVP atomization energies for the QM7 dataset from the file

tutorial_data.py.This has been sorted to match the ordering of the representations generated in the previous exercise.Extend your code from the previous step with the code below:

from qml.math import cho_solvefrom tutorial_data import energy_pbe0# Assign 1000 first molecules to the training setX_training = X[:1000]Y_training = energy_pbe0[:1000]sigma = 4000.0K = gaussian_kernel(X_training, X_training, sigma)print(K)# Add a small lambda to the diagonal of the kernel matrixK[np.diag_indices_from(K)] += 1e-8# Use the built-in Cholesky-decomposition to solvealpha = cho_solve(K, Y_training)print(alpha)

Exercise 4: Prediction¶

With the \(\boldsymbol{\alpha}\) regression coefficients from the previous step, we have (successfully) trained the machine, and we are now ready to do predictions for other compounds. This is done using the following equation:

\(y\left(\widetilde{\mathbf{X}} \right) = \sum_i \alpha_i \ K\left( \widetilde{\mathbf{X}}, \mathbf{X}_i\right)\)

In this step we further divide the dataset into a training and a test set. Try using the last 1000 entries as test set.

# Assign 1000 last molecules to the test setX_test = X[-1000:]Y_test = energy_pbe0[-1000:]# calculate a kernel matrix between test and training data, using the same sigmaKs = gaussian_kernel(X_test, X_training, sigma)# Make the predictionsY_predicted = np.dot(Ks, alpha)# Calculate mean-absolute-error (MAE):print np.mean(np.abs(Y_predicted - Y_test))

Exercise 5: Learning curves¶

Repeat the prediction from Exercise 2.4 with training set sizes of 1000, 2000, and 4000 molecules. Note the MAE for every training size. Plot a learning curve of the MAE versus the training set size. Generate a learning curve for the Gaussian and Laplacian kernels, as well using the coulomb matrix and bag-of-bonds representations. Which combination gives the best learning curve? Note you will have to adjust the kernel width (sigma) underway.

Exercise 6: Delta learning¶

A powerful technique in machine learning is the delta learning approach. Instead of predicting the PBE0/def2-TZVP atomization energies, we shall try to predict the difference between DFTB3 (a semi-empirical quantum method) and PBE0 atomization energies.Instead of importing the

energy_pbe0 data, you can import the

energy_delta and use this instead

from tutorial_data import energy_deltaY_training = energy_delta[:1000]Y_test = energy_delta[-1000:]

Finally re-draw one of the learning curves from the previous exercise, and note how the prediction improves. |

I'm currently studying Particle Physics and HEP and this acronym is omnipresent. I know it means

next-to-leading-order but, what is exactly the physical meaning of LO and NLO?

You say you know about Feynman diagrams, at least at tree level. So you might have seen loop diagrams, i.e. diagrams that contain a loop of internal lines (see e.g. https://en.wikipedia.org/wiki/One-loop_Feynman_diagram).

Now, the whole point of Feynman diagrams is to expand the phyisical quantity we are interested in as a power series, $$\sigma= a_0 + \alpha\cdot a_1 + \alpha^2 \cdot a_2 +\dotsm$$ For this to make sense, the expansion parameter $\alpha$, which usually is (related to) some coupling constant, needs to be small -- for example, in QED, $\alpha\approx 1/137$. Then, the higher-order terms, i.e. those with higher powers of $\alpha$, are suppressed, and the lower-order terms dominate (note that I'm glossing over quite a number of issues here to get the basic picture across).

In other words, the lowest-power of $\alpha$ with a nonzero term $a_i$ gives the most important contribution, called

leading order, and the other ones are higher-order. In particular, the next one is the next-to-leading order (NLO), contribution and so on (for example, this paper computes NNNNLO contributions: https://arxiv.org/abs/hep-ph/0610143).

This notation is used in other fields as well: Whenever you have a approximation scheme with a most important term and successively smaller corrections, it makes sense to speak of leading-order, NLO etc. |

Convergence of Limsup and Liminf Theorem

Let $\sequence {x_n}$ be a sequence in $\R$.

Let the limit superior of $\sequence {x_n}$ be $\overline l$.

Let the limit inferior of $\sequence {x_n}$ be $\underline l$.

Proof Sufficient Condition

First, suppose that $\overline l = \underline l = l$.

Let $\epsilon > 0$.

$\exists N_1: \forall n > N_1: x_n < l + \epsilon$

Similarly:

$\exists N_2: \forall n > N_2: x_n > l - \epsilon$

So take $N = \max \set {N_1, N_2}$.

If $n > N$, both the above inequalities hold at the same time.

So $l - \epsilon < x_n < l + \epsilon$ and so by Negative of Absolute Value:

$\size {x_n - l} < \epsilon$

Thus $x_n \to l$ as $n \to \infty$.

$\Box$

Necessary Condition

Then by Limit of Subsequence equals Limit of Real Sequence, all subsequences have a limit of $l$ and the result follows.

$\blacksquare$

Sources 1962: Bert Mendelson: Introduction to Topology... (previous) ... (next): $\S 2.5$: Limits: Exercise $5$ 1977: K.G. Binmore: Mathematical Analysis: A Straightforward Approach... (previous) ... (next): $\S 5$: Subsequences: Exercise $\S 5.15 \ (5)$ 2005: René L. Schilling: Measures, Integrals and Martingales... (previous) ... (next): $\S 8$ |

The Markov chain $(Xn; n\geq)$ has state-space $S = (0, 1, 2, . . .)$, with

$p_{i,0} = \frac{1}{4}$ and $p_{i,i+1} = \frac{3}{4}$ $\forall i \geq 0$, so that the transition matrix is

P =$\begin{pmatrix} \frac{1}{4} & \frac{3}{4} & 0 & 0 & ...\\\ \frac{1}{4} & 0 & \frac{3}{4} & 0 & ... \\\ \frac{1}{4} & 0 & 0 & \frac{3}{4} &... \\\ \vdots & \vdots&\vdots&\vdots& \ddots \end{pmatrix}$

Find the irreducible classes of intercommunicating states. For each class, state:

(a) whether it is

transient, positive recurrent or null recurrent(hint - think about the distribution of the return times - say to state 0 - in this case. From there, you can work out whether the states have a finite or infinite expected return time. Can you work out what sort of states you have here?)

(b) its

periodicity.

I have tried to approach the first part with a state space diagram and have found that only state 0 and 1 intercommunicate and that the rest of the states do not. But, it is possible to return to every state at some point (say we got to 3, we can then go to state 4, then state 0, 1, 2 and end up at 3 again. So would I put {0,1,2,3,4, ...} into one class?

I guess I am also not 100% sure about how to classify the states. |

Examples of solving linear discrete dynamical systems

The solution to a linear discrete dynamical system is an exponential because in each time step, we multiply by a fixed number. It is easy to see what number we multiply in each time step when the dynamical system is in function iteration form. When the dynamical system is given in difference form, we must first transform the dynamical system into function iteration form. These examples illustrate the process.

Example 1

A example of the simplest form is \begin{align*} z_{n+1} &= 0.5 z_n\\ z_0 &= 1024. \end{align*} The dynamical system is given function iteration form and everything is in terms of numbers. Solve the dynamical system and use it to compute $z_{10}$.

Solution: By solution, we mean a formula for $z_n$ just in terms of the initial condition and the time index $n$. In each time step, we multiply by 0.5. To go from time step zero to time step $n$, we must multiply by 0.5 a total of $t$ times. The solution is therefore

\begin{align*}

z_n = (0.5)^n z_0 = (0.5)^n1024

\end{align*}

Using the formula, it is simple to calculate $z_{10}$. It is $z_{10} = (0.5)^{10}1024 =1.$ Example 2

We can make the example slightly more complicated by using a parameter, let's call it $R$, as the number we must multiply by each time step. We'll also use $t$ rather than $n$ for the time step and let the initial condition be another parameter, let's use $d$. Choosing $p$ for the state variable, the dynamical system is \begin{align*} p_{t+1} &= Rp_t\\ p_0 &= d. \end{align*} Solve the dynamical system.

Solution: The system really isn't much harder than the previous. Our solution must be a formula for $p_t$ just in terms of the initial condition and the time index $t$. The solution will also contain the parameters $R$ and $d$ rather than just numbers like the previous example. The main point for the solution is that it can contain the value of the state variable only at the initial time point $t=0$.

Starting with $p_0=d$ at $t=0$, to get $p_t$, we must multiply by $R$ a total of $t$ times. The solution is $$p_t = R^t d.$$

Example 3

Let's mix things up a little bit by writing the dynamical system in difference form. Using $z_n$ as the state variable and keeping every else in terms of numbers, we'll examine the linear discrete dynamical system \begin{align*} z_{n+1} - z_n &= -0.5 z_n\\ z_0 &= 1024. \end{align*} Solve the dynamical system and use it to compute $z_{10}$.

Solution:In this example, the change in $z$ at each time step is half of the value of $z$, but with a negative sign. We subtract off half of $z$ at each time step, but it isn't clear how to write a formula that gives the result of subtracting off half $z$ for a total of $n$ times in a row. The reason the answer isn't so obvious is because the dynamical system is written in difference form, with the change is $z$ on the left side of the equation. If we rewrite the dynamical system in function iteration form by solving the evolution rule for $z_{n+1}$, then it will be clearer how to proceed.

To convert the evolution rule $z_{n+1} - z_n = -0.5 z_n$ to function iteration form, we solve for $z_{n+1}$ by adding $z_{n}$ to both sides of the equation. \begin{align*} z_{n+1} - z_n +z_n &= -0.5 z_n + z_n\\ z_{n+1} &= 0.5 z_n. \end{align*} Now $z_{n+1}$ is written as a function of $z_n$, i.e., $z_{n+1}=f(z_n)$ for the function $f(z)=0.5 z_n$. We must apply the function “multiply by 0.5” at each time step.

Combining the evolution rule with the initial condition, the dynamical system in function iteration form is \begin{align*} z_{n+1} &= 0.5 z_n\\ z_0 &= 1024. \end{align*} The dynamical system is identical to the one from the first example. The solution is that we must apply the function “multiply by 0.5” to $z_0$ a total of $n$ times to reach $z_n$: \begin{align*} z_n = (0.5)^n z_0 = (0.5)^n1024 \end{align*} After 10 time steps, $z_{10} = (0.5)^{10}1024 =1.$

Example 4

Let's try an example in difference form but with parameters. \begin{align*} p_{t+1} - p_t &= rp_t\\ p_0 &= d. \end{align*} Now, starting with the initial condition $d$, we add $rp_t$ at each time step, where $r$ and $d$ are parameters. Solve the dynamical system.

Solution: The system is given in difference form. To solve the dynamical system, we must rewrite it in function iteration form. We add $p_{t}$ to both sides of the evolution rule.

\begin{align*}

p_{t+1} - p_t + p_t &= rp_t + p_t\\

p_{t+1} &= (r+1)p_t.

\end{align*}

Combining this new form of the evolution rule with the initial condition, we can write the dynamical system in function iteration form as

\begin{align*}

p_{t+1} &= (r+1)p_t\\

p_0 &= d.

\end{align*}

At each time step we apply the function $p_{t+1} = f(p_t)$, where $f(p)=(r+1)p$. In other words, we apply the function “multiply by $r+1$.” To go from $p_0$ to $p_t$, we must apply this function $t$ times, or multiply by $r+1$ for a total of $t$ times. This is the same thing as multiplying by $(r+1)^t$. The solution is

$$p_t = (r+1)^t p_0.$$

Using the the initial condition, $p_0=d$, we could also write the solution as

$$p_t = (r+1)^t d.$$

This example is only slightly different from example 2. In fact, if we wanted to make it look exactly like example 2, we could define a new parameter $R$ by setting $R=r+1$. If we were to replace $r+1$ by the symbol $R$, then the dynamical system would be \begin{align*} p_{t+1} &= Rp_t\\ p_0 &= d. \end{align*} with solution $$p_t = R^t d.$$ That looks a little simpler. But in either case, the solution is pretty simple. We just multiply the initial condition $d$ by the number $r+1$, which we could also define as $R$, a total of $t$ times to get to $p_t$.

Example 5

Let's continue the moose example of the discrete dynamical system introduction. In that example, a moose population grew by 8% each year, starting with an initial population size of 1000 moose. If we let the state variable $m_t$ be the number of moose in a population in year $t$, then we can write the dynamical system as \begin{align*} m_{t+1}-m_t &= 0.08 m_t\\ m_0 &= 1000. \end{align*} Solve this dynamical system. Use the solution to calculate the moose population size every ten years up to year 50.

Solution: The dynamical system is written in difference form, as we derived the model thinking about the change in the moose population size. To rewrite it in function iteration form, we add $m_t$ to both sides to the evolution rule, obtaining

\begin{align*}

m_{t+1} &= 1.08 m_t\\

m_0 &= 1000.

\end{align*}

Since we start with a population size of 1000, and in each time step, we multiply the 1.08, the solution to the dynamical system is

$$m_t = 1000 \cdot (1.08)^t.$$

From this solution, we calculate the population size every 10 years.

\begin{align*}

m_{10} &= 1000 (1.08)^{10} \approx 2158.92\\

m_{20} &= 1000 (1.08)^{20} \approx 4660.96\\

m_{30} &= 1000 (1.08)^{30} \approx 10062.66\\

m_{40} &= 1000 (1.08)^{40} \approx 21724.52\\

m_{50} &= 1000 (1.08)^{50} \approx 46901.61

\end{align*} |

Because this is a diatomic molecule, there are no group orbitals. Put another way, the group orbitals

are the molecular orbitals. Knowing the nitrogen atomic orbitals (AOs) and their irreducible representation (irrep) labels is enough.

Since we'll work in the $D_{\mathrm{2h}}$ point group, we need its character table:

$$\begin{array}{c|cccccccc|cc} \hlineD_\mathrm{2h} & E & C_2(z) & C_2(y) & C_2(x) & i & \sigma(xy) & \sigma(xz) & \sigma(yz) & & \\ \hline\mathrm{A_g} & 1 & 1 & 1 & 1 & 1 & 1 & 1 & 1 & & x^2,y^2,z^2 \\\mathrm{B_{1g}} & 1 & 1 & -1 & -1 & 1 & 1 & -1 & -1 & R_z & xy \\\mathrm{B_{2g}} & 1 & -1 & 1 & -1 & 1 & -1 & 1 & -1 & R_y & xz \\\mathrm{B_{3g}} & 1 & -1 & -1 & 1 & 1 & -1 & -1 & 1 & R_x & yz \\\mathrm{A_u} & 1 & 1 & 1 & 1 & -1 & -1 & -1 & -1 & & \\\mathrm{B_{1u}} & 1 & 1 & -1 & -1 & -1 & -1 & 1 & 1 & z & \\\mathrm{B_{2u}} & 1 & -1 & 1 & -1 & -1 & 1 & -1 & 1 & y & \\\mathrm{B_{3u}} & 1 & -1 & -1 & 1 & -1 & 1 & 1 & -1 & x & \\ \hline\end{array}$$

Using the nitrogen atom's electron configuration $\ce{1s^{2} 2s^{2} 2p^{3}}$ as our minimal basis, no d-orbitals will be present, so we can assign irreps to each AO right away, in part because we assume the principal rotation axis (the one of highest order) is aligned along the z-axis:

$$\begin{array}{cccc}\hline\mathrm{s} & \mathrm{p}_x & \mathrm{p}_y & \mathrm{p}_z \\\hline\mathrm{A_{g}} & \mathrm{B_{3u}} & \mathrm{B_{2u}} & \mathrm{B_{1u}} \\\hline\end{array}$$

Then, proceed by starting to form the standard MO diagram for a diatomic, but add the irrep labels to each AO:

Note that I haven't spaced the energy levels properly; the $\ce{1s}$ should be much lower than it is relative to the $\ce{2s}$. More on this later. Regardless, because this is a homodiatomic, all AOs will mix at each energy level, even the core $\ce{1s}$ AOs. Then, attempt to form the MOs:

This is probably wrong, but it's a starting point. Again, energy levels will be discussed later. Finally, add symmetry labels to each MO, remembering that

MO symmetry is derived from AO symmetry, numbering is consecutive within each irrep, not with respect to the set of all MOs, and lowercase is used to signify that these are MOs; uppercase is for the irreps themselves.

This is our final MO diagram for $\ce{N2}$. To form the diagram for $\ce{N2^+}$, remove an electron from $\mathrm{4a_g}$. This ignores orbital relaxation effects, but for the purposes of working this out on paper, it should be fine.

Now for the matter of relative and absolute energy levels. It is probably possible to get the correct relative ordering of the MO energy levels. Here, I assume that since AO mixes with an identical partner on the other atom, the splitting for $\ce{1s}$ would be the same as $\ce{2s},~\ce{2p_z}$, etc. Since I drew the $\ce{1s}$ too high, the $\mathrm{3a_g}$ is almost certainly too high, and perhaps should even go below what is labeled as $\mathrm{2a_g}$. The way to confirm this, and the only way to get absolute energy levels, is to perform a quantum chemical calculation. Since we've used a minimal basis for the drawing, we'll stick a minimal basis in the calculation. Here is a Psi4 input file:

molecule {

N 0.0 0.0 0.0

N 0.0 0.0 1.0975

}

set {

basis sto-3g

scf_type direct

df_scf_guess false

cubeprop_orbitals [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]

}

e, wfn = energy('hf', return_wfn=True)

cubeprop(wfn)

and from its output:

Orbital Energies (a.u.)

-----------------------

Doubly Occupied:

1Ag -15.518067 1B1u -15.516124 2Ag -1.442840

2B1u -0.722491 1B2u -0.573123 1B3u -0.573123

3Ag -0.539495

Virtual:

1B2g 0.281319 1B3g 0.281319 3B1u 1.123476

It looks like I might have messed up the diagram, because the ordering isn't what's expected (why isn't $\mathrm{B_{1u}}$ degenerate with the other two p-orbitals?), and the virtual degenerate p-orbitals have gerade symmetry. Time to plot!

In my haste, I forgot an important point. When you make the MOs from AOs, you're making two linear combinations:

\begin{align}\psi_{s} &= \frac{1}{\sqrt{2}} (\chi_{l} + \chi_{r}) \\\psi_{a} &= \frac{1}{\sqrt{2}} (\chi_{l} - \chi_{r}),\end{align}

which means that an antisymmetric combination of two s orbitals will look like a $\mathrm{p}_z$ orbital. This doesn't fully explain everything, but is a good starting point. The lesson up to now is that while drawing the diagrams by hand is a useful exercise, it is not enough to do only that when the goal is to perform a correlated

ab initio electronic structure calculation. |

Quantitative Modeling for Algorithmic Traders – Primer Quantitative Modeling techniques enable traders to mathematically identify, what makes data “tick” – no pun intended 🙂 .

They rely heavily on the following core attributes of any sample data under study:

Expectation– The mean or average value of the sample Variance– The observed spread of the sample Standard Deviation– The observed deviation from the sample’s mean Covariance– The linear association of two data samples Correlation– Solves the dimensionality problem in Covariance Why a dedicated primer on Quantitative Modeling?

Understanding how to use the five core attributes listed above in practice, will enable you to:

Construct diversified DARWIN portfolios using Darwinex’ proprietary Analytical Toolkit. Conduct mean-variance analysisfor validating your DARWIN portfolio’s composition. Build a solid foundation for implementing more sophisticated quantitative modeling techniques. Potentially improve the robustnessof trading strategies deployed across multiple assets.

Hence, a post dedicated to defining these core attributes, with practical examples in R (statistical computing language) should hopefully serve as good reference material to accompany existing and future posts.

Why R? It facilitates the analysis of large price datasets in short periods of time. Calculations that would otherwise require multiple lines of code in other languages, can be done much faster as R has a mature base of libraries for many quantitative finance applications. It’s free to download here.

* Sample data ( EUR/USD and GBP/USD End-of-Day Adjusted Close Price) used in this post was obtained from Yahoo, where it is freely available to the public.

Before progressing any further, we need to download EUR/USD and GBP/USD sample data from Yahoo Finance (time period: January 01 to March 31, 2017)

In R, this can be achieved with the following code:

library(quantmod)

getSymbols("EUR=X",src="yahoo",from="2017-01-01", to="2017-03-31")

getSymbols("GBP=X",src="yahoo",from="2017-01-01", to="2017-03-31")

Note: “EUR=X” and “GBP=X” provided by Yahoo are in terms of US Dollars, i.e. the data represents USD/EUR and USD/GBP respectively. Hence, we will need to convert base currencies first.

To achieve this, we will first extract the

Adjusted Close Price from each dataset, convert base currency and merge both into a new data frame for use later:

eurAdj = unclass(`EUR=X`$`EUR=X.Adjusted`)

# Convert to EUR/USD

eurAdj = 1/eurAdj

gbpAdj <- unclass(`GBP=X`$`GBP=X.Adjusted`)

# Convert to GBP/USD

gbpAdj <- 1/gbpAdj

# Extract EUR dates for plotting later.

eurDates = index(`EUR=X`)

# Create merged data frame.

eurgbp_merged <- data.frame(eurAdj,gbpAdj)

Finally, we merge the prices and dates to form one single dataframe, for use in the remainder of this post:

eurgbp_merged = data.frame(eurDates, eurgbp_merged)

colnames(eurgbp_merged) = c("Dates", "EURUSD", "GBPUSD")

The mean is its average value. μ of a price series μof a price series

It is calculated by adding all elements of the series, then dividing this sum by the total number of elements in the series.

Mathematically, the mean

μ of a price series P, where elements p ∈ P, with n number of elements in P, is expressed as: \(μ = E(p) = \frac{1}{n} ∑ (p_1 + p_2 + p_3 + … + p_n)\)

In R, the

mean of a sample can be calculated using the mean() function.

For example, to calculate the mean price observed in our sample of EUR/USD data, ranging from January 01 to March 31, 2017, we execute the following code to arrive at mean 1.065407:

mean(eurgbp_merged$EURUSD)

[1] 1.065407

Using the

plotly library in R, here’s the mean overlayed graphically on this EUR/USD sample:

library(plotly)

plot_ly(name="EUR/USD Price", x = eurgbp_merged$Dates, y = as.numeric(eurgbp_merged$EURUSD), type="scatter", mode="lines") %>%

add_trace(name="EUR/USD Mean", y=(as.numeric(mean(eurgbp_merged$EURUSD))), mode="lines")

The variance of a price series is simply the mean or expectation, of the square of (how much price deviates from the mean). σ² σ²

It characterises the range of movement around the mean, or “spread” of the price series.

Mathematically, the

variance σ² of a price series P, with elements p ∈ P, and mean μ, is expressed as: \(σ²(p) = E[(p – μ)²]\) Standard Deviation is simply the square root of variance, expressed as σ: \(σ = \sqrt{σ²(p)} = \sqrt{E[(p – μ)²]}\)

In R, the

standard deviation of a sample can be calculated using the sd() function.

For example, to calculate the standard deviation observed in our sample of EUR/USD data, ranging from January 01 to March 31, 2017, we execute the following code to arrive at s.d. 0.00996836:

sd(eurgbp_merged$EURUSD)

[1] 0.00996836

Using the

plotly library in R again, we can overlay a single (or more) positive and negative standard deviation from the mean, as follows:

plot_ly(name="EUR/USD Price", x = eurgbp_merged$Dates, y = as.numeric(eurgbp_merged$EURUSD), type="scatter", mode="lines") %>%

add_trace(name="+1 S.D.", y=(as.numeric(mean(eurgbp_merged$EURUSD))+sd(eurgbp_merged$EURUSD)), mode="lines", line=list(dash="dot")) %>%

add_trace(name="-1 S.D.", y=(as.numeric(mean(eurgbp_merged$EURUSD))-sd(eurgbp_merged$EURUSD)), mode="lines", line=list(dash="dot")) %>%

add_trace(name="EUR/USD Mean", y=(as.numeric(mean(eurgbp_merged$EURUSD))), mode="lines")

The sample covariance of two price series, in this case EUR/USD and GBP/USD, each with its respective sample mean, describes their linear association, i.e. how they move together in time.

Let’s denote EUR/USD by variable ‘

e’ and GBP/USD by variable ‘ g‘.

These price series will then have respective sample means of \(\overline{e}\) and \(\overline{g}\) respectively.

Mathematically, their

sample covariance, Cov(e, g), where both have n number of data points \((e_i, g_i)\), can be expressed as: \(Cov(e,g) = \frac{1}{n-1}\sum_{i=1}^{n}(e_i – \overline{e})(g_i – \overline{g})\)

In R,

sample covariance can be calculated easily using the cov() function.

Before we calculate covariance, let’s first use the

plotly library to draw a scatter plot of EUR/USD and GBP/USD.

To visualize linear association, we will also perform a

linear regression on the two price series, followed by drawing this as a line of best fit on the scatter plot.

This can be achieved in R using the following code:

# Perform linear regression on EUR/USD and GBP/USD

fit <- lm(EURUSD ~ GBPUSD, data=eurgbp_merged)

# Draw scatter plot with line of best fit

plot_ly(name="Scatter Plot", data=eurgbp_merged, y=~EURUSD, x=~GBPUSD, type="scatter", mode="markers") %>%

add_trace(name="Linear Regression", data=eurgbp_merged, x=~GBPUSD, y=fitted(fit), mode="lines")

Based on this plot, EUR/USD and GBP/USD have a positive linear association.

To

calculate the sample covariance of EUR/USD and GBP/USD between January 01 and March 31, 2017, we execute the following code to arrive at covariance 7.629787e-05:

cov(eurgbp_merged$EURUSD, eurgbp_merged$GBPUSD)

[1] 7.629787e-05

Problem: Being dimensional in nature, calculating just Covariance makes it difficult to compare price series with significantly different variances. Solution: Calculate Correlation, which is Covariance normalized by the standard deviations of each price series, hence making it dimensionless and a more interpretable ratio of linear association between two price series.

Mathematically, Correlation ρ(e,g) of EUR/USD and GBP/USD, where \(σ_e\) and \(σ_g\) are their respective standard deviations, can be expressed as:

\(ρ(e,g) = \frac{Cov(e,g)}{σ_e σ_g} = \frac{\frac{1}{n-1}\sum_{i=1}^{n}(e_i – \overline{e})(g_i – \overline{g})}{σ_e σ_g}\) Correlation = +1 indicates EXACT positive association. Correlation = -1 indicates EXACT negative association. Correlation = 0 indicates NO linear association.

In R,

correlation can be calculated easily using the cor() function.

For example, to calculate the correlation between EUR/USD and GBP/USD, from January 01 to March 31, 2017, we execute the following code to arrive at 0.5169411:

cor(eurgbp_merged$EURUSD, eurgbp_merged$GBPUSD)

[1] 0.5169411

0.5169411 implies reasonable positive correlation between EUR/USD and GBP/USD, which is what we visualized earlier with our scatter plot and line of best fit.

In future blog posts, we will examine how to construct diversified DARWIN Portfolios using the information above in practice.

Trade safe,

The Darwinex Team

—

Additional Resource: Learn more about DARWIN Portfolio Risk (VIDEO) * please activate CC mode to view subtitles. Do you have what it takes? – Join the Darwinex Trader Movement! |

I'm currently reading some papers about Markov chain lumping and I'm failing to see the difference between a Markov chain and a plain directed weighted graph.

For example in the article Optimal state-space lumping in Markov chains they provide the following definition of a CTMC (continuous time Markov chain):

We consider a finite CTMC $(\mathcal{S}, Q)$ with state space $\mathcal{S} = \{x_1, x_2, \ldots, x_n\}$ by a transition rate matrix $Q: \mathcal{S} \times \mathcal{S} \to \mathbb{R}^+$.

They don't mention the Markov property at all, and, in fact, if the weight on the edges represents a probability I believe the Markov property trivially holds since the probability depends only on the current state of the chain and not the path that lead to it.

In an other article On Relational Properties of Lumpability Markov chains are defined similarly:

A Markov chain $M$ will be represented as a triplet $(S, P, \pi)$ where $S$ is the finite set of states of $M$, $P$ the transition probability matrix indicating the probability of getting from one state to another, and $\pi$ is the initial probability distribution representing the likelyhood for the system to start in a certain state.

Again, no mention of past or future or independence.

There's a third paper Simple O(m logn) Time Markov Chain Lumping where they not only never state that the weights on the edges are probabilities, but they even say:

In many applications, the values $W(s, s')$ are non-negative. We do not make this assumption, however, because there are also applications where $W(s, s)$ is deliberately chosen as $-W(s, S \setminus \{s\})$, making it usually negative.

Moreover, it's stated that lumping should be a way to reduce the number of states while maintaining the Markov property (by aggregating "equivalent" state into a bigger state). Yet, to me, it looks like it's simply summing probabilities and it shouldn't even guarantee that the resulting peobabilities of the transitions to/from the aggregated states are in the range $[0,1]$. What does the lumping actually preserve then?

So, there are two possibilities that I see:

I didn't understand what a Markov chain is, or The use of the term Markov chain in those papers is bogus

Could someone clarify the situation?

It really looks like there are different communities using that term and they mean widely different things. From these 3 articles that I'm considering it looks like the Markov property is either trivial or useless, while looking at a different kind of papers it looks fundamental. |

Countable models of PA fall into two categories: the standard one $(\omega, S)$ and the nonstandard ones (all the rest). The only way I've seen to construct a nonstandard model is through taking an ultraproduct or, equivalently, using the compactness theorem. My question is wether or not these are all the models there are? There are continuum many ultrafilters and continuum many nonstandard, countable models, but I don't know if there's a surjective correspondence.

An ultrapower will never yield a countable nonstandard model of PA --- either you will recover the standard model or the result will be uncountable.

As far as the construction of a model is concerned, due to Tennenbaum's theorem (see http://en.wikipedia.org/wiki/Tennenbaum's_theorem) you will never see a recursive nonstandard model of PA. Hence, in some sense, you will never construct a countable model of PA other than the standard model.

On the other hand, if you consider the Henkin construction to be constructive enough for you, then by running his construction relative to the theory in ${\mathcal L}(+,\times,0,1,c,<)$ consisting of PA together with all the assertions $c > n$ for each $n \in {\mathbb N}$, then you would obtain a nonstandard model of PA.

It could be mentioned perhaps that Skolem's non-standard model of arithmetic (1933-1934) is countable and is a kind of a "definable" version of the ultraproduct construction. Namely, Skolem only uses definable sequences in his construction. The advantage of his model is that it is constructed without using the axiom of choice. |

According to my revision guide baryon and mesons always interact via the strong interaction.

Does this hold for baryon-baryon interactions? meson-meson?

Thanks

Physics Stack Exchange is a question and answer site for active researchers, academics and students of physics. It only takes a minute to sign up.Sign up to join this community

Quarks, the constituents of hadrons/mesons, interact via the strong, weak and electromagnetic force. So hadrons/mesons do interact via all this forces, too. Even if the total net-carge is zero. Take for instance the neutron, which has zero electric charge. Still it has a magnetic moment which gives rise to electromagnetic interactions. It can also decay via a weak process, which is commonly known as beta-radiation.

Charged hadrons, and neutral hadrons with nonzero magnetic moment, interact electromagnetically. A spinless, neutral hadron would not couple to the electromagnetic field at tree level, but the most obvious example of such a particle is the $\pi^0$, which

decays electromagnetically to two photons.

All particles with flavor participate in the weak interaction. You mostly hear about this in terms of decays, because for ordinary interaction energies the weak interaction is too feeble to contribute much to the dynamics. You can consider the weak interaction between strongly- or electromagnetically-interacting particles as a Yukawa-type force, $$ V \propto \frac{e^{-r/r_0}}{r}, $$ where the length scale is set by the mass of the weak boson, $r_0 \approx (\hbar c) / (m_Wc^2)$.

However, the weak interaction has a different set of symmetries than the strong and electromagnetic forces. Specifically, the weak interaction is broken under parity transformations, while the strong and E&M transitions are not. You can therefore peer down into the short-distance physics of low-energy interactions by looking for parity-violating observables. The most common method is to look for an asymmetry in a scalar quantity, like reaction rate, that depends on the angle between a spin and a momentum, $\vec\sigma\cdot\vec p$.

The purely hardronic weak interaction (by which I mean, without any leptonic decays involved) is a hard thing to suss out theoretically, because the strong force is both (a) strong, and (b) complicated. In many-body systems, you may have opposite-parity excited states which happen to be nearly degenerate in energy and are mixed by the weak interaction. The largest known enhancement of this type, to my knowledge, occurs in some of the excited states probed by neutron capture on lanthanum: there is a correlation between the incoming neutron's spin $\vec\sigma_\text{n}$ and the outgoing photon's direction $\vec k_\gamma$ that turns out to be a 10% asymmetry. But lanthanum is an enormously complicated nucleus. In neutron capture on hydrogen the same asymmetry is about ten parts per billion.

So, while hadrons

always interact via the strong interaction, theycertainly do not only interact via the strong interaction. |

In the above example, how is it that $f^{-1}((1,3)) = (2,3]$ ? Here is my understanding, kindly correct the misconceptions. The inverse for $(2,4]$ is not defined. The inverse is as below. $$f^{-1}(y)=\begin{cases} y+1 & \text { if } y \le 2\\ 2y-5 & \text{ if } y \gt 4\\ \end{cases}$$ So, if I have to find out for example, $f^{-1}(2\frac{1}{2})$, how do I do it? When does $f^{-1}(y)$ give me $3$ (to justify the $3$ in $(2,3]$ ) ?

The inverse image $f^{-1}(S)$ refers to the set $$\{x \in \Bbb{R} : f(x) \in S\}$$ This would mean that $$f^{-1}(\{2\frac{1}{2}\}) = \emptyset$$ We also have, $$f^{-1}(1, 3) = \{x \in S : 1 < f(x) < 3\},$$ which is true precisely for $2 < x \le 3$. There's no requirement that there be some $x$ such that $f(x) = 2.5$; just so long as it's less than $3$.

If $x>3$ we have that $f(x) = \frac{1}{2}(x+5) > \frac{1}{2} \cdot 8 = 4$ and so $f(x) \notin (1,3)$.

If $2< x \le 3$ we have that $f(x)=x-1 \in (1,2] \subseteq (1,3)$ and

If $x \le 2$, $f(x)=x-1 \le 1$,so $f(x) \notin (1,3)$

Hence $f^{-1}((1,3) = \{x: f(x) \in (1,3) \} = (2,3]$, as we covered all options for $x$.

Inverse image is not "image under a (non-existent) inverse function". For example: if $f: \mathbb{R} \to \mathbb{R}$ is the function that is constant with value $2$, then $f^{-1}(\{2\}) = \mathbb{R}$ and $f^{-1}(\{1\}) = \emptyset$. We are talking about inverse images of sets, not of points. |

It looks like you're new here. If you want to get involved, click one of these buttons!

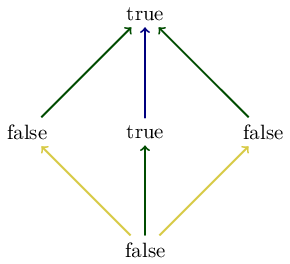

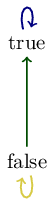

Show that the map \(\Phi\) from the Introduction, which was roughly given by ‘Is • connected to ∗?’ is a monotone map from the preorder shown in Eq. (1.2) to \( \mathbb{B}.\)

If we apply the map "is \(\bullet\) connected to \(\star\)" to the diagram on the left we obtain the following diagram:

All the paths above are valid since they exist in the Boolean poset (as indicated by the colors in the diagram below); hence the map \(\phi\) is a monotone map.

If we apply the map "is \\(\bullet\\) connected to \\(\star\\)" to the diagram on the left we obtain the following diagram:

All the paths above are valid since they exist in the Boolean poset (as indicated by the colors in the diagram below); hence the map \\(\phi\\) is a monotone map.

Since \(\mathrm{false} \le a\) for all \(a \in \mathbb{B}\) and \(\mathrm{true} \le \mathrm{true}\), it is sufficient to check that if \(a \le a'\) and \(f(a) = \mathrm{true}\), then \(f(a') = \mathrm{true}\). Because \(a\) is finer than \(a'\), every part in \(a\) is a subset of a part in \(b\). Therefore, since \(\bullet\) and \(\ast\) are in the same part in \(a\), they will also be in the same part in \(a'\). Hence, \(f(a') = \mathrm{true}\).

Since \\(\mathrm{false} \le a\\) for all \\(a \in \mathbb{B}\\) and \\(\mathrm{true} \le \mathrm{true}\\), it is sufficient to check that if \\(a \le a'\\) and \\(f(a) = \mathrm{true}\\), then \\(f(a') = \mathrm{true}\\). Because \\(a\\) is finer than \\(a'\\), every part in \\(a\\) is a subset of a part in \\(b\\). Therefore, since \\(\bullet\\) and \\(\ast\\) are in the same part in \\(a\\), they will also be in the same part in \\(a'\\). Hence, \\(f(a') = \mathrm{true}\\).

We want to show that x≤y implies Φ(x)≤ Φ(y). If x≤y, that means that if • and ∗ are connected in x then they are connected in y. So • and ∗ are either connected in both x and y, disconnected in x and y, or disconnected in x and connected in y. Then (Φ(x), Φ(y)) is either (true, true), (false, false), or (false, true). In all cases Φ(x)≤Φ(y). |

It looks like you're new here. If you want to get involved, click one of these buttons!

In this chapter we learned about left and right adjoints, and about joins and meets. At first they seemed like two rather different pairs of concepts. But then we learned some deep relationships between them. Briefly:

Left adjoints preserve joins, and monotone functions that preserve enough joins are left adjoints.

Right adjoints preserve meets, and monotone functions that preserve enough meets are right adjoints.

Today we'll conclude our discussion of Chapter 1 with two more bombshells:

Joins

are left adjoints, and meets are right adjoints.

Left adjoints are right adjoints seen upside-down, and joins are meets seen upside-down.

This is a good example of how category theory works. You learn a bunch of concepts, but then you learn more and more facts relating them, which unify your understanding... until finally all these concepts collapse down like the core of a giant star, releasing a supernova of insight that transforms how you see the world!

Let me start by reviewing what we've already seen. To keep things simple let me state these facts just for posets, not the more general preorders. Everything can be generalized to preorders.

In Lecture 6 we saw that given a left adjoint \( f : A \to B\), we can compute its right adjoint using joins:

$$ g(b) = \bigvee \{a \in A : \; f(a) \le b \} . $$ Similarly, given a right adjoint \( g : B \to A \) between posets, we can compute its left adjoint using meets:

$$ f(a) = \bigwedge \{b \in B : \; a \le g(b) \} . $$ In Lecture 16 we saw that left adjoints preserve all joins, while right adjoints preserve all meets.

Then came the big surprise: if \( A \) has all joins and a monotone function \( f : A \to B \) preserves all joins, then \( f \) is a left adjoint! But if you examine the proof, you'l see we don't really need \( A \) to have

all joins: it's enough that all the joins in this formula exist:

$$ g(b) = \bigvee \{a \in A : \; f(a) \le b \} . $$Similarly, if \(B\) has all meets and a monotone function \(g : B \to A \) preserves all meets, then \( f \) is a right adjoint! But again, we don't need \( B \) to have

all meets: it's enough that all the meets in this formula exist:

$$ f(a) = \bigwedge \{b \in B : \; a \le g(b) \} . $$ Now for the first of today's bombshells: joins are left adjoints and meets are right adjoints. I'll state this for binary joins and meets, but it generalizes.

Suppose \(A\) is a poset with all binary joins. Then we get a function

$$ \vee : A \times A \to A $$ sending any pair \( (a,a') \in A\) to the join \(a \vee a'\). But we can make \(A \times A\) into a poset as follows:

$$ (a,b) \le (a',b') \textrm{ if and only if } a \le a' \textrm{ and } b \le b' .$$ Then \( \vee : A \times A \to A\) becomes a monotone map, since you can check that

$$ a \le a' \textrm{ and } b \le b' \textrm{ implies } a \vee b \le a' \vee b'. $$And you can show that \( \vee : A \times A \to A \) is the left adjoint of another monotone function, the

diagonal

$$ \Delta : A \to A \times A $$sending any \(a \in A\) to the pair \( (a,a) \). This diagonal function is also called

duplication, since it duplicates any element of \(A\).

Why is \( \vee \) the left adjoint of \( \Delta \)? If you unravel what this means using all the definitions, it amounts to this fact:

$$ a \vee a' \le b \textrm{ if and only if } a \le b \textrm{ and } a' \le b . $$ Note that we're applying \( \vee \) to \( (a,a') \) in the expression at left here, and applying \( \Delta \) to \( b \) in the expression at the right. So, this fact says that \( \vee \) the left adjoint of \( \Delta \).

Puzzle 45. Prove that \( a \le a' \) and \( b \le b' \) imply \( a \vee b \le a' \vee b' \). Also prove that \( a \vee a' \le b \) if and only if \( a \le b \) and \( a' \le b \).

A similar argument shows that meets are really right adjoints! If \( A \) is a poset with all binary meets, we get a monotone function

$$ \wedge : A \times A \to A $$that's the

right adjoint of \( \Delta \). This is just a clever way of saying

$$ a \le b \textrm{ and } a \le b' \textrm{ if and only if } a \le b \wedge b' $$ which is also easy to check.

Puzzle 46. State and prove similar facts for joins and meets of any number of elements in a poset - possibly an infinite number.

All this is very beautiful, but you'll notice that all facts come in pairs: one for left adjoints and one for right adjoints. We can squeeze out this redundancy by noticing that every preorder has an "opposite", where "greater than" and "less than" trade places! It's like a mirror world where up is down, big is small, true is false, and so on.

Definition. Given a preorder \( (A , \le) \) there is a preorder called its opposite, \( (A, \ge) \). Here we define \( \ge \) by

$$ a \ge a' \textrm{ if and only if } a' \le a $$ for all \( a, a' \in A \). We call the opposite preorder\( A^{\textrm{op}} \) for short.

I can't believe I've gone this far without ever mentioning \( \ge \). Now we finally have really good reason.

Puzzle 47. Show that the opposite of a preorder really is a preorder, and the opposite of a poset is a poset. Puzzle 48. Show that the opposite of the opposite of \( A \) is \( A \) again. Puzzle 49. Show that the join of any subset of \( A \), if it exists, is the meet of that subset in \( A^{\textrm{op}} \). Puzzle 50. Show that any monotone function \(f : A \to B \) gives a monotone function \( f : A^{\textrm{op}} \to B^{\textrm{op}} \): the same function, but preserving \( \ge \) rather than \( \le \). Puzzle 51. Show that \(f : A \to B \) is the left adjoint of \(g : B \to A \) if and only if \(f : A^{\textrm{op}} \to B^{\textrm{op}} \) is the right adjoint of \( g: B^{\textrm{op}} \to A^{\textrm{ op }}\).

So, we've taken our whole course so far and "folded it in half", reducing every fact about meets to a fact about joins, and every fact about right adjoints to a fact about left adjoints... or vice versa! This idea, so important in category theory, is called

duality. In its simplest form, it says that things come on opposite pairs, and there's a symmetry that switches these opposite pairs. Taken to its extreme, it says that everything is built out of the interplay between opposite pairs.

Once you start looking you can find duality everywhere, from ancient Chinese philosophy:

to modern computers:

But duality has been studied very deeply in category theory: I'm just skimming the surface here. In particular, we haven't gotten into the connection between adjoints and duality!

This is the end of my lectures on Chapter 1. There's more in this chapter that we didn't cover, so now it's time for us to go through all the exercises. |

X

Search Filters

Format

Subjects

Language

Publication Date

Click on a bar to filter by decade

Slide to change publication date range

Physics of Particles and Nuclei Letters, ISSN 1547-4771, 12/2018, Volume 15, Issue 7, pp. 720 - 723

Journal Article

2. Study of the process e + e − → π + π − π 0 η in the c.m. energy range 1394–2005 MeV with the CMD-3 detector

Physics Letters, Section B: Nuclear, Elementary Particle and High-Energy Physics, ISSN 0370-2693, 10/2017, Volume 773, pp. 150 - 158

Journal Article

3. Study of the process e+e−→K+K− in the center-of-mass energy range 1010–1060 MeV with the CMD-3 detector

Physics Letters B, ISSN 0370-2693, 04/2018, Volume 779, pp. 64 - 71

The process has been studied using events from a data sample corresponding to an integrated luminosity of 5.7 pb collected with the CMD-3 detector in the...

VEPP-2M COLLIDER | PHI | ANNIHILATION | ASTRONOMY & ASTROPHYSICS | PHYSICS, NUCLEAR | RADIATIVE-CORRECTIONS | CROSS-SECTION | PION | PAIR | PHYSICS, PARTICLES & FIELDS | Physics - High Energy Physics - Experiment | Physics | High Energy Physics - Experiment

VEPP-2M COLLIDER | PHI | ANNIHILATION | ASTRONOMY & ASTROPHYSICS | PHYSICS, NUCLEAR | RADIATIVE-CORRECTIONS | CROSS-SECTION | PION | PAIR | PHYSICS, PARTICLES & FIELDS | Physics - High Energy Physics - Experiment | Physics | High Energy Physics - Experiment

Journal Article

EPJ Web of Conferences, ISSN 2100-014X, 2019, Volume 212, p. 7002

Journal Article

5. An amplitude analysis of the process e+e-→ 4π in the center-of-mass energy range 900–2000 MeV with the CMD3 detector at the VEPP-2000 e+e- collider

EPJ Web of Conferences, ISSN 2100-014X, 2019, Volume 212, p. 3008

Journal Article

EPJ Web of Conferences, ISSN 2100-014X, 2019, Volume 212, p. 4008

The cross section of the process e+ e−→ KS KL π0 has been studied with the CMD-3 detector at the VEPP-2000 electron-positron collider in the center-of-mass...

Cross sections

Cross sections

Journal Article

EPJ Web of Conferences, ISSN 2100-014X, 2019, Volume 212, p. 4001

Regular data taking with the CMD-3 at the electron-positron collider VEPP-2000 is under way since 2010. The collected data sample corresponds to about 200...

Luminosity

Luminosity

Journal Article

8. Measurement of the hadronic cross sections with the CMD-3 and SND detectors at the VEPP-2000 collider

EPJ Web of Conferences, ISSN 2101-6275, 08/2018, Volume 182, p. 2068

Conference Proceeding

Physics Letters B, ISSN 0370-2693, 06/2013, Volume 723, Issue 1-3, pp. 82 - 89

Journal Article

EPJ Web of Conferences, ISSN 2101-6275, 11/2016, Volume 130, p. 01014

Conference Proceeding

Physics letters B, ISSN 0370-2693, 09/2014, Volume 740, pp. 273 - 277

A search for the process $\epem\to \eta^\prime(958)$ in the $\pipi\eta\to\pipi\gamma\gamma$ final state has been performed with the CMD-3 detector at the...

Physics - High Energy Physics - Experiment | High Energy Physics | High Energy Physics - Experiment | Nuclear and High Energy Physics | Nuclear Experiment | Experiment | Physics

Physics - High Energy Physics - Experiment | High Energy Physics | High Energy Physics - Experiment | Nuclear and High Energy Physics | Nuclear Experiment | Experiment | Physics

Journal Article

12. Measurement of the e+e−→K+K−π+π− cross section with the CMD-3 detector at the VEPP-2000 collider

Physics Letters B, ISSN 0370-2693, 05/2016, Volume 756, Issue C, pp. 153 - 160

Journal Article

13. Study of the process e+e− → 3(π+π−)π0 in the c.m. energy range 1.6–2.0 GeV with the CMD-3 detector

Physics Letters B, ISSN 0370-2693, 05/2019, Volume 792, pp. 419 - 423

The cross section of the process has been measured for the first time using a data sample of 56.7 pb collected with the CMD-3 detector at the VEPP-2000...

Journal Article

Physics Letters, Section B: Nuclear, Elementary Particle and High-Energy Physics, ISSN 0370-2693, 01/2015, Volume 740, pp. 273 - 277

A search for the process e e →Λ (958) in the π π Λ→π π γγ final state has been performed with the CMD-3 detector at the VEPP-2000 e e collider. Using an...

Journal Article

Physics of Particles and Nuclei Letters, ISSN 1547-4771, 12/2018, Volume 15, Issue 7, pp. 749 - 753

Journal Article

16. Study of the process e+e−→π+π−π+π− in the c.m. energy range 920–1060 MeV with the CMD-3 detector

Physics Letters B, ISSN 0370-2693, 05/2017, Volume 768, Issue C, pp. 345 - 350

Journal Article

17. Investigation of the processes e + e − → 2 (π+π−π0) and e + e − → 3 (π+π−) with the aid of the CMD-3 detector

Physics of Atomic Nuclei, ISSN 1063-7788, 5/2015, Volume 78, Issue 3, pp. 353 - 357

The cross sections for the processes e + e − → 2(π+π−π0) and e + e − → 3(π+π−) at c.m. energies in the range of 1.5–2 GeV were measured with the aid of the...

Physics | Particle and Nuclear Physics | CROSS SECTIONS | PHYSICS OF ELEMENTARY PARTICLES AND FIELDS | GEV RANGE | POSITRON BEAMS | ELECTRONS | VEPP-2 | ELECTRON BEAMS | PIONS MINUS | PIONS PLUS | POSITRONS | COLLIDING BEAMS | PIONS NEUTRAL

Physics | Particle and Nuclear Physics | CROSS SECTIONS | PHYSICS OF ELEMENTARY PARTICLES AND FIELDS | GEV RANGE | POSITRON BEAMS | ELECTRONS | VEPP-2 | ELECTRON BEAMS | PIONS MINUS | PIONS PLUS | POSITRONS | COLLIDING BEAMS | PIONS NEUTRAL

Journal Article

JETP Letters, ISSN 0021-3640, 9/2015, Volume 102, Issue 5, pp. 266 - 270

The sensitivity of the VEPP-2000 e + e − collider in the search for the rare decay η → e + e − has been studied. The inverse reaction e + e − → η is proposed...

Solid State Physics | Quantum Information Technology, Spintronics | Atomic, Molecular, Optical and Plasma Physics | Biophysics and Biological Physics | Physics | Particle and Nuclear Physics

Solid State Physics | Quantum Information Technology, Spintronics | Atomic, Molecular, Optical and Plasma Physics | Biophysics and Biological Physics | Physics | Particle and Nuclear Physics

Journal Article

19. Measurement of the e(+)e(-) -> K+K-pi(+)pi(-) cross section with the CMD-3 detector at the VEPP-2000 collider

PHYSICS LETTERS B, ISSN 0370-2693, 05/2016, Volume 756, pp. 153 - 160

Journal Article

Physics of Particles and Nuclei Letters, ISSN 1547-4771, 9/2014, Volume 11, Issue 5, pp. 651 - 655

Journal Article |

This may be a silly question, and so I apologize in advance. But it stems from a reading of section 2 (page 5) of the physics paper, "Counting chiral primaries in N=1 d=4 superconformal field theories".

My

question is: What does the representation of $U(1)$ labeled as $\frac{1}{2}$ indicate?

My question is a group-theory question, and is as such a math question, but for those unfamiliar with the context, here is some background.

The superconformal group for $\mathcal{N} = 1$ supersymmetry in $d = 4$ spacetime dimensions is $SU(2,2|1)$. We focus our attention on a particular subgroup of $SU(2,2|1)$, called the maximal bosonic subgroup: $SU(2,2) \times U(1)_R$. The $U(1)_R$ is known as an R-symmetry group in physics. The generators of supersymmetry (the ''supercharges'') $Q$ and $Q^\dagger$ belong to representations $4_1$ and $\bar{4}_{-1}$ of $SU(2,2) \times U(1)_R$. The subscript denotes the $U(1)_R$ representation ($1$ is the fundamental, $-1$ is the anti-fundamental) and the $4$ and $\bar{4}$ are $SU(2,2)$ representations. So, so far we are labeling everything in terms of irreps of the maximal bosonic subgroup. The conformal group in $d = 4$ spacetime dimensions is $SO(4,2)$, which has a covering group $SU(2,2)$. We want to study a quantum field theory not in 4-dimensional Minkowski ("flat") space but on the space $\mathbb{R} \times S^3$. So one is interested in the Killing spinors of this space, and the isometries. Based on (4) and (5), we restrict our attention to the subgroup $U(1) \times SO(4)$ of the conformal group, which is the isometry group of $\mathbb{R} \times S^3$. The idea then is to decompose the generators in terms of representations of the isometry group $SU(2)_l \times SU(2)_r \times U(1)$ and the R-symmetry $U(1)_R$. Here we've used the fact that $\mathbb{so(4)} = \mathbb{su}(2)_l \times \mathbb{su}(2)_r$.

The claim is

$$4_1 \longrightarrow (2,1)_{\frac{1}{2},1} \oplus (1,2)_{-\frac{1}{2},1}$$ $$\bar{4}_{-1} \longrightarrow (2,1)_{-\frac{1}{2},-1} \oplus (1,2)_{\frac{1}{2},-1}$$

There are now

two $U(1)$ subscripts: the first is for the $U(1)$ which is part of the isometry group, and the second is for the $U(1)_R$ which is the R-symmetry group. Note that the $U(1)_R$ subscript is the same on each term on the right hand side and carries over from the left.

The question posed above pertains to the

first subscript in the above decomposition, i.e. the representation of the $U(1)$ which is part of the isometry group of the manifold.

I know that an element in $U(1)$ is represented by $e^{i\theta}$ and moreover, $U(1)$ is isomorphic to $SO(2)$. The latter made me think of the irreducible spinor representation of $SO(2)$, but that too is a real 1-dimensional representation (2d Dirac spinor with 2 complex components, but the Majorana-Weyl condition brings it down to 1 real component).

Also, if $U(1)$ is parametrized by $\left(\begin{array}{cc}\cos n\theta & \sin n\theta\\-\sin n\theta & \cos n\theta\end{array}\right)$, does $\frac{1}{2}$ simply mean that $n = 1/2$ in this representation, so that effectively, its a half rotation? |

In the comments section to Willie Wong's answer, the following Dirichlet series came up: the Riemann $\zeta$-function, Dirichlet $L$-functions, and Ramanujan's series $\sum_{n \geq 1}\tau(n) n^{-s}$, where $\tau(n)$ is the coefficient of $q^n$ in $\Delta(q) = q\prod_{n=1}^{\infty} (1-q^n)^{24}$.

First note that the $\zeta$-function is a special case of a Dirichlet $L$-function (it is the $L$-function of the trivial character).

Now what is it that Dirichlet $L$-functions and Ramanujan's series have in common? Well, they are all automorphic $L$-functions.

An automorphic form (for the group $\mathrm{GL}_n$ over $\mathbb Q$; there are generalizations where $\mathbb Q$ is replaced by an arbitrary number field $F$ and $\mathrm{GL}_n$ is replaced by an arbitrary reductive group, but to simplify the explanations, I will focus just on the simplest level of generality here) is a function on the product $\mathrm{GL}_n(\mathbb R)\times \mathrm{GL}_n(\mathbb Z/N\mathbb Z)$ for some integer $N \geq 1$ whichis

invariant under the natural (diagonal) action of $\mathrm{GL}_n(\mathbb Z)$;

grows moderately at infinity with respect to the $\mathrm{GL}_n(\mathbb R)$-coordinates;

satisfies a suitable differential equation in the $\mathrm{GL}_n(\mathbb R)$-coordinates.

Rather than explaining the generalities in more detail (they can be found in many places), I think it's better to illustrate them:

E.g. Dirichlet characters arise in the case $n = 1$: they are defined as functionson $(\mathbb Z/N\mathbb Z)^{\times} =: \mathrm{GL}_1(\mathbb Z/N\mathbb Z)$,and so we can make them into functions on $\mathrm{GL}_1(\mathbb R)\times

\mathrm{GL}_1(\mathbb Z/N\mathbb Z)$ by defining them to be trivial on the$\mathbb R^{\times}$-coordinate.

E.g. If $f(\tau)$ is a holomorphic modular form of weight $k$ and level one (where $\tau$ is an upper half-plane variable as usual), we can make $f$ into a function on$\mathrm{GL}_2(\mathbb R)$ by first identifying this matrix group with the collection of bases of $\mathbb R^2$, then identifying $\mathbb R^2$ with $\mathbb C$,and then defining, for any $\mathbb R$-basis $\omega_1,\omega_2$ of $\mathbb C$,$f(\omega_1,\omega_2) := \omega_2^{-k} f(\omega_1/\omega_2)$. (This presumesthat $\omega_1/\omega_2$ is in the upper half-plane rather than the lower,for simplicity.) Thus we get a function of the required kind (with $N = 1$).

The usual modularity condition becomes invariance under $\mathbb GL_2(\mathbb Z)$. The moderate growth condition becomes the condition that the Fourier expansion of $f$ involves only non-negative powers of $e^{2 \pi i \tau}$. The differential equation is the Cauchy--Riemann equation expressing holomorphy of $f$.

Higher level modular forms will involve values of $N$ that are $> 1$.

E.g. Maass forms are similar to the preceding example, except that now thedifferential equation expresses that a Maass form is an eigenvector of the Laplacian.

For any fixed $n$ and fixed $N$, we have Hecke operators acting on the spaceof automorphic forms, labelled by primes $p$ not dividing $N$, and so we canconsider Hecke eigenforms. In the case of Dirichlet characters, the fact that they are

characters of $(\mathbb Z/N\mathbb Z)^{\times}$ (rather than just arbitrary functions) can be reinterpreted as saying that they are Hecke eigenforms.

Of course, Ramanujan's $\Delta$ is well-known to be a Hecke eigenform of weight $12$ and level $1$.

Given an automorphic Hecke eigenform we can use the Hecke eigenvalues to makean Euler product Dirichlet series, which will give Dirichlet $L$-functionsfor Dirichlet characters, and Ramanujan's Dirichlet series for $\Delta$.(In the Dirichlet character case, if a prime $p$ divides the conductor $N$, we just have a trivial factor in the Euler product for that prime; when $n > 1$,and $N > 1$, it is a bit more of a battle to figure out what Euler factorsto put in at the primes dividing $p$, but it can be done.)

Actually, it is better to restrict to

cuspidal automorphic Hecke eigenforms.Cuspidal is a vacuous condition when $n = 1$ (i.e. in that case we agree to calleverything cuspidal), and when $n > 1$ we replace "moderate growth at infinity" by "rapid decay at infinity", suitably interpreted. I'll assume that all my eigenforms are cuspidalform now on. (E.g. $\Delta$ is cuspidal.)

In this way we get a natural class of $L$-functions which have:

meromorphic continuation to the whole complex plane, which is in factholomorphic with the sole exception of Riemann's $\zeta$.

Functional equation with completely understood $\Gamma$-factors. E.g. for aweight $k$ modular form of level one, if the $p$th Hecke eigenvalue is $a_p$,then the $L$-function is $\prod_p (1 - a_p p^{-s} + p^{k - 1 - 2s})^{-1},$ and the functional equation relates $s$ and $k - s$. For $\Delta,$ I've already noted that $k = 12$. (In general the functional equation relates the $L$-series of an automorphic eigenform with $L$-series of its "complex conjugate" suitably understood, just as in the case of Dirichlet characters that are not necessarily real valued.)

Conjecturally, they should all satisfy the analogue of RH, i.e. all non-trivial zeroes should lie on the critical line, in the centre of the critical strip.

Note incidentally that it is easy to change the apparent form of the functional equation. E.g. if we make a change of variable $s \mapsto s + 11/2$ in Ramanujan's series, then the functional equation will become $s \mapsto 1 -s$rather than $s \mapsto 12 -s $, and the critical line will be $\Re s = 1/2$, just as in the $\zeta$-function case.

All cuspidal automorphic $L$-functions can be renormalized in a similar way, so that the symmetry of the functional equation is $s \mapsto 1 -s$. This is called

unitary normalization, and is common in the automorphic forms literature.

Up to rescaling, there are only countably many automorphic eigenforms altogether (just because if we fix the level $N$ and (appropriately generalized version of) the weight the space of automorphic forms is finite dimensional) and so altogether we are talking about a very special class of just countably many Dirichlet series, but these seem to be the ones that naturally generalize $\zeta(s)$.

By the way, this general point of view is due to Langlands, and forms a part of the general Langlands program.

Another point of view was given by Selberg, which focuses more on capturing the analytic properties necessary for getting good properties of a Dirichlet series, rather than beginning from a conceptual construction (as in the automorphic point of view). Namely, he introduced the Selberg class of Dirichlet series. Note that part of his axioms are an Euler product, analytic continuation, and functional equation.

My sense is, though, that people expect the Selberg class of Dirichlet series to more-or-less coincide with the class of automorphic $L$-functions, so I think it is just two points of view on the same question: Langlands is showing how to

construct "good" Dirichlet series, and Selberg is writing down the properties a "good" Dirichlet series should satisfy. It turns out that "good" Dirichlet series are so special, though, that however you try to pick them out, you seem to end up with the same very special collection, namely the automorphic ones. |

I'm working on a homework assignment where my professor would like us to create a true regression model, simulate a sample of data and he's going to attempt to find our true regression model using some of the techniques we have learned in class. We likewise will have to do the same with a dataset he's given us.

He says that he's been able to produce a pretty accurate model for all past attempts to try and trick him. There have been some students that create some insane model but he arguably was able to produce a simpler model that was just sufficient.

How can I go about developing a tricky model for him to find? I don't want to be super cheap by doing 4 quadratic terms, 3 observations, and massive variance? How can I produce a seemingly innocuous dataset that has a tough little model underneath it?

He simply has 3 Rules to follow:

Your dataset must have one "Y" variable and 20 "X" variables labeled as "Y", "X1", ..., "X20".

Your response variable $Y$ must come from a linear regression model that satisfies:

$$ Y_i^\prime = \beta_0 + \beta_1 X_{i1}^\prime + \ldots + \beta_{p-1}X_{i,p-1}^\prime + \epsilon_i $$ where $\epsilon_i \sim N(0,\sigma^2)$ and $p \leq 21$.

All $X$-variables that were used to create $Y$ are contained in your dataset.

It should be noted, not all 20 X variables need to be in your real model

I was thinking of using something like the Fama-French 3 Factor Model and having him start with the stock data (SPX and AAPL) and have to transform those variables to the continuously compounded returns in order to obsfucate it a little more. But that leaves me with missing values in the first observation and it's time series (which we haven't discussed in class yet).

Unsure if this is the proper place to post something like this. I felt like it could generate some good discussion.

Edit: I'm also not asking for "pre-built" models in particular. I'm more curious about topics/tools in Statistics that would enable somebody to go about this. |

Korn inequality