text stringlengths 8 6.05M |

|---|

#!/bin/env python3

# Search algorithms

#from . import map_utils as utils

import heapq

def backtracking(parents, agent_cord, goal_cord):

path = [goal_cord]

while path[-1] != agent_cord:

path.append(parents[path[-1]])

path.reverse()

return path

def manhattan_heuristic_function(agent_cord, goal_cord):

return abs(agent_cord[0] - goal_cord[0]) + abs(agent_cord[1] - goal_cord[1])

def a_star_search(the_map, agent_cord, goal_cord, is_ghost=False):

parents = {}

explored = []

frontier = []

h_start = manhattan_heuristic_function(agent_cord, goal_cord)

heapq.heappush(frontier, (h_start, (h_start, agent_cord)))

while True:

if not frontier:

return None

current = heapq.heappop(frontier)

if current[1][1] in explored:

continue

explored.append(current[1][1])

if current[1][1] == goal_cord:

for p in parents:

parents[p] = parents[p][0]

return backtracking(parents, agent_cord, goal_cord)

adjacents = the_map.get_adjacents(current[1][1], not is_ghost)

for adj in adjacents:

if adj not in explored:

current_path_cost = current[0] - manhattan_heuristic_function(current[1][1], goal_cord)

h_adj = manhattan_heuristic_function(adj, goal_cord)

adjacent_priority = current_path_cost + 1 + h_adj

heapq.heappush(frontier, (adjacent_priority, (h_adj, adj)))

if adj not in parents or parents[adj][1] > adjacent_priority:

parents[adj] = [current[1][1], adjacent_priority]

# Unnecessary search

def breadth_first_search(the_map, agent_cord, goal_cord):

parents = {}

explored = []

frontier = [agent_cord]

while True:

if not frontier:

return None

current = frontier.pop(0)

if current in explored:

continue

explored.append(current)

if current == goal_cord:

return backtracking(parents, agent_cord, goal_cord)

adjacents = the_map.get_adjacents(current[1])

for adj in adjacents:

if adj not in explored and adj not in frontier:

frontier.append(adj)

parents[adj] = current |

'''

FileServer is a library with a file-like interface for reading and writing,

most of which is exposed by the BundleRPCServer for calling remotely.

The core method that opens files handles, open_file, is NOT exposed as an RPC

method for security reasons. Instead, alternate methods for opening files (such

as open_temp_file) are exposed by this class. These methods all return a file

uuid, which is like a Unix file descriptor.

The other methods, such as read_file, write_file, and close_file, are exposed

as RPC methods. These methods take a file uuid in addition to their regular

arguments, and they perform the requested operation on the file handle

corresponding to that uuid.

'''

import os

import tempfile

import uuid

import xmlrpclib

from codalab.lib import path_util

class FileServer(object):

def __init__(self):

# Keep a dictionary mapping file uuids to open file handles and a

# dictionary mapping temporary file's file uuids to their absolute paths.

self.file_paths = {}

self.file_handles = {}

self.delete_file_paths = {}

def open_temp_file(self, name):

'''

Open a new temp file with given |name| for writing and return a file

uuid identifying it. Put the file in a temporary directory so the file

can have the desired name.

'''

base_path = tempfile.mkdtemp('-file_server_open_temp_file')

path = os.path.join(base_path, name)

file_uuid = uuid.uuid4().hex

self.file_paths[file_uuid] = path

self.file_handles[file_uuid] = open(path, 'wb')

self.delete_file_paths[file_uuid] = base_path

return file_uuid

def manage_handle(self, handle):

'''

Take a handle to manage and return a file uuid identifying it.

'''

file_uuid = uuid.uuid4().hex

self.file_handles[file_uuid] = handle

return file_uuid

def read_file(self, file_uuid, num_bytes=None):

'''

Read up to num_bytes from the given file uuid. Return an empty buffer

if and only if this file handle is at EOF.

'''

file_handle = self.file_handles[file_uuid]

return xmlrpclib.Binary(file_handle.read(num_bytes))

def write_file(self, file_uuid, buffer):

'''

Write data from the given binary data buffer to the file uuid.

'''

file_handle = self.file_handles[file_uuid]

file_handle.write(buffer.data)

def close_file(self, file_uuid):

'''

Close the given file uuid.

'''

file_handle = self.file_handles[file_uuid]

file_handle.close()

def finalize_file(self, file_uuid):

'''

Remove the record from the file server.

'''

self.file_paths.pop(file_uuid, None)

self.file_handles.pop(file_uuid, None)

path = self.delete_file_paths.pop(file_uuid, None)

if path:

path_util.remove(path)

|

#!/usr/bin/python

import numpy as np

import pylab as py

from COMMON import grav, light, hub0, yr, mpc, msun, week, h0, omm, omv

import COMMON as CM

from Formulas_AjithEtAl2008 import apar, bpar, cpar, xpar, ypar, zpar, kpar

import Formulas_AjithEtAl2008 as A8

from scipy import interpolate

factor=1.

detector='ALIGO'

tobs=0.05

mch=1.

m=mch*2.**(1./5.)

nu=1./4.

mtot=2.*m

fbins=1000

finteg=100

z=np.array([2.])

zall=np.linspace(0.01,20.,100)

snrall=np.zeros(len(zall))

def htilde_f(nu, mtot, z, lumdist, fvec):

''''''

mch=nu**(3./5.)*mtot

fmer=A8.f_mer(nu, mtot)*1./(1.+z)

frin=A8.f_rin(nu, mtot)*1./(1.+z)

fcut=A8.f_cut(nu, mtot)*1./(1.+z)

fsig=A8.f_sig(nu, mtot)*1./(1.+z)

camp=CM.htilde_f(mch, z, lumdist, fmer)

aeff=np.zeros(np.shape(fvec))

selecti=(fvec<fmer)

aeff[selecti]+=A8.aeff_low(fvec[selecti], fmer, camp)

selecti=(fvec>=fmer)&(fvec<frin)

aeff[selecti]+=A8.aeff_mid(fvec[selecti], fmer, camp)

selecti=(fvec>=frin)&(fvec<fcut)

aeff[selecti]+=A8.aeff_upp(fvec[selecti], fmer, frin, fsig, camp)

return aeff

def snr_mat_f(mchvec, reds, lum_dist, fmin, fmax, fvec, finteg, tobs, sn_f):

''''''

mch_fmat=np.transpose(np.tile(mchvec, (len(reds), len(fvec), finteg, 1) ), axes=(0,3,1,2))

z_fmat=np.transpose(np.tile(reds, (len(mchvec), len(fvec), finteg, 1) ),axes=(3,0,1,2))

f_fmat=np.transpose(np.tile(fvec, (len(reds), len(mchvec), finteg, 1) ), axes=(0,1,3,2))

finteg_fmat=np.transpose(np.tile(np.arange(finteg), (len(reds), len(mchvec), len(fvec), 1) ), axes=(0,1,2,3))

stshape=np.shape(z_fmat) #Standard shape of all matrices that I will use.

DL_fmat=np.transpose(np.tile(lum_dist, (len(mchvec), len(fvec), finteg, 1) ),axes=(3,0,1,2)) #Luminosity distance in Mpc.

flim_fmat=A8.f_cut(1./4., 2.*mch_fmat*2.**(1./5.))*1./(1.+z_fmat) #The symmetric mass ratio is 1/4, since I assume equal masses.

flim_det=np.maximum(np.minimum(fmax, flim_fmat), fmin) #The isco frequency limited to the detector window.

tlim_fmat=CM.tafter(mch_fmat, f_fmat, flim_fmat, z_fmat)

#By construction, f_mat cannot be smaller than fmin or larger than fmax (which are the limits imposed by the detector).

fmin_fmat=np.minimum(f_fmat, flim_det) #I impose that the minimum frequency cannot be larger than the fisco.

fmaxobs_fmat=flim_det.copy()

#fmaxobs_fmat=fmin_fmat.copy()

fmaxobs_fmat[tobs<tlim_fmat]=CM.fafter(mch_fmat[tobs<tlim_fmat], z_fmat[tobs<tlim_fmat], f_fmat[tobs<tlim_fmat], tobs)

fmax_fmat=np.minimum(fmaxobs_fmat, flim_det) #The maximum frequency (after an observation tobs) cannot exceed fisco or the maximum frequency of the detector.

integconst=(np.log10(fmax_fmat)-np.log10(fmin_fmat))*1./(finteg-1)

finteg_fmat=fmin_fmat*10**(integconst*finteg_fmat)

sn_vec=sn_f(fvec)##########

sn_fmat=sn_f(finteg_fmat) #Noise spectral density.

#htilde_fmat=A8.htilde_f(1./4., 2.*mch_fmat*2**(1./5.), z_fmat, DL_fmat, f_fmat)

htilde_fmat=A8.htilde_f(1./4., 2.*mch_fmat*2**(1./5.), z_fmat, DL_fmat, finteg_fmat)

#py.loglog(finteg_fmat[0,0,:,0],htilde_fmat[0,0,:,0]**2.)

#py.loglog(finteg_fmat[0,0,:,0],sn_fmat[0,0,:,0])

snrsq_int_fmat=4.*htilde_fmat**2./sn_fmat #Integrand of the S/N square.

snrsq_int_m_fmat=0.5*(snrsq_int_fmat[:,:,:,1:]+snrsq_int_fmat[:,:,:,:-1]) #Integrand at the arithmetic mean of the infinitesimal intervals.

df_fmat=np.diff(finteg_fmat, axis=3) #Infinitesimal intervals.

snr_full_fmat=np.sqrt(np.sum(snrsq_int_m_fmat*df_fmat,axis=3)) #S/N as a function of redshift, mass and frequency.

fopt=fvec[np.argmax(snr_full_fmat, axis=2)] #Frequency at which the S/N is maximum, for each pixel of redshift and mass.

snr_opt=np.amax(snr_full_fmat, axis=2) #Maximum S/N at each pixel of redshift and mass.

snr_min=snr_full_fmat[:,:,0]

return snr_opt

for zeti in xrange(len(zall)):

z=np.array([zall[zeti]])

DL=CM.comdist(z)*(1.+z) #Luminosity distance in Mpc.

fvecd, sn=CM.detector_f(detector, factor)

sn_f=interpolate.interp1d(fvecd,sn)

fmin=min(fvecd)*1.000001

fmax=max(fvecd)*0.99999

fvec=np.logspace(np.log10(fmin), np.log10(fmax), fbins)

sred=sn_f(fvec)

htilde=htilde_f(nu, mtot, z, DL, fvec)

integrand=4.*htilde**2./sred

#py.loglog(fvec,integrand)

snr=np.sqrt(np.trapz(integrand,fvec))

snrmat=snr_mat_f(np.array([mch]), z, DL, fmin, fmax, fvec, finteg, tobs, sn_f)

snrall[zeti]=snrmat

py.ion()

#py.loglog(fvec, htilde**2.)

#py.loglog(fvec,sred)

py.loglog(zall, snrall)

raw_input('enter')

integrand=4.*htilde**2./sred

#py.loglog(fvec,integrand)

snr=np.sqrt(np.trapz(integrand,fvec))

snrmat=snr_mat_f(np.array([mch]), z, DL, fmin, fmax, fvec, finteg, tobs, sn_f)

print snr

print snrmat

print np.sqrt(np.sum(integrand[:-1]*np.diff(fvec)))

exit()

mvec=np.logspace(-11.,1.,100) #Vector of total mass.

nueq=1./4. #nu for equal masses.

fmervec=A8.f_mer(nueq, mvec)

frinvec=A8.f_rin(nueq,mvec)

flsovec=CM.felso(mvec*0.5, mvec*0.5)

#print fmervec*1./flsovec

#print frinvec*1./flsovec

#py.loglog(mvec, flsovec)

#py.loglog(mvec, fmervec)

#py.loglog(mvec, frinvec)

#raw_input('enter')

xmat=np.tile(xpar, (len(fvec),1))

ymat=np.tile(xpar, (len(fvec),1))

zmat=np.tile(xpar, (len(fvec),1))

kmat=np.tile(kpar, (len(fvec),1))

fmat=np.transpose(np.tile(fvec, (len(xpar),1)),axes=(1,0))

nu=A8.nu_f(m1,m2)

mtot=m1+m2

mch=CM.mchirp(m1, m2)

t0=0.017

phi0=0.

#pvec=phase_eff(nu, mtot, t0, phi0, fmat, xmat, ymat, zmat, kmat)

pvec=A8.phase_eff(nu, mtot, t0, phi0, fvec)

pvecold=CM.phase_f(mch, 0., 0., fvec)

fmer=A8.f_mer(nu, mtot)

frin=A8.f_rin(nu, mtot)

fcut=A8.f_cut(nu, mtot)

fsig=A8.f_sig(nu, mtot)

flso=CM.felso(m1,m2)

print

print fmer

print flso

py.ion()

fcomp=1.*flso

#tmer=tafter(nu, mtot, t0, xmat, ymat, zmat, kmat, fmer, fmat)

tmer=abs(A8.tafter(nu, mtot, t0, fcomp, fvec))###########

#Compare this to the typical (inspiral only) calculation.

#tmerold=np.zeros(len(fvec))

#tmerold[fvec<flso]=CM.tafter(mch, fvec, flso, 0)

tmerold=CM.tafter(mch, fvec, fcomp, 0)

#tcoalold=np.zeros(len(fvec))

print

py.clf()

py.loglog(fvec, tmer*1.8)

py.loglog(fvec, tmerold)

raw_input('enter')

py.clf()

py.subplot(2,1,1)

py.loglog(fvec, abs(pvec))

#py.loglog(fvec, abs(pvecold))

py.subplot(2,1,2)

fvec_low=np.zeros(len(fvec))

fvec_mid=np.zeros(len(fvec))

fvec_upp=np.zeros(len(fvec))

fvec_low[fvec<fmer]=fvec[fvec<fmer]

fvec_mid[(fmer<=fvec)&(fvec<frin)]=fvec[(fmer<=fvec)&(fvec<frin)]

fvec_upp[(frin<=fvec)&(fvec<fcut)]=fvec[(frin<=fvec)&(fvec<fcut)]

camp=A8.c_amp(nu, mtot, dist, fmer)

alow=np.zeros(len(fvec))

amid=np.zeros(len(fvec))

aupp=np.zeros(len(fvec))

alow[fvec_low>0]=A8.aeff_low(fvec_low[fvec_low>0], fmer, camp)

amid[fvec_mid>0]=A8.aeff_mid(fvec_mid[fvec_mid>0], fmer, camp)

aupp[fvec_upp>0]=A8.aeff_upp(fvec_upp[fvec_upp>0], fmer, frin, fsig, camp)

aeff=alow+amid+aupp

wave=aeff*np.cos(pvec)

#py.plot(fvec, wave)

py.loglog(fvec, aeff)

#py.plot(fvec, pvec)

raw_input('enter')

|

#build watershed image set

#last update 4/23/2014

import urllib

widList = [1, 2, 3, 4, 5, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22, 23, 24, 25, 26, 27, 28, 29, 30, 31, 32, 33, 34, 35, 36, 37, 38, 39, 40, 41, 42, 43, 44, 46, 47, 48, 49, 50, 51, 52, 53, 54, 55, 56, 57, 58, 59, 60, 61, 62, 63, 65, 66, 67, 68, 69, 70, 71, 72, 73, 74, 76, 77, 78, 79, 80, 81, 82, 83, 84]

# 75 was deleted due to a change in the watershed dataset

for wid in widList:

fileTemplate = "C:/OSGeo4w/apps/watershed/output/wshd_" + str(wid) + ".png"

urlTemplate = "http://127.0.0.1:8080/cgi-bin/mapserv.exe?map=/OSGeo4w/apps/watershed/mapfiles/ws-new-images.map&mode=map&wid=" + str(wid)

urllib.urlretrieve(urlTemplate,fileTemplate)

print "Done" |

from flask import Flask, g, render_template, request, send_from_directory

from sounds import controller as c_sounds

from sounds.model import sounds_dir

from helpers import languages

import sqlite3

import os

app = Flask(__name__)

@app.route('/', methods=['GET'])

def index():

return render_template('index.html', languages=languages.sort_by_value())

@app.route('/sounds', methods=['POST'])

@app.route('/sounds/<idd>', methods=['GET'])

def sounds(idd=None):

if request.method == 'GET':

return c_sounds.get_sound(idd)

elif request.method == 'POST':

return c_sounds.create()

@app.route('/results', methods=['GET'])

def results():

if request.method == 'GET':

return render_template('results.html')

@app.route('/static/sounds/<path:filename>', methods=['GET'])

def download_sound(filename):

return send_from_directory(sounds_dir, filename.encode('utf-8'), as_attachment=True)

current_dir = os.path.abspath(os.path.dirname(__file__))

DATABASE = os.path.join(current_dir, 'sounds.db')

def connect_db():

return sqlite3.connect(DATABASE)

def get_db():

db = getattr(g, 'db', None)

if db is None:

db = g._database = connect_db()

return db

@app.before_request

def before_request():

g.db = get_db()

@app.teardown_appcontext

def close_connection(exception):

db = getattr(g, 'db', None)

if db is not None:

db.close()

if __name__ == '__main__':

app.run(debug=True)

|

#!/usr/bin/env python3

import sys

import math

try:

fp= open("input.txt","r")

fuel= 0

part1_fuel=0

lines= fp.readlines()

for l in lines:

try:

i= int(l.strip())

new_fuel= math.floor(i/3)-2

fuel+= new_fuel

part1_fuel+= new_fuel

while new_fuel >= 9:

new_fuel= math.floor(new_fuel/3)-2

fuel+= new_fuel

except Exception as e:

print(str(e)+" Unexpected non int " + l)

sys.exit()

print(part1_fuel,fuel)

finally:

fp.close()

|

from PIL import Image

from scipy import misc

from keras.constraints import maxnorm

from keras.models import Sequential

from keras.layers import Dense, Flatten, Conv2D, Dropout, MaxPooling2D

import matplotlib.image as mpimg

import numpy as np

import tensorflow as tf

import cv2

import os

"""

Data collection and preprocessing

"""

# An array containing the possible classifications

class_names = ['drawings', 'engraving', 'iconography', 'painting', 'sculpture']

# Collects image data and label pairs from a specified directory

def collect_data(img_dir):

x = []

y = []

class_count = 0

classes = os.listdir(img_dir)

for image_class in classes:

imgs = os.listdir(img_dir + "\\" + image_class)

for image in imgs:

img = cv2.imread(img_dir + "\\" + image_class + "\\" + image)

img = cv2.resize(img, (64,64))

x.append(img)

y.append(class_count)

class_count += 1

return [x,y]

# Raw training data parsed from images

training_data = collect_data("Images")

train_x = training_data[0]

train_y = training_data[1]

# Final numpy arrays for training

training_images = np.array(train_x) / 255.0

training_labels = np.array(train_y)

# Raw testing data parsed from images

testing_data = collect_data("Validation_Images")

test_x = testing_data[0]

test_y = testing_data[1]

# Final numpy arrays for testing

testing_images = np.array(test_x)

testing_labels = np.array(test_y)

# Prints shape of training data

print("Shape of training data:")

print(training_images.shape)

print(training_labels.shape)

"""

Keras model creation, data training, and testing

"""

# Creates a location to save training checkpoints along with the model

cp_path = "Trained_Model\\cp.ckpt"

cp_dir = os.path.dirname(cp_path)

# Establishes the checkpoint callback for saving the model in parts

cp_callback = tf.keras.callbacks.ModelCheckpoint(cp_path, save_weights_only=True, verbose=1)

# Creates a Sequential tensorflow neural network under the keras framework

model = Sequential()

# Adding layers to the model

model.add(Conv2D(32, (3, 3), input_shape=(64, 64, 3), padding='same', activation='relu', kernel_constraint=maxnorm(3)))

model.add(Dropout(0.2))

model.add(Conv2D(32, (3, 3), activation='relu', padding='same', kernel_constraint=maxnorm(3)))

model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Flatten())

model.add(Dense(512, activation='relu', kernel_constraint=maxnorm(3)))

model.add(Dense(5, activation='softmax'))

# Compiles the model together, the last step to establishing the neural network

model.compile(optimizer='rmsprop', loss='sparse_categorical_crossentropy', metrics=['accuracy'])

# Trains the values

model.fit(training_images, training_labels, epochs=10, batch_size=32, shuffle=True, callbacks=[cp_callback])

# Evaluates the testing samples

test_loss, test_acc = model.evaluate(testing_images, testing_labels, verbose=0)

print("Test accuracy:", test_acc)

print("Test loss:", test_loss)

predictions = model.predict(testing_images)

print(predictions[0])

print(class_names[np.argmax(predictions[0])])

print("Saving model to 'Trained_Model\\model.h5'")

model.save("Trained_Model\\model.h5")

print("Done!") |

from flask import Blueprint, redirect, render_template, url_for, flash, session, request

from .__init__ import db

roles = Blueprint('roles', __name__, template_folder='templates', static_folder='static')

@roles.route('/roles/edit')

def editRoles():

if not session:

return redirect(url_for('auth.login'))

cur = db.connection.cursor()

cur.execute('call getProfile(%s)', [session['id']])

profileInfo = cur.fetchone()

if profileInfo[5] != "Administrativo":

flash('No se tienen los permisos suficientes para acceder a esta página', 'alert')

return redirect(url_for('main.index'))

cur.execute('call getRoleless')

roleless = cur.fetchall()

cur.close()

return render_template('rolesEdit.html',roleless=roleless, search='')

@roles.route('/roles/edit', methods=['POST'])

def editRolesPost():

print(session)

cedula = request.form['cedula']

cur = db.connection.cursor()

cur.execute('call getRolelessSearch(%s)', [cedula])

roleless = cur.fetchall()

cur.close()

return render_template('rolesEdit.html',roleless=roleless, search=cedula, session=session)

@roles.route('/roles/giveRole')

def giveRole():

if not session:

return redirect(url_for('auth.login'))

cedula = request.args.get('cedula')

cur = db.connection.cursor()

cur.execute('call getProfile(%s)', [cedula])

profileInfo = cur.fetchone()

return render_template('giveRole.html', profileInfo=profileInfo)

@roles.route('/roles/giveRole', methods=['POST'])

def giveRolePost():

cedula = request.args.get('cedula')

rol = request.form['rol']

cur = db.connection.cursor()

cur.execute('call giveRole(%s,%s)', [cedula,rol])

db.connection.commit()

cur.close()

return redirect(url_for('roles.roleFiller',cedula=cedula, rol=rol))

@roles.route('/roles/roleFiller')

def roleFiller():

if not session:

return redirect(url_for('auth.login'))

cedula = request.args.get('cedula')

rol = request.args.get('rol')

cur = db.connection.cursor()

cur.execute('select nombre from perfiles where cedula = %s', [cedula])

nombre = cur.fetchone()

# Queries para el médico Especialidades y Horarios

if rol == 'Médico':

cur.execute('call getEspec()')

especialidades = cur.fetchall()

cur.execute('call getSchedule()')

horarios = cur.fetchall()

cur.close()

return render_template('roleFiller.html', cedula=cedula, nombre=nombre, rol=rol, especialidades=especialidades, horarios=horarios)

elif rol == 'Enfermero' or rol == 'Ingeniero' or rol == 'Servicios' or rol == 'Administrativo':

cur.execute('call getSchedule()')

horarios = cur.fetchall()

cur.close()

return render_template('roleFiller.html', cedula=cedula, nombre=nombre, rol=rol, horarios=horarios)

elif rol == 'Paciente':

cur.execute('select * from EPS')

eps = cur.fetchall()

cur.close()

return render_template('roleFiller.html', cedula=cedula, nombre=nombre, rol=rol, eps=eps)

# This literally, should never happen, if so, be scared.

return render_template('roleFiller.html', cedula=cedula, rol=rol)

@roles.route('/roles/roleFiller', methods=['POST'])

def roleFillerPost():

cedula = request.args.get('cedula')

print(cedula)

cur = db.connection.cursor()

if request.args.get('rol') == 'Médico':

especialidad = request.form['especialidad']

horario = request.form['horario']

cur.execute('call getMedicProfile(%s)',[cedula])

doubleChecker = cur.fetchall()

if len(doubleChecker) != 0:

cur.execute('update medico set idEspecialidad=%s, idHorario=%s where cedula==%s', [especialidad,horario,cedula])

flash('Médico actualizado correctamente', 'ok')

else:

cur.execute('insert into medico (cedula,idEspecialidad,idHorario) values (%s,%s,%s)',[cedula,especialidad,horario])

flash('Médico creado correctamente', 'ok')

db.connection.commit()

elif request.args.get('rol') == 'Enfermero':

horario = request.form['horario']

cur.execute('call getNurseProfile(%s)',[cedula])

doubleChecker = cur.fetchall()

if len(doubleChecker) != 0:

cur.execute('update enfermeras set idHorario = %s where cedula = %s', [horario,cedula])

flash('Enfermero actualizado correctamente', 'ok')

else:

cur.execute('insert into enfermeras (cedula, idHorario) values (%s,%s)', [cedula,horario])

flash('Enfermero creado correctamente', 'ok')

db.connection.commit()

elif request.args.get('rol') == 'Ingeniero':

horario = request.form['horario']

cur.execute('call getEngieProfile(%s)',[cedula])

doubleChecker = cur.fetchall()

if len(doubleChecker) != 0:

flash('Ingeniero actualizado correctamente', 'ok')

cur.execute('update ingenieros set idHorario = %s where cedula = %s', [horario,cedula])

else:

flash('Ingeniero creado correctamente', 'ok')

cur.execute('insert into ingenieros (cedula, idHorario) values (%s,%s)', [cedula,horario])

db.connection.commit()

elif request.args.get('rol') == 'Servicios':

horario = request.form['horario']

cur.execute('call getSerGenProfile(%s)',[cedula])

doubleChecker = cur.fetchall()

if len(doubleChecker) != 0:

cur.execute('update serviciosGenerales set idHorario = %s where cedula = %s', [horario,cedula])

flash('Personal de servicios generales actualizado correctamente', 'ok')

else:

cur.execute('insert into serviciosGenerales (cedula, idHorario) values (%s,%s)', [cedula,horario])

flash('Personal de servicios generales creado correctamente', 'ok')

db.connection.commit()

elif request.args.get('rol') == 'Administrativo':

horario = request.form['horario']

area = request.form['area']

cur.execute('call getAdminProfile(%s)',[cedula])

doubleChecker = cur.fetchall()

if len(doubleChecker) != 0:

cur.execute('update administrativos set idHorario = %s where cedula = %s', [horario,cedula])

flash('Administrativo actualizado correctamente', 'ok')

else:

cur.execute('insert into administrativos (cedula, idHorario,areaAsig) values (%s,%s,%s)', [cedula,horario,area])

flash('Administrativo creado correctamente', 'ok')

db.connection.commit()

elif request.args.get('rol') == 'Paciente':

eps = request.form['eps']

peso = request.form['peso']

cur.execute('call getPatientProfile(%s)',[cedula])

doubleChecker = cur.fetchall()

if len(doubleChecker) != 0:

cur.execute('update pacientes set idEPS = %s, peso = %s where cedula = %s', [eps,peso,cedula])

flash('Paciente actualizado correctamente', 'ok')

else:

cur.execute('insert into pacientes (cedula,idEPS,peso) values (%s,%s,%s)', [cedula,eps,peso])

flash('Paciente creado correctamente', 'ok')

db.connection.commit()

cur.execute('select * from historialMedico where idHistorial = %s', [cedula])

historialMedico = cur.fetchone()

if historialMedico == None:

cur.execute('insert into historialMedico (idHistorial,fechaSubida) values (%s, curdate())',[cedula])

cur.execute('update pacientes set idHistorial = %s where cedula = %s', [cedula, cedula])

db.connection.commit()

return redirect(url_for('roles.editRoles')) |

from bitstring import ConstBitStream, ReadError

from .reporter import Reporter

# How many updates we want, 100 would be every percentage. 4 would be every 25%.

UPDATES = 100

def read(config, file_name):

"""

Read binary file into an array of unsigned integers.

:param config: dict - Config values from the config file.

:param file_name: string - The file we read from, using bitstream.

"""

# Get bit length, default to 1 byte.

bit_length = int(config.get('bits', 8))

print("Reading file {} - Splitting up in chunks of {} bits.".format(file_name, bit_length))

stream = ConstBitStream(filename=file_name)

print("Starting to read...")

reporter = Reporter(int(len(stream) / bit_length), updates=UPDATES)

return _read_read(stream, bit_length, reporter)

# return _read_cut(stream, bit_length)

def _read_cut(stream, bit_length, reporter):

"""

Testing showed that this is 3 times slower than using read. Shame.

"""

result = []

for chunk in stream.cut(bit_length):

if chunk is None:

break

result.append(chunk.uint)

reporter.report(len(result))

return result

def _read_read(stream, bit_length, reporter):

"""

Currently the faster way of reading.

"""

bform = 'uint:{}'.format(bit_length)

result = []

while True:

try:

result.append(stream.read(bform))

reporter.report(len(result))

except ReadError:

break

return result

|

# coding=utf-8

from django.core.management.base import BaseCommand, CommandError

from mulan.models import Order, OrderHistory

class Command(BaseCommand):

def handle(self, *args, **options):

if OrderHistory.objects.count() == 0:

for order in Order.objects.all():

order_history = OrderHistory( original_order = order,

created = order.created,

money = order.calc_order_total())

order_history.save() |

# -*- coding: utf-8 -*-

"""

Define image properties

@author: peter

"""

import cv2

import numpy as np

class png_gray(object):

def __init__(self, image_path, isPositive ):

self.image = cv2.imread( image_path )[:,:,0]

self.integral = self.integral_image(self.image)

self.isPositive = isPositive

# Get the integrated image to speed up region summations in later steps

def integral_image(self, image : np.ndarray ):

if type(image) != np.ndarray:

raise TypeError("Input must be numpy.ndarray")

return image.cumsum(axis=0).cumsum(axis=1) |

import sys

import string

import logging

from util import mapper_logfile

logging.basicConfig(filename=mapper_logfile, format='%(message)s',

level=logging.INFO, filemode='w')

def mapper():

'''

For this exercise, compute the average value of the ENTRIESn_hourly column

for different weather types. Weather type will be defined based on the

combination of the columns fog and rain (which are boolean values).

For example, one output of our reducer would be the average hourly entries

across all hours when it was raining but not foggy.

Each line of input will be a row from our final Subway-MTA dataset in csv format.

You can check out the input csv file and its structure below:

https://s3.amazonaws.com/content.udacity-data.com/courses/ud359/turnstile_data_master_with_weather.csv

Note that this is a comma-separated file.

This mapper should PRINT (not return) the weather type as the key (use the

given helper function to format the weather type correctly) and the number in

the ENTRIESn_hourly column as the value. They should be separated by a tab.

For example: 'fog-norain\t12345'

Since you are printing the output of your program, printing a debug

statement will interfere with the operation of the grader. Instead,

use the logging module, which we've configured to log to a file printed

when you click "Test Run". For example:

logging.info("My debugging message")

Note that, unlike print, logging.info will take only a single argument.

So logging.info("my message") will work, but logging.info("my","message") will not.

'''

# Takes in variables indicating whether it is foggy and/or rainy and

# returns a formatted key that you should output. The variables passed in

# can be booleans, ints (0 for false and 1 for true) or floats (0.0 for

# false and 1.0 for true), but the strings '0.0' and '1.0' will not work,

# so make sure you convert these values to an appropriate type before

# calling the function.

def format_key(fog, rain):

return '{}fog-{}rain'.format(

'' if fog else 'no',

'' if rain else 'no'

)

first = True

for line in sys.stdin:

data = line.strip().split(",")

if first == True:

first = False

continue

if len(data) != 22:

continue

print "{0}\t{1}".format(format_key(float(data[14]), float(data[15])), data[6])

mapper()

from util import reducer_logfile

logging.basicConfig(filename=reducer_logfile, format='%(message)s',

level=logging.INFO, filemode='w')

def reducer():

'''

Given the output of the mapper for this assignment, the reducer should

print one row per weather type, along with the average value of

ENTRIESn_hourly for that weather type, separated by a tab. You can assume

that the input to the reducer will be sorted by weather type, such that all

entries corresponding to a given weather type will be grouped together.

In order to compute the average value of ENTRIESn_hourly, you'll need to

keep track of both the total riders per weather type and the number of

hours with that weather type. That's why we've initialized the variable

riders and num_hours below. Feel free to use a different data structure in

your solution, though.

An example output row might look like this:

'fog-norain\t1105.32467557'

Since you are printing the output of your program, printing a debug

statement will interfere with the operation of the grader. Instead,

use the logging module, which we've configured to log to a file printed

when you click "Test Run". For example:

logging.info("My debugging message")

Note that, unlike print, logging.info will take only a single argument.

So logging.info("my message") will work, but logging.info("my","message") will not.

'''

riders = 0 # The number of total riders for this key

num_hours = 0 # The number of hours with this key

old_key = None

dist_counts = {}

for line in sys.stdin:

data = line.strip().split("\t")

if len(data) != 2:

continue

k,v = data

if k in dist_counts.keys():

dist_counts[k][0] += float(v)

dist_counts[k][1] += 1.0

else:

dist_counts[k] = [float(v),1.0]

for key in dist_counts.keys():

print "{0}\t{1}".format( key, dist_counts[key][0]/dist_counts[key][1])

reducer()

import sys

import string

import logging

from util import mapper_logfile

logging.basicConfig(filename=mapper_logfile, format='%(message)s',

level=logging.INFO, filemode='w')

def mapper():

"""

In this exercise, for each turnstile unit, you will determine the date and time

(in the span of this data set) at which the most people entered through the unit.

The input to the mapper will be the final Subway-MTA dataset, the same as

in the previous exercise. You can check out the csv and its structure below:

https://s3.amazonaws.com/content.udacity-data.com/courses/ud359/turnstile_data_master_with_weather.csv

For each line, the mapper should return the UNIT, ENTRIESn_hourly, DATEn, and

TIMEn columns, separated by tabs. For example:

'R001\t100000.0\t2011-05-01\t01:00:00'

Since you are printing the output of your program, printing a debug

statement will interfere with the operation of the grader. Instead,

use the logging module, which we've configured to log to a file printed

when you click "Test Run". For example:

logging.info("My debugging message")

Note that, unlike print, logging.info will take only a single argument.

So logging.info("my message") will work, but logging.info("my","message") will not.

"""

first = True

for line in sys.stdin:

data = line.strip().split(",")

if first == True:

first = False

continue

if len(data) != 22:

continue

print "{0}\t{1}\t{2}\t{3}".format(data[1], data[6], data[2], data[3])

mapper()

import sys

import logging

from util import reducer_logfile

logging.basicConfig(filename=reducer_logfile, format='%(message)s',

level=logging.INFO, filemode='w')

def reducer():

'''

Write a reducer that will compute the busiest date and time (that is, the

date and time with the most entries) for each turnstile unit. Ties should

be broken in favor of datetimes that are later on in the month of May. You

may assume that the contents of the reducer will be sorted so that all entries

corresponding to a given UNIT will be grouped together.

The reducer should print its output with the UNIT name, the datetime (which

is the DATEn followed by the TIMEn column, separated by a single space), and

the number of entries at this datetime, separated by tabs.

For example, the output of the reducer should look like this:

R001 2011-05-11 17:00:00 31213.0

R002 2011-05-12 21:00:00 4295.0

R003 2011-05-05 12:00:00 995.0

R004 2011-05-12 12:00:00 2318.0

R005 2011-05-10 12:00:00 2705.0

R006 2011-05-25 12:00:00 2784.0

R007 2011-05-10 12:00:00 1763.0

R008 2011-05-12 12:00:00 1724.0

R009 2011-05-05 12:00:00 1230.0

R010 2011-05-09 18:00:00 30916.0

...

...

Since you are printing the output of your program, printing a debug

statement will interfere with the operation of the grader. Instead,

use the logging module, which we've configured to log to a file printed

when you click "Test Run". For example:

logging.info("My debugging message")

Note that, unlike print, logging.info will take only a single argument.

So logging.info("my message") will work, but logging.info("my","message") will not.

'''

max_entries = 0

old_key = None

datetime = ''

dist_counts = {}

for line in sys.stdin:

data = line.strip().split("\t")

if len(data) != 4:

continue

k = data[0]

if k in dist_counts.keys():

if float(data[1]) >= dist_counts[k][0]:

dist_counts[k][0] = float(data[1])

dist_counts[k][1] = data[2]

dist_counts[k][2] = data[3]

else:

dist_counts[k] = [float(data[1]), data[2], data[3]]

for key in dist_counts.keys():

logging.info("{0}\t{1} {2}\t{3}".format( key, dist_counts[key][1], dist_counts[key][2], dist_counts[key][0]))

print "{0}\t{1} {2}\t{3}".format( key, dist_counts[key][1], dist_counts[key][2], dist_counts[key][0])

reducer()

|

from django.db import models

from django.contrib.auth.models import AbstractUser

# Create your models here.

############################PARA LA TABLA USUARIO EN LA BASE DE DATOS###############################

class User(AbstractUser): #A partir de ahora la tabla de usuarios para la autenticacion es esta (User) y el dia de mañana puedo añadir mas campos. #Luego en los settings de le da permiso. AUTH_USER_MODEL = 'users.User' <-- ('nombreDeLaAPP.nombreDeLaClase')

nacionalidad = models.CharField(max_length = 30, null = False, blank=True)

bio = models.CharField(max_length = 250, null = False, blank=True)

face = models.CharField(max_length = 100, null = False, blank=True)

wapp = models.CharField(max_length = 100, null = False, blank=True)

web = models.CharField(max_length = 100, null = False, blank=True)

avatar = models.ImageField('Foto de Perfil', upload_to='avatar', blank=True, null=True)

fechaModif = models.DateTimeField(auto_now=True)

fecha_creacion = models.DateField('Fecha de creación', auto_now_add = True) #auto_now = False es para que no modifique su fecha si se llega a actualizar

def __str__(self):

return self.username

|

# encoding=utf8

"""

Author: 'jdwang'

Date: 'create date: 2017-01-13'; 'last updated date: 2017-01-13'

Email: '383287471@qq.com'

Describe:

"""

from __future__ import print_function

from regex_extracting.extracting.common.regex_base import RegexBase

__version__ = '1.3'

class Brand(RegexBase):

name = '品牌'

def __init__(self, sentence):

# 要处理的输入句子

self.sentence = sentence

# region 1 初始化正则表达式

# 描述正则表达式

self.statement_regexs = [

'品牌|牌'

]

# 值正则表达式

self.value_regexs = [

'诺基亚|nokia|Nokia|NOKIA|三星|SAMSUNG|samsung|Samsung|苹果|HTC|htc|华为|联想|lenovo|Lenovo|LENOVO|\

步步高|酷派|金立|魅族|黑莓|天语|摩托罗拉|OPPO|oppo|经纬|飞利浦|小米|中兴|云台|LG|lg|TCL|tcl|华硕|海信|\

长虹|海尔|康佳|夏新|纽曼|亿通|乐派|七喜|阿尔法|富士通|Yahoo|谷歌|卡西欧|优派|技嘉|惠普|多普达|东芝|爱国者|\

明基|万利达|戴尔|中天|夏普|索尼|努比亚|锤子|LG|lg|小辣椒',

'随意|随便|都可以|其他|别的',

'好一点',

'好',

]

# endregion

super(Brand, self).__int__()

self.regex_process()

if __name__ == '__main__':

price = Brand(u'三星、诺基亚')

for info_meta_data in price.info_meta_data_list:

print('-' * 80)

print(unicode(info_meta_data))

|

import os

import sys

from src.tcp import read_request, handle_request, write_response

from src.tcp.tcp_server import create_server_socket, accept_connection

def serve_client(client_socket, cid):

child_pid = os.fork()

if child_pid:

client_socket.close()

return child_pid

request = read_request(client_socket)

if request is None:

print(f"Client #{cid} disconnected.")

return

response = handle_request(request)

write_response(client_socket, response, cid)

os._exit(0)

def reap_children(active_children):

for child_pid in active_children.copy():

child_pid, _ = os.waitpid(child_pid, os.WNOHANG)

if child_pid:

active_children.discard(child_pid)

def run_server(port=53210):

server_socket = create_server_socket(port)

cid = 0

active_children = set()

while True:

client_socket = accept_connection(server_socket, cid)

child_id = serve_client(client_socket, cid)

active_children.add(child_id)

reap_children(active_children)

cid += 1

if __name__ == '__main__':

run_server(port=int(sys.argv[1]))

|

#!/usr/bin/python

import fractions

den = 1

nom = 1

for i in range(1, 10):

for j in range(1, i):

for k in range(1, j):

if (k * 10 + i) * j == k * (i * 10 + j):

den *= j

nom *= k

print(den / fractions.gcd(nom, den))

|

from MSLLib.MSL import run

run("""

COM This is a simple calculator

COM Take user input for the math

GET math Type the math equasion you want to solve:

COM Calculate the equasion and store it in the "$&maths" variable

CAL outmath $&math

COM Print the equasion and the answer

PRL $&math = $&outmath!

""") |

../../Integrate-Exp-Data.py |

from room import Room

from secrets import Secrets

from decision import Decision

from challenge import Challenge

from randomiser import Random

import yaml, os

class Scenario(object):

def __init__(self, name, image=None, text = None):

self.rooms = {}

self.name = name

self.image = image

self.text = text

self.starts = []

import inflection

self.title = inflection.titleize(name)

def add_room(self, room):

self.rooms[room.name] = room

def room(self, room):

return self.rooms[room]

def add_start(self, name):

self.starts.append(self.rooms[name])

@staticmethod

def from_data():

data = yaml.load(open(os.path.join(

os.path.dirname(os.path.dirname(__file__)),

'scenario','data.yaml')))

scenario = Scenario(data['name'],

data.get('image'),

data.get('text'))

for name, roomd in data['rooms'].items():

room= Room(name,

roomd.get('text'),

roomd.get('title'),

roomd.get('image', scenario.image)

)

scenario.add_room(room)

for name in data['start']:

scenario.add_start(name)

for name, roomd in data['rooms'].items():

room = scenario.room(name)

if 'exits' in roomd:

obstacle = Decision(

roomd['exits']

)

if 'cards' in roomd:

obstacle = Challenge(

roomd['success'],

roomd['fail'],

roomd['cards'],

roomd.get('timer',5),

roomd.get('boni',[])

)

if 'requirements' in roomd:

obstacle = Secrets(

roomd['success'],

roomd['fail'],

roomd['requirements'],

roomd.get('special',{}),

roomd.get('failtext',""),

roomd.get('failcontinue',"")

)

if 'random' in roomd:

obstacle = Random(

roomd['prompt'],

roomd['random']

)

room.add_obstacle(obstacle)

return scenario

scenario = Scenario.from_data() |

from string import join

from datetime import datetime

def get_between(string, sep1, sep2):

tmp = string.split(sep1)[1]

return tmp.split(sep2)[0]

ids = file('ids.txt', 'r')

names = file('titles.txt', 'r')

times = file('times.txt', 'r')

outf = file('edges.csv', 'w')

for iline in ids:

nameline = names.readline()

timeline = times.readline()

lines = (iline, nameline, timeline)

_id, _name, timestr = [get_between(l, '>', '<') for l in lines]

dttime = (datetime.strptime(timestr, '%Y-%m-%dT%H:%M:%SZ'))

_time = dttime.strftime('%s')

combline = join([_id, _name, _time, '\n'], ',')

outf.write(combline)

[f.close() for f in (ids, names, outf)]

|

from folium import Marker

from sunnyday import Weather

from geopy.distance import geodesic

from geopy.geocoders import Nominatim

class Address:

def __init__(self, area, zone, city, country='India'):

self.area = area

self.zone = zone

self.city = city

self.country = country

def coord(self):

nom = Nominatim(user_agent="Mozilla/5.0")

address = f"{self.area+', '+self.zone+', '+self.city+', '+self.country}"

loc = nom.geocode(address)

return loc

class GeoLoc(Marker):

def __init__(self, lat, long):

# Inheriting the parent(Marker) class

super().__init__(location=[lat, long])

self.lat = lat

self.long = long

def weather(self):

# Calculates the weather

weather = Weather(apikey="586f3825fb98b38d0c5719a3f11dc995", lat=self.lat, lon=self.long)

return weather.next_12h_simplified()

# Calculates the distance

def distance(loc1, loc2):

return geodesic(loc1, loc2)

|

# coding=utf-8

import os

import sys

import unittest

from time import sleep

from selenium import webdriver

from selenium.common.exceptions import NoAlertPresentException, NoSuchElementException

sys.path.append(os.environ.get('PY_DEV_HOME'))

from webTest_pro.common.initData import init

from webTest_pro.common.model.baseActionAdd import user_login, \

add_cfg_jpks

from webTest_pro.common.model.baseActionDel import del_cfg_jpks

from webTest_pro.common.model.baseActionSearch import search_cfg_jpks

from webTest_pro.common.model.baseActionModify import update_ExcellentClassroom

from webTest_pro.common.logger import logger, T_INFO

reload(sys)

sys.setdefaultencoding("utf-8")

loginInfo = init.loginInfo

hdk_lesson_cfgs = [{'name': u'互动课模板'}, {'name': u'互动_课模板480p'}]

jp_lesson_cfgs = [{'name': u'精品课'}, {'name': u'精品_课480p'}]

conference_cfgs = [{'name': u'会议'}, {'name': u'会_议480p'}]

speaker_lesson_cfgs = [{'name': u'主讲下课'}, {'name': u'主讲_下课_1'}]

listener_lesson_cfgs = [{'name': u'听讲下课'}, {'name': u'听讲_下课_1'}]

excellentClassroomData = [{'name': '720PP', 'searchName': u'精品_课480p'},

{'name': u'720精品_课480p', 'searchName': '720PP'}]

class jpkCfgsMgr(unittest.TestCase):

''''精品课模板管理'''

def setUp(self):

if init.execEnv['execType'] == 'local':

T_INFO(logger,"\nlocal exec testcase")

self.driver = webdriver.Chrome()

self.driver.implicitly_wait(8)

self.verificationErrors = []

self.accept_next_alert = True

T_INFO(logger,"start tenantmanger...")

else:

T_INFO(logger,"\nremote exec testcase")

browser = webdriver.DesiredCapabilities.CHROME

self.driver = webdriver.Remote(command_executor=init.execEnv['remoteUrl'], desired_capabilities=browser)

self.driver.implicitly_wait(8)

self.verificationErrors = []

self.accept_next_alert = True

T_INFO(logger,"start tenantmanger...")

def tearDown(self):

self.driver.quit()

self.assertEqual([], self.verificationErrors)

T_INFO(logger,"tenantmanger end!")

def test_add_cfg_jpks(self):

'''添加精品课模板'''

print "exec:test_add_cfg_jpks..."

driver = self.driver

user_login(driver, **loginInfo)

for jp_lesson_cfg in jp_lesson_cfgs:

add_cfg_jpks(driver, **jp_lesson_cfg)

self.assertEqual(u"添加成功!", driver.find_element_by_css_selector(".layui-layer-content").text)

sleep(0.5)

print "exec:test_add_cfg_jpks success."

def test_bsearch_cfg_jpks(self):

'''查询精品课模板信息'''

print "exec:test_search_cfg_jpks"

driver = self.driver

user_login(driver, **loginInfo)

for jp_lesson_cfg in jp_lesson_cfgs:

search_cfg_jpks(driver, **jp_lesson_cfg)

self.assertEqual(jp_lesson_cfg['name'],

driver.find_element_by_xpath("//table[@id='excellentclassroomtable']/tbody/tr/td[3]").text)

print "exec: test_search_cfg_jpks success."

sleep(0.5)

def test_bupdate_cfg_jpks(self):

'''修改精品课模板信息'''

print "exec:test_bupdate_cfg_jpks"

driver = self.driver

user_login(driver, **loginInfo)

for jp_lesson_cfg in excellentClassroomData:

update_ExcellentClassroom(driver, **jp_lesson_cfg)

print "exec: test_bupdate_cfg_jpks success."

sleep(0.5)

def test_del_cfg_jpks(self):

'''删除精品课模板_确定'''

print "exec:test_del_cfg_jpks..."

driver = self.driver

user_login(driver, **loginInfo)

for jp_lesson_cfg in jp_lesson_cfgs:

del_cfg_jpks(driver, **jp_lesson_cfg)

sleep(1.5)

self.assertEqual(u"删除成功!", driver.find_element_by_css_selector(".layui-layer-content").text)

sleep(0.5)

print "exec:test_del_cfg_jpks success."

def is_element_present(self, how, what):

try:

self.driver.find_element(by=how, value=what)

except NoSuchElementException as e:

return False

return True

def is_alert_present(self):

try:

self.driver.switch_to_alert()

except NoAlertPresentException as e:

return False

return True

def close_alert_and_get_its_text(self):

try:

alert = self.driver.switch_to_alert()

alert_text = alert.text

if self.accept_next_alert:

alert.accept()

else:

alert.dismiss()

return alert_text

finally:

self.accept_next_alert = True

if __name__ == '__main__':

unittest.main()

|

# coding: utf-8

# # Tools for visualizing data

#

# This notebook is a "tour" of just a few of the data visualization capabilities available to you in Python. It focuses on two packages: [Bokeh](https://blog.modeanalytics.com/python-data-visualization-libraries/) for creating _interactive_ plots and _[Seaborn]_ for creating "static" (or non-interactive) plots. The former is really where the ability to develop _programmatic_ visualizations, that is, code that generates graphics, really shines. But the latter is important in printed materials and reports. So, both techniques should be a core part of your toolbox.

#

# With that, let's get started!

#

# > **Note 1.** Since visualizations are not amenable to autograding, this notebook is more of a demo of what you can do. It doesn't require you to write any code on your own. However, we strongly encourage you to spend some time experimenting with the basic methods here and generate some variations on your own. Once you start, you'll find it's more than a little fun!

# >

# > **Note 2.** Though designed for R programs, Hadley Wickham has an [excellent description of many of the principles in this notebook](http://r4ds.had.co.nz/data-visualisation.html).

# ## Part 0: Downloading some data to visualize

#

# For the demos in this notebook, we'll need the Iris dataset. The following code cell downloads it for you.

# In[1]:

import requests

import os

import hashlib

import io

def download(file, url_suffix=None, checksum=None):

if url_suffix is None:

url_suffix = file

if not os.path.exists(file):

url = 'https://cse6040.gatech.edu/datasets/{}'.format(url_suffix)

print("Downloading: {} ...".format(url))

r = requests.get(url)

with open(file, 'w', encoding=r.encoding) as f:

f.write(r.text)

if checksum is not None:

with io.open(file, 'r', encoding='utf-8', errors='replace') as f:

body = f.read()

body_checksum = hashlib.md5(body.encode('utf-8')).hexdigest()

assert body_checksum == checksum, "Downloaded file '{}' has incorrect checksum: '{}' instead of '{}'".format(file, body_checksum, checksum)

print("'{}' is ready!".format(file))

datasets = {'iris.csv': ('tidy', 'd1175c032e1042bec7f974c91e4a65ae'),

'tips.csv': ('seaborn-data', 'ee24adf668f8946d4b00d3e28e470c82'),

'anscombe.csv': ('seaborn-data', '2c824795f5d51593ca7d660986aefb87'),

'titanic.csv': ('seaborn-data', '56f29cc0b807cb970a914ed075227f94')

}

for filename, (category, checksum) in datasets.items():

download(filename, url_suffix='{}/{}'.format(category, filename), checksum=checksum)

print("\n(All data appears to be ready.)")

# # Part 1: Bokeh and the Grammar of Graphics ("lite")

#

# Let's start with some methods for creating an interactive visualization in Python and Jupyter, based on the [Bokeh](https://bokeh.pydata.org/en/latest/) package. It generates JavaScript-based visualizations, which you can then run in a web browser, without you having to know or write any JS yourself. The web-friendly aspect of Bokeh makes it an especially good package for creating interactive visualizations in a Jupyter notebook, since it's also browser-based.

#

# The design and use of Bokeh is based on Leland Wilkinson's Grammar of Graphics (GoG).

#

# > If you've encountered GoG ideas before, it was probably when using the best known implementation of GoG, namely, Hadley Wickham's R package, [ggplot2](http://ggplot2.org/).

# ## Setup

#

# Here are the modules we'll need for this notebook:

# In[2]:

from IPython.display import display, Markdown

import pandas as pd

import bokeh

# Bokeh is designed to output HTML, which you can then embed in any website. To embed Bokeh output into a Jupyter notebook, we need to do the following:

# In[3]:

from bokeh.io import output_notebook

from bokeh.io import show

output_notebook ()

# ## Philosophy: Grammar of Graphics

#

# [The Grammar of Graphics](http://www.springer.com.prx.library.gatech.edu/us/book/9780387245447) is an idea of Leland Wilkinson. Its basic idea is that the way most people think about visualizing data is ad hoc and unsystematic, whereas there exists in fact a "formal language" for describing visual displays.

#

# The reason why this idea is important and powerful in the context of our course is that it makes visualization more systematic, thereby making it easier to create those visualizations through code.

#

# The high-level concept is simple:

# 1. Start with a (tidy) data set.

# 2. Transform it into a new (tidy) data set.

# 3. Map variables to geometric objects (e.g., bars, points, lines) or other aesthetic "flourishes" (e.g., color).

# 4. Rescale or transform the visual coordinate system.

# 5. Render and enjoy!

#

#

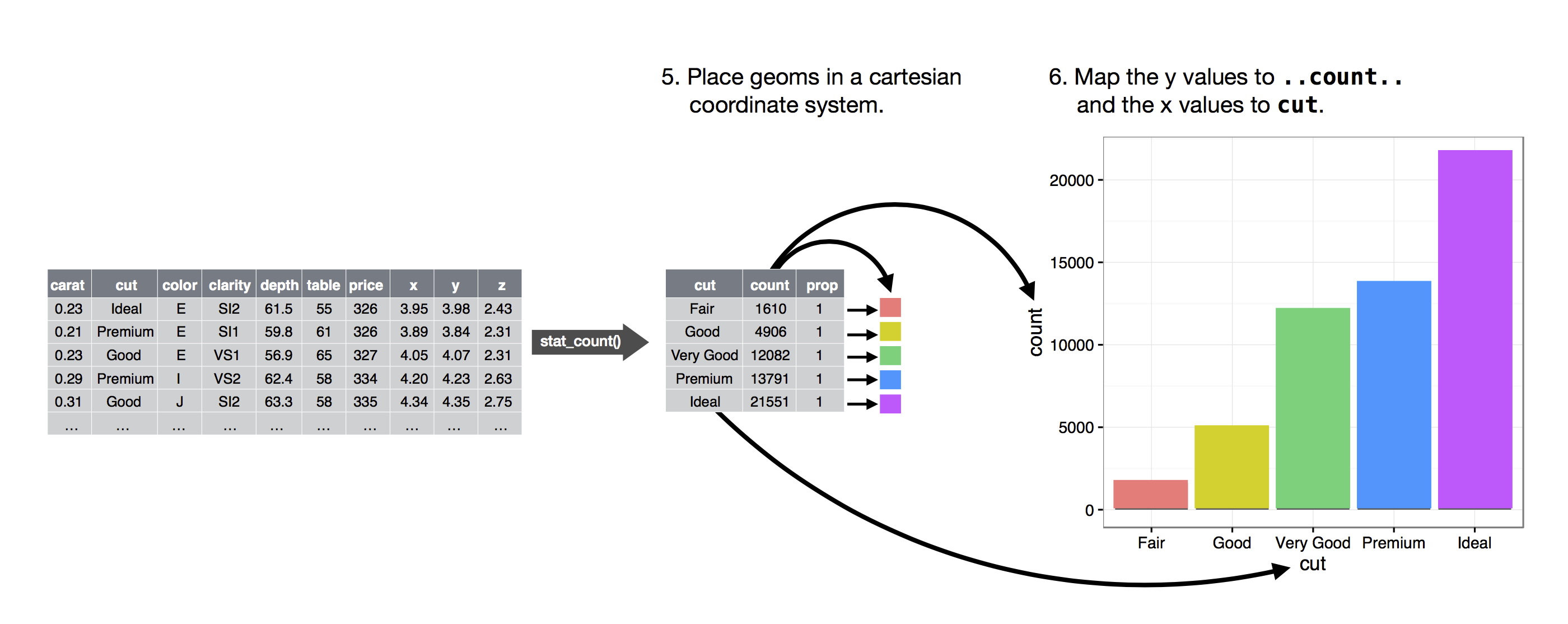

# > This image is "liberated" from: http://r4ds.had.co.nz/data-visualisation.html

# ## HoloViews

#

# Before seeing Bokeh directly, let's start with an easier way to take advantage of Bokeh, which is through a higher-level interface known as [HoloViews](http://holoviews.org/). HoloViews provides a simplified interface suitable for "canned" charts.

#

# To see it in action, let's load the Iris data set and study relationships among its variables, such as petal length vs. petal width.

#

# The cells below demonstrate histograms, simple scatter plots, and box plots. However, there is a much larger gallery of options: http://holoviews.org/reference/index.html

# In[4]:

flora = pd.read_csv ('iris.csv')

display (flora.head ())

# In[5]:

from bokeh.io import show

import holoviews as hv

import numpy as np

hv.extension('bokeh')

# ### 1. Histogram

#

# * The Histogram(f, e) can takes two arguments, frequencies and edges (bin boundaries).

# * These can easily be created using numpy's histogram function as illustrated below.

# * The plot is interactive and comes with a bunch of tools. You can customize these tools as well; for your many options, see http://bokeh.pydata.org/en/latest/docs/user_guide/tools.html.

#

# > You may see some warnings appear in a pink-shaded box. You can ignore these. They are caused by some slightly older version of the Bokeh library that is running on Vocareum.

# In[6]:

frequencies, edges = np.histogram(flora['petal width'], bins = 5)

hv.Histogram(frequencies, edges, label = 'Histogram')

# A user can interact with the chart above using the tools shown on the right-hand side. Indeed, you can select or customize these tools! You'll see an example below.

# ### 2. ScatterPlot

# In[7]:

hv.Scatter(flora[['petal width','sepal length']],label = 'Scatter plot')

# ### 3. BoxPlot

# In[8]:

hv.BoxWhisker(flora['sepal length'], label = "Box whiskers plot")

# ## Mid-level charts: the Plotting interface

#

# Beyond the canned methods above, Bokeh provides a "mid-level" interface that more directly exposes the grammar of graphics methodology for constructing visual displays.

#

# The basic procedure is

# * Create a blank canvas by calling `bokeh.plotting.figure`

# * Add glyphs, which are geometric shapes.

#

# > For a full list of glyphs, refer to the methods of `bokeh.plotting.figure`: http://bokeh.pydata.org/en/latest/docs/reference/plotting.html

# In[9]:

from bokeh.plotting import figure

# Create a canvas with a specific set of tools for the user:

TOOLS = 'pan,box_zoom,wheel_zoom,lasso_select,save,reset,help'

p = figure(width=500, height=500, tools=TOOLS)

print(p)

# In[10]:

# Add one or more glyphs

p.triangle(x=flora['petal width'], y=flora['petal length'])

# In[11]:

show(p)

# **Using data from Pandas.** Here is another way to do the same thing, but using a Pandas data frame as input.

# In[12]:

from bokeh.models import ColumnDataSource

data=ColumnDataSource(flora)

p=figure()

p.triangle(source=data, x='petal width', y='petal length')

show(p)

# **Color maps.** Let's make a map that assigns each unique species its own color. Incidentally, there are many choices of colors! http://bokeh.pydata.org/en/latest/docs/reference/palettes.html

# In[13]:

# Determine the unique species

unique_species = flora['species'].unique()

print(unique_species)

# In[14]:

# Map each species with a unique color

from bokeh.palettes import brewer

color_map = dict(zip(unique_species, brewer['Dark2'][len(unique_species)]))

print(color_map)

# In[15]:

# Create data sources for each species

data_sources = {}

for s in unique_species:

data_sources[s] = ColumnDataSource(flora[flora['species']==s])

# Now we can more programmatically generate the same plot as above, but use a unique color for each species.

# In[16]:

p = figure()

for s in unique_species:

p.triangle(source=data_sources[s], x='petal width', y='petal length', color=color_map[s])

show(p)

# That's just a quick tour of what you can do with Bokeh. We will incorporate it into some of our future labs. At this point, we'd encourage you to experiment with the code cells above and try generating your own variations!

# # Part 2: Static visualizations using Seaborn

#

# Parts of this lab are taken from publicly available Seaborn tutorials.

# http://seaborn.pydata.org/tutorial/distributions.html

#

# They were adapted for use in this notebook by [Shang-Tse Chen at Georgia Tech](https://www.cc.gatech.edu/~schen351).

# In[17]:

import seaborn as sns

# The following Jupyter "magic" command forces plots to appear inline

# within the notebook.

get_ipython().magic('matplotlib inline')

# When dealing with a set of data, often the first thing we want to do is get a sense for how the variables are distributed. Here, we will look at some of the tools in seborn for examining univariate and bivariate distributions.

#

# ### Plotting univariate distributions

# distplot() function will draw a histogram and fit a kernel density estimate

# In[18]:

import numpy as np

x = np.random.normal(size=100)

sns.distplot(x)

# ## Plotting bivariate distributions

#

# The easiest way to visualize a bivariate distribution in seaborn is to use the jointplot() function, which creates a multi-panel figure that shows both the bivariate (or joint) relationship between two variables along with the univariate (or marginal) distribution of each on separate axes.

# In[19]:

mean, cov = [0, 1], [(1, .5), (.5, 1)]

data = np.random.multivariate_normal(mean, cov, 200)

df = pd.DataFrame(data, columns=["x", "y"])

# **Basic scatter plots.** The most familiar way to visualize a bivariate distribution is a scatterplot, where each observation is shown with point at the x and y values. You can draw a scatterplot with the matplotlib plt.scatter function, and it is also the default kind of plot shown by the jointplot() function:

# In[20]:

sns.jointplot(x="x", y="y", data=df)

# **Hexbin plots.** The bivariate analogue of a histogram is known as a “hexbin” plot, because it shows the counts of observations that fall within hexagonal bins. This plot works best with relatively large datasets. It’s availible through the matplotlib plt.hexbin function and as a style in jointplot()

# In[21]:

sns.jointplot(x="x", y="y", data=df, kind="hex")

# **Kernel density estimation.** It is also posible to use the kernel density estimation procedure described above to visualize a bivariate distribution. In seaborn, this kind of plot is shown with a contour plot and is available as a style in jointplot()

# In[22]:

sns.jointplot(x="x", y="y", data=df, kind="kde")

# ## Visualizing pairwise relationships in a dataset

# To plot multiple pairwise bivariate distributions in a dataset, you can use the pairplot() function. This creates a matrix of axes and shows the relationship for each pair of columns in a DataFrame. by default, it also draws the univariate distribution of each variable on the diagonal Axes:

# In[23]:

sns.pairplot(flora)

# In[24]:

# We can add colors to different species

sns.pairplot(flora, hue="species")

# ### Visualizing linear relationships

# In[25]:

tips = pd.read_csv("tips.csv")

tips.head()

# We can use the function `regplot` to show the linear relationship between total_bill and tip.

# It also shows the 95% confidence interval.

# In[26]:

sns.regplot(x="total_bill", y="tip", data=tips)

# ### Visualizing higher order relationships

# In[27]:

anscombe = pd.read_csv("anscombe.csv")

sns.regplot(x="x", y="y", data=anscombe[anscombe["dataset"] == "II"])

# The plot clearly shows that this is not a good model.

# Let's try to fit a polynomial regression model with degree 2.

# In[28]:

sns.regplot(x="x", y="y", data=anscombe[anscombe["dataset"] == "II"], order=2)

# **Strip plots.** This is similar to scatter plot but used when one variable is categorical.

# In[29]:

sns.stripplot(x="day", y="total_bill", data=tips)

# **Box plots.**

# In[30]:

sns.boxplot(x="day", y="total_bill", hue="time", data=tips)

# **Bar plots.**

# In[31]:

titanic = pd.read_csv("titanic.csv")

sns.barplot(x="sex", y="survived", hue="class", data=titanic)

# **Fin!** That ends this tour of basic plotting functionality available to you in Python. It only scratches the surface of what is possible. We'll explore more advanced features in future labs, but in the meantime, we encourage you to play with the code in this notebook and try to generate your own visualizations of datasets you care about!

#

# Although this notebook did not require you to write any code, go ahead and "submit" it for grading. You'll effectively get "free points" for doing so: the code cell below gives it to you.

# In[33]:

# Test cell: `freebie_test`

assert True

|

import loader

import pyautogui

import time

import json

import os

import distutils.dir_util

pyautogui.FAILSAFE = True

def main():

generateFiles()

generateFileProperties()

createSave()

def generateFiles():

try:

distutils.dir_util.mkpath(pathing('SaveGames'))

return False

except FileExistsError:

pass

def createSave():

try:

saveFile = False

i = 0

while False:

try:

with open(pathing('SaveGames') + f'/save{i}.json', 'w+') as f:

x = {"Pass": 0, "Cookies": 0, "Buildings": 0}

json.dump(x, f)

except FileExistsError:

i += 1

except FileExistsError:

x = pyautogui.confirm('A save file exists. Delete and reset?')

if x == 'OK':

pass

else:

print('done')

def generateFileProperties():

loader.writeLine('cookieMaker2.py properties:', 'endWOinput', 0.1)

loader.writeLine(f'File ----------> : {__file__}', 'endWOinput')

loader.writeLine(f'Access time ---> : {time.ctime(os.path.getatime(__file__))}', 'endWOinput')

loader.writeLine(f'Modified time -> : {time.ctime(os.path.getmtime(__file__))}', 'endWOinput')

loader.writeLine(f'Change time ---> : {time.ctime(os.path.getctime(__file__))}', 'endWOinput')

loader.writeLine('File size -----> : ', 'endWOinput')

loader.writeLine(f'In bytes ------> : {os.path.getsize(__file__)}', 'endWOinput')

loader.writeLine(f'In kilobytes --> : {os.path.getsize(__file__) / 1000}', 'endWOinput')

loader.writeLine(f'In megabytes --> : {os.path.getsize(__file__) / 1000000}', 'endWOinput')

loader.writeLine(f'In gigabytes --> : {os.path.getsize(__file__) / 1000000000}', 'endWOinput')

loader.writeLine(f'In terabytes --> : {os.path.getsize(__file__) / 1000000000000}', 'endWOinput')

loader.writeLine(f'In petabytes --> : {os.path.getsize(__file__) / 100000000000000}', 'endWOinput')

def pathing(option):

if option == 'dirPath':

dir_path = os.path.dirname(os.path.realpath(__file__))

return dir_path

elif option == 'SaveGames':

dir_path = os.path.dirname(os.path.realpath(__file__))

savePath = dir_path + '/SaveGames'

return savePath

if __name__ == '__main__':

main()

|

#!/usr/bin/python3

'''

DBStorage schema using SQLAlchemy and MySQL

'''

import pymongo

from pymongo import MongoClient

from models.base_model import BaseModel

import models

import os

class DBStorage:

'''

Main database storage class

'''

__collection = None

__client = None

def __init__(self):

'''

Instantiation of a database storage class

'''

self.database = os.environ.get('MONGODB_URL')

self.__client = MongoClient(self.database)

def all(self, cls=None):

'''

Queries database for specified classes

Parameters:

cls (object): the class to query

Return:

a dictionary of objects of the corresponding cls

'''

to_query = []

new_dict = {}

results = []

if cls is not None:

# Set the database collection to query

self.new(cls)

# Queries the database collection for __class__ equivalency.

# If there are matches, it loops and appends to results array.

for dic in self.__collection.find({"__class__": cls.__name__}):

results.append(models.classes[cls.__name__](**dic))

for row in results:

key = row.__class__.__name__ + '.' + row.id

new_dict[key] = row

else:

for key, value in models.classes.items():

try:

# Sets the database collection to query

self.new(value)

# If the query returns something, the class is

# appended to an array

if self.__collection.find_one({"__class__": key}):

to_query.append(models.classes[key])

except BaseException:

continue

for classes in to_query:

# Set the database collection to query

self.new(classes)

# For every object with the classname associated with the

# collection, append to the results array

for dic in self.__collection.find(

{"__class__": classes.__name__}):

results.append(models.classes[classes.__name__](**dic))

for row in results:

key = row.__class__.__name__ + '.' + row.id

new_dict[key] = row

return new_dict

def new(self, obj):

'''

Sets the MongoDB collection

Parameters:

obj (object): the object to refer to for database

table/collection

'''

self.__collection = eval("self._DBStorage__client.AdventureUs.{}"

.format(obj.collection), {"__builtins__": {}},

{'self': self})

def save(self, obj):

'''

Saves an object to MongoDB or updates it if it exists

'''

self.new(obj)

# Checks if the MongoDB _id is present:

# if not, the object is not in the database

if hasattr(obj, "_id"):

self.__collection.update_one({"id": obj.id},

{"$set": obj.to_dict_mongoid()})

else:

self.__collection.insert_one(obj.to_dict())

def delete(self, obj=None):

'''

Deletes a specified object from the database

Parameters:

obj (object): the object to delete

'''

self.new(obj)

self.__collection.delete_one({"id": obj.id})

def reload(self):

'''

Restarts the database engine session

'''

def get(self, cls, id):

'''

Gets a single instance of a particular object based on id and class

Parameters:

cls (string): the class of the object to get

id (string): the id of the object to get

'''

if cls not in models.classes.keys():

return None

self.new(models.classes[cls])

obj = self.__collection.find_one({"id": id})

if obj is None:

return None

return models.classes[cls](**obj)

def get_user(self, username):

'''

Gets a single instance of a user based on username

Parameters:

username (string): the username to pull from the database

'''

self.new(models.User)

obj = self.__collection.find_one({"username": username})

if obj is None:

return None

return models.User(**obj)

def count(self, cls=None):

'''

Counts the number of a specific class in storage or all objects

if cls variable is None

Parameters:

cls (string): the class of the objects to count

'''

count_dict = {}

if cls is None:

count_dict = self.all()

else:

if cls in models.classes.keys():

count_dict = self.all(models.classes[cls])

return len(count_dict)

|

import flask

from flask import render_template

from helpers import json_method

from standalone import get_queue_and_start

app = flask.Flask(__name__)

logger = app.logger

queue = None

@app.route('/')

def index():

global queue

if queue is None:

queue = get_queue_and_start()

return render_template('home.html')

@app.route('/move')

@json_method

def move():

messages = []

while queue.qsize() > 0:

messages.append(queue.get())

return messages

if __name__ == '__main__':

app.run()

|

n=int(input())

f=1

for x in range (n,1,-1):

f*=x

print(f)

|

from time import sleep

from enum import Enum

import napari

import numpy as np

import psygnal

from napari.qt.threading import thread_worker

BOARD_SIZE = 14

INITIAL_SNAKE_LENGTH = 4

class Direction(Enum):

UP = (-1, 0)

DOWN = (1, 0)

LEFT = (0, -1)

RIGHT = (0, 1)

class Snake:

board_update = psygnal.Signal()

nom = psygnal.Signal()

def __init__(self):

self.length = INITIAL_SNAKE_LENGTH

self.direction = Direction.DOWN

self.board = np.zeros((BOARD_SIZE, BOARD_SIZE), dtype=int)

start_position = self._random_empty_board_position

self._head_position = start_position

self.board[start_position] = 1

self.tail = self.board.copy()

self.food = np.zeros_like(self.board)

self.food[self._random_empty_board_position] = 1

def update(self):

self._head_position = self._next_head_position

if self.on_food:

self.length += 1

self._update_food()

self.nom.emit()

self.tail[self.tail > 0] += 1

self.tail[self.tail > self.length] = 0

self.tail[self._head_position] = 1

self.board = self.tail > 0

self.board_update.emit()

def _update_food(self):

self.food = np.zeros_like(self.food)

self.food[self._random_empty_board_position] = 1

@property

def direction(self):

"""not synchronised with velocity"""

return self._direction

@direction.setter

def direction(self, value: Direction):

self._velocity_ = value.value

self._direction = value

@property

def about_to_self_collide(self):

if self._next_board_value == 1:

return True

else:

return False

@property

def on_food(self):

if self.food[self._head_position] == 1:

return True

else:

return False

@property

def _head_position(self):

return self._head_position_

@_head_position.setter

def _head_position(self, value):

self._head_position_ = tuple(value)

@property

def _velocity(self):

return self._velocity_

@property

def _random_empty_board_position(self):

idx = np.where(self.board == 0)

n_idx = len(idx[0])

random_idx = np.random.randint(0, n_idx - 1, 1)

return int(idx[0][random_idx]), int(idx[1][random_idx])

@property

def _next_head_position(self):

next_position = np.array(self._head_position) + np.array(self._velocity)

next_position[next_position > (BOARD_SIZE - 1)] = 0

next_position[next_position < 0] = BOARD_SIZE - 1

return tuple(next_position)

@property

def _next_board_value(self):

return int(self.board[self._next_head_position])

viewer = napari.Viewer()

snake = Snake()

outline = np.ones((BOARD_SIZE + 2, BOARD_SIZE + 2))

outline[1:-1, 1:-1] = 0

outline_layer = viewer.add_image(outline, blending='additive', colormap='blue', translate=(-1, -1))

board_layer = viewer.add_image(snake.board, blending='additive')

food_layer = viewer.add_image(snake.food, blending='additive', colormap='red')

viewer.text_overlay.visible = True

@snake.board_update.connect

def on_board_update():

viewer.text_overlay.text = f'Score: {snake.length - INITIAL_SNAKE_LENGTH}'

board_layer.data = snake.board

food_layer.data = snake.food

@snake.nom.connect

def nom():

print('nom')

viewer.text_overlay.text = 'nom nom nom'

@viewer.bind_key('w')

def up(event=None):

snake.direction = Direction.UP

@viewer.bind_key('s')

def down(event=None):

snake.direction = Direction.DOWN

@viewer.bind_key('a')

def left(event=None):

snake.direction = Direction.LEFT

@viewer.bind_key('d')

def right(event=None):

snake.direction = Direction.RIGHT

@thread_worker(connect={"yielded": snake.update})

def update_in_background():

while True:

sleep(1/10)

if snake.about_to_self_collide:

print('game over!')

sleep(1)

snake.__init__()

yield

worker = update_in_background()

napari.run()

|

import os

from PIL import Image

def argumanetation(path, name):

label = name[-7:]

id = str(int(name[:-7])+300)

newName = id + label

img = Image.open(path+name)

img = img.transpose(Image.ROTATE_90) # rotation 90

#img = img.transpose(Image.ROTATE_180) # rotation 180

#img = img.transpose(Image.ROTATE_270) # rotation 270

# img.show("img/rotateImg.png")

img.save(path+newName)

def mirror(path, name):

label = name[-7:]

id = str(int(name[:-7])+600)

newName = id + label

img = Image.open(path+name)

img.transpose(Image.FLIP_LEFT_RIGHT).save(path+newName)

path = "./dataAndLabel/"

fileNames = os.listdir(path)

errorNames = ['117b33.jpg', '16b44.jpg', '171b55.jpg', '218g32.jpg', '254b11.jpg', '256b22.jpg', '267g23.jpg', '295g13.jpg', '37s23.jpg', '43g23.jpg', '56s23.jpg', '8g14.jpg']

rightNames = set(fileNames).difference(set(errorNames))

for name in rightNames:

# argumanetation(path, name)

mirror(path, name)

|

from django.core.cache.backends import memcached

class MemcachedCache(memcached.MemcachedCache):

def _get_memcache_timeout(self, timeout):

"""Override _get_memcache_timeout so that it accepts 0."""

if timeout == 0: return 0

else: return super(MemcachedCache, self)._get_memcache_timeout(timeout)

|

import socket

def create_command_socket(host, port):

sock = socket.socket()

sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)

sock.bind((host, port))

sock.listen(1)

return sock

def create_data_socket(client):

sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)

print("Connecting to client " + client.address[0] + ":" + str(client.address[1]) + " on dataport " + str(client.data_port))

sock.connect((client.address[0], client.data_port))

return sock

|