branch_name stringclasses 149 values | text stringlengths 23 89.3M | directory_id stringlengths 40 40 | languages listlengths 1 19 | num_files int64 1 11.8k | repo_language stringclasses 38 values | repo_name stringlengths 6 114 | revision_id stringlengths 40 40 | snapshot_id stringlengths 40 40 |

|---|---|---|---|---|---|---|---|---|

refs/heads/master | <file_sep>/* ①book.h文件的完整内容 */

#ifndef _BOOK /*条件编译,防止重复包含的错误*/

#define _BOOK

#include <string.h>

#define NUM 20 /*定义图书常量,此处可以根据实际需要修改常量值*/

struct Student /*学生记录的数据域*/

{

long num; /*学号 */

char name[20]; /*姓名 */

};

struct Book

{

char title[20]; /*书名 */

long number[20]; /*索引号 */

char state[4]; /*借阅状态 */

int kc; /*库存*/

};

typedef struct Book Book;

#define sizeBook sizeof(Book) /*一条图书记录所需要的内存空间大小*/

int readBook(Book book[],int n); /*读入书书籍名称*/

void printB00k( Book *Book , int n); /*输出所有书籍记录的值*/

void sortBook(Book book[],int n,int condition); /*选择法从大到小排序,按condition所规定的条件*/

int searchBook(Book book[],int n,Book b,int condition,int f[]) ; /*根据条件找数组中与b相等的各元素*/

int addBook(Book book[],int n,Book b); /*向数组中增加一条图书信息*/

int deleteBook(Book book[],int n,Book b); /*从数组中删除一条图书信息*/

#endif<file_sep>#include<stdio.h>

#include<stdlib.h>

#include"file.h"

#include"book.h"

void printHead( ) /*打印图书信息的表头*/

{

printf("%ld%s%ld%s%d\n","索引号","书名","学号","姓名","库存");

}

void menu( ) /*顶层菜单函数*/

{

printf("******** 1. 显示基本信息 ********\n");

printf("******** 2. 图书信息管理 ********\n");

printf("******** 3. 借阅人信息管理 ********\n");

printf("******** 4. 借阅数据统计********\n");

printf("******** 5. 根据条件查询 ********\n");

printf("******** 0. 退出 ********\n");

}

void menuBase( ) /*2、基本信息管理菜单函数*/

{

printf("%%%%%%%% 1. 插入图书记录 %%%%%%%%\n");

printf("%%%%%%%% 2. 删除图书记录 %%%%%%%%\n");

printf("%%%%%%%% 3. 修改图书记录 %%%%%%%%\n");

printf("%%%%%%%% 0. 返回上层菜单 %%%%%%%%\n");

}

void peopleBase() /*3、借阅人信息管理菜单函数*/

{

printf("@@@@@@@@@ 1. 插入借阅人信息记录 @@@@@@@@\n");

printf("@@@@@@@@@ 2. 删除借阅人信息记录 @@@@@@@@\n");

printf("@@@@@@@@@ 3. 修改借阅人信息记录 @@@@@@@@\n");

printf("@@@@@@@@@ 0. 返回上层菜单 @@@@@@@@\n");

}

void menuCount( ) /*4、统计菜单函数*/

{

printf("&&&&&&&& 1. 求借阅次数最高 &&&&&&&&\n");

printf("&&&&&&&& 1. 求借阅次数最低 &&&&&&&&\n");

printf("&&&&&&&& 0. 返回上层菜单 &&&&&&&&\n");

}

void menuSearch() /*5、根据条件查询菜单函数*/

{

printf("######## 1. 按书名查询 ########\n");

printf("######## 2. 按索引号查询 ########\n");

printf("######## 3. 借阅人信息查询 ########\n");

printf("######## 0. 返回上层菜单 ########\n");

}

int baseManage(Book book[],int n) /*该函数完成基本图书信息管理*/

/*按索引号进行插入删除修改,索引号不能重复*/

{

int choice,t,find[NUM];

Book b;

do

{

menuBase( ); /*显示对应的二级菜单*/

printf("choose one operation you want to do:\n");

scanf("%d",&choice); /*读入选项*/

switch(choice)

{

case 1: readBook(&b,1); /*读入一条待插入的图书记录*/

n=addBook(book,n,b); /*调用函数插入图书记录*/

break;

case 2: printf("Input the number deleted\n");

scanf("%ld",&b.number); /*读入一个待删除的图书索引号*/

n=deleteBook(book,n,b); /*调用函数删除指定索引号的图书记录*/

break;

case 3: printf("Input the number modified\n");

scanf("%ld",&b.number); /*读入一个待修改的图书索引号*/

t=searchBook(book,n,b,1,find) ; /*调用函数查找指定索引号的图书记录*/

if (t) /*如果该索引号的记录存在*/

{

readBook(&b,1); /*读入一条完整的图书记录信息*/

book[find[0]]=b; /*将刚读入的记录赋值给需要修改的数组记录*/

}

else /*如果该索引号的记录不存在*/

printf("this book is not in,can not be modified.\n"); /*输出提示信息*/

break;

case 0: break;

}

}while(choice);

return n; /*返回当前操作结束后的实际记录条数*/

}

int baseManage1(Student student[],int n) /*该函数完成借阅人基本信息管理*/

/*按学号进行插入删除修改, 学号不能重复*/

{

int choice,t,find[NUM];

Student s;

do

{

peopleBase( ); /*显示对应的二级菜单*/

printf("choose one operation you want to do:\n");

scanf("%d",&choice); /*读入选项*/

switch(choice)

{

case 1: readStudent(&s,1); /*读入一条待插入的借阅人记录*/

n=insertStudent(student,n,s); /*调用函数插入借阅人记录*/

break;

case 2: printf("Input the student number deleted\n");

scanf("%ld",&s.num); /*读入一个待删除的学号*/

n=deleteStudent(student,n,s); /*调用函数删除指定学号的借阅人记录*/

break;

case 3: printf("Input the student number modified\n");

scanf("%ld",&s.num); /*读入一个待修改的学号记录*/

t=searchStudent(student,n,s,1,find) ; /*调用函数查找指定学号的借阅人记录*/

if (t) /*如果该学号的记录存在*/

{

readStudent(&s,1); /*读入一条完整的借阅人记录信息*/

student[find[0]]=s; /*将刚读入的记录赋值给需要修改的数组记录*/

}

else /*如果该学好的借阅人记录不存在*/

printf("this people is not in,can not be modified.\n"); /*输出提示信息*/

break;

case 0: break;

}

}while(choice);

return n; /*返回当前操作结束后的实际记录条数*/

}

void printBookTimes(char *b,double m[NUM][2],int k) /*打印借阅次数通用函数,被countManage 调用

/*形式参数k代表输出不同的内容*/

{

int i;

printf(b);

for(i=0;i<NUM;i++)

printf("%d",m[i][k]);

printf("\n");

}

void countManage(Book book[],int n) /*该函数完成借阅次数的统计功能*/

{

int choice;

double mark[NUM][2];

do

{

menuCount( ); /*显示对应的二级菜单*/

printf("choose one operation you want to do:\n");

scanf("%d",&choice);

switch(choice)

{

case 1: printBookTimes("10本书的最高借阅次数是:\n",mark,0); /*输出最高次数*/

break;

case 2: printBookTimes("·10本书的最低借阅次数是:\n",mark,1); /*输出最低次数*/

break;

case 0: break;

}

}while (choice);

}

void searchManage(Book book[],int n) /*该函数完成根据条件查询功能*/

{

int i,choice,findnum,f[NUM];

Book b;

Student s;

do

{

menuSearch( ); /*显示对应的二级菜单*/

printf("choose one operation you want to do:\n");

scanf("%d",&choice);

switch(choice)

{

case 1: printf("Input a book\'s title will be searched:\n");

scanf("%ld",&b.title); /*输入待查询图书的索引号*/

break;

case 2: printf("Input a book\'s number will be searched:\n");

scanf("%s",&b.number); /*输入待查询图书的书名*/

break;

case 3: printf(" search the informatiom of the readers");

scanf("%ld",&s.num); /*输入待查询借阅人的学号*/

case 0: break;

}

if (choice>=1&&choice<=2)

{

findnum=searchBook(book,n,b,choice,f); /*查找的符合条件元素的下标存于f数组中*/

if (findnum) /*如果查找成功*/

{

printHead( ); /*打印表头*/

for (i=0;i<findnum;i++) /*循环控制f数组的下标*/

printBook(&book[f[i]],1); /*每次输出一条记录*/

}

else

printf("this record does not exist!\n"); /*如果查找不到元素,则输出提示信息*/

}

}while (choice);

}

int runMain(Book book[],Student student[],int n,int choice) /*主控模块,对应于一级菜单其下各功能选择执行*/

{

switch(choice)

{

case 1: printHead( ); /* 1. 显示基本信息*/

sortBook(book,n,1); /*按借阅次数由小到大的顺序排序记录*/

printBook(book,n); /*按借阅次数由小到大的顺序输出所有记录*/

break;

case 2: n=baseManage(book,n); /* 2. 图书基本信息管理*/

break;

case 3: n=baseManage1(student,n); /* 3. 借阅人信息管理*/

break;

case 4: countManage(book,n); /* 4. 借阅次数统计*/

break;

case 5: searchManage(book,n); /* 5. 根据条件查询*/

break;

case 0: break;

}

return n;

}

int main( )

{

Book book[NUM];

Student student[STU]; /*定义实参一维数组存储图书记录*/

int choice,n,m;

n=readFile(book); /*首先读取文件,记录条数返回赋值给n*/

m=readstudentFile(student);

if (!n) /*如果原来的文件为空*/

{

n=createFile(book); /*则首先要建立文件,从键盘上读入一系列记录存于文件*/

}

else if(!m)

{

m=createstudentfile(student);

}

do

{

menu(); /*显示主菜单*/

printf("Please input your choice: ");

scanf("%d",&choice);

if (choice>=0&&choice<=5)

n=runMain(book,student,n,choice); /*通过调用此函数进行一级功能项的选择执行*/

else

printf("error input,please input your choice again!\n");

} while (choice);

sortBook(book,n,1); /*存入文件前借阅次数由小到大排序*/

saveFile(book,n); /*将结果存入文件*/

return 0;

}

<file_sep> /*③ file.h文件的内容如下:*/

#include <stdio.h>

#include <stdlib.h>

#include "book.h"

int createFile(Book book[]) /*建立初始的数据文件*/

{

FILE *fp;

int n;

if((fp=fopen("d:\\book.dat", "wb")) == NULL) /*指定好文件名,以写入方式打开*/

{

printf("can not open book file !\n"); /*若打开失败,输出提示信息*/

exit(0); /*然后退出*/

}

printf("input book's information:\n");

n=readBook(book,NUM); /*调用book.h中的函数读数据*/

fwrite(book,sizeBook,n,fp); /*将刚才读入的所有记录一次性写入文件*/

fclose(fp);

return n;

}

int readFile(Book book[ ] ) /*将文件中的内容读出置于结构体数组book中*/

{

FILE *fp;

int i=0;

if((fp=fopen("d:\\book.dat", "rb")) == NULL) /*以读的方式打开指定文件*/

{

printf("bookfile does not exist,create it first:\n"); /*如果打开失败输出提示信息*/

return 0; /*然后返回0*/

}

fread(&book[i],sizeBook,1,fp); /*读出第一条记录*/

while(!feof(fp)) /*文件未结束时循环*/

{

i++;

fread(&book[i],sizeBook,1,fp); /*再读出下一条记录*/

}

fclose(fp); /*关闭文件*/

return i; /*返回记录条数*/

}

void saveFile(Book book[],int n) /*将结构体数组的内容写入文件*/

{

FILE *fp;

if((fp=fopen("d:\\book.dat", "wb")) == NULL) /*以写的方式打开指定文件*/

{

printf("can not open book file !\n"); /*如果打开失败,输出提示信息*/

exit(0); /*然后退出*/

}

fwrite(book,sizeBook,n,fp);

fclose(fp); /*关闭文件*/

}

int createstudentfile(Student student[])

{

FILE *fq;

int m;

if((fq=fopen("d:\\student.dat","wb"))==NULL)

{

printf("can not open student file !\n");

exit(0);

}

printf("input borrower's information:\n"); /*关闭文件*/

m=readStudent(student,STU);

fwrite(student,sizeStudent,m,fq);

fclose(fq);

return m;

}

int readstudentFile(Student student[ ] ) /*将文件中的内容读出置于结构体数组book中*/

{

FILE *fq;

int j=0;

if((fq=fopen("d:\\student.dat", "rb")) == NULL) /*以读的方式打开指定文件*/

{

printf("studentfile does not exist,create it first:\n"); /*如果打开失败输出提示信息*/

return 0; /*然后返回0*/

}

fread(&student[j],sizeStudent,1,fq); /*读出第一条记录*/

while(!feof(fq)) /*文件未结束时循环*/

{

j++;

fread(&student[j],sizeStudent,1,fq); /*再读出下一条记录*/

}

fclose(fq); /*关闭文件*/

return j; /*返回记录条数*/

}

void savestudentFile(Student student[],int n) /*将结构体数组的内容写入文件*/

{

FILE *fq;

if((fq=fopen("d:\\student.dat", "wb")) == NULL) /*以写的方式打开指定文件*/

{

printf("can not open student file !\n"); /*如果打开失败,输出提示信息*/

exit(0); /*然后退出*/

}

fwrite(student,sizeStudent,n,fq);

fclose(fq); /*关闭文件*/

}<file_sep>#ifndef _BOOK /*条件编译,防止重复包含的错误*/

#define _BOOK

#include <string.h>

#define NUM 3

#define STU 3

struct Book

{

char title[20]; /*书名 */

long number; /*索引号 */

int time; /*借阅次数 */

int kc; /*该图书总数量*/

};

typedef struct Book Book; /*定义图书常量,此处可以根据实际需要修改常量值*/

struct Student /*学生记录的数据域*/

{

long num; /*学号 */

char name[20]; /*姓名 */

Book message;

};typedef struct Student Student;

#define sizeBook sizeof(Book) /*一条图书记录所需要的内存空间大小*/

#define sizeStudent sizeof(Student) /*一个借阅人记录所需要的内存空间大小*/

int readBook(Book book[],int n); /*读入书书籍名称*/

void printBook( Book *Book , int n); /*输出所有书籍记录的值*/

int equal(Book b1,Book b2,int condition);/*比较图书记录是否相同*/

int larger(Book b1,Book b2,int condition); /*根据condition条件比较两本图书索引号的大小*/

void sortBook(Book book[],int n,int condition); /*选择法从小到大排序,按condition所规定的条件*/

int searchBook(Book book[],int n,Book b,int condition,int f[]) ; /*根据条件找数组中与b相等的各元素*/

int addBook(Book book[],int n,Book b); /*向数组中增加一条图书信息*/

int deleteBook(Book book[],int n,Book b); /*从数组中删除一条图书信息*/

int readStudent(Student student[], int n); /*读入借阅人记录值,借阅人为0或读满规定条数记录时停止*/

void printStudent( Student *student, int n); /*输出所有借阅人记录的值*/

int equal1(Student s1,Student s2,int condition);/*比较学生记录是否相同*/

void sortStudent(Student stu[],int n,int condition); /*选择法排序,按condition条件由小到大排序*/

int searchStudent(Student stu[],int n,Student s,int condition,int f[ ]); /*在stu数组中依condition条件查找*/

int insertStudent(Student student[],int n,Student s); /*向数组中增加一条学生信息*/

int deleteStudent(Student student[],int n,Student s);

#endif | 71d9eeb3952ac7aafd44d297d0c57dffde6b325d | [

"C",

"C++"

] | 4 | C | B15090806/B150908 | ab1b31b18ccdaea75881c1d2175474e6e7ed248b | fe7dd24b47b21ec2532763514e11e81ab1ae7ea7 |

refs/heads/master | <repo_name>ciena/ZeroTouchProvisioning<file_sep>/main.go

package main

import (

"bytes"

"flag"

"fmt"

"github.com/tmc/scp"

"golang.org/x/crypto/ssh"

"log"

"os"

"time"

)

const (

//onosIP = "10.0.0.1"

TIMEOUT = 3

)

func main() {

ip := flag.String("ip", "", "IP address of the switch")

host := flag.String("hostname", "", "Hoatname of the switch")

dpid := flag.String("dpid", "", "DPID of the switch")

user := flag.String("user", "root", "Username for the switch login")

password := flag.String("password", "onl", "Password for the switch login")

onosIP := flag.String("onosip", "10.1.0.1", "ONOS controller IP")

flag.Parse()

var buf bytes.Buffer

logger := log.New(&buf, "AUTOCONFIG: ", log.Ltime)

logger.Println("logger initialized")

config := &ssh.ClientConfig{

User: *user,

Auth: []ssh.AuthMethod{

ssh.Password(*password),

},

}

client, err := ssh.Dial("tcp", *ip+":22", config)

if err != nil {

panic("Failed to dial: " + err.Error())

}

cmd1 := "Working on... Hostname: " + *host + " with DPID: " + *dpid + " IP: " + *ip

fmt.Println(cmd1)

scpCmd := "scp"

cmdRC := "echo dpkg -i --force-overwrite /mnt/flash2/ofdpa-i.12.1.1_12.1.1+accton1.7-1_amd64.deb > /etc/rc.local"

hostnameString := fmt.Sprintf("hostname %s", *host)

cmdRChost := "echo " + hostnameString + " >> /etc/rc.local"

cmdRCexit := "echo exit 0 >> /etc/rc.local"

connect := "brcm-indigo-ofdpa-ofagent --dpid=" + *dpid + " --controller=" + *onosIP

cmds := []string{"test -e /etc/.configured && echo 'found' || echo 'notFound'",

"test -e /etc/.connected && echo 'connected' || echo 'notConnected'",

"persist /etc/network/interfaces",

"savepersist",

scpCmd,

"service ofdpa stop",

"dpkg -i --force-overwrite /mnt/flash2/ofdpa-i.12.1.1_12.1.1+accton1.7-1_amd64.deb",

"service ofdpa restart",

"persist /etc/accton/ofdpa.conf",

"savepersist",

cmdRC,

cmdRChost,

cmdRCexit,

"persist /etc/rc.local",

"savepersist",

connect,

"touch /etc/.configured",

"persist /etc/.configured",

"savepersist",

}

for cmdNumber, cmd := range cmds {

session, err := client.NewSession()

if err != nil {

panic("Failed to create session: " + err.Error())

}

defer session.Close()

var b bytes.Buffer

session.Stdout = &b

if cmd == scpCmd {

src := "ofdpa-i.12.1.1_12.1.1+accton1.7-1_amd64.deb"

dst := "/mnt/flash2/" + src

err = scp.CopyPath(src, dst, session)

if _, err := os.Stat(src); os.IsNotExist(err) {

fmt.Printf("no such file or directory: %s", src)

panic(err)

} else {

fmt.Println("SCP Success")

continue

}

}

fmt.Println(" RUNNING: " + cmd)

if cmd == "savepersist" {

session.Run(cmd) //savepersist returns error even if it succeeds (ONL bug)

} else if cmd == connect {

go func() {

time.Sleep(TIMEOUT * time.Millisecond)

timeout <- true

}()

go func() {

fmt.Println(" RUNNING: " + connect)

session.Run(cmd)

}()

time.Sleep(2 * time.Second)

} else {

if err := session.Run(cmd); err != nil {

fmt.Println("Failed to run cmd: " + cmd + " ERROR: " + err.Error())

}

}

rpl := b.String()

if cmdNumber == 0 {

fmt.Println(rpl[:5])

if rpl[:5] == "found" {

fmt.Println("Switch is already configured!")

}

}

if cmdNumber == 1 {

fmt.Println(rpl[:9])

if rpl[:9] == "connected" {

fmt.Println("Switch is already CONNECTED!")

break

} else {

fmt.Println("Switch is configured but not connected to ONOS, connecting now...")

go func() {

fmt.Println(" RUNNING: " + connect)

session.Run(connect)

}()

time.Sleep(2 * time.Second)

connd := "touch /etc/.connected"

session, err := client.NewSession()

if err != nil {

panic("Failed to create session: " + err.Error())

}

defer session.Close()

fmt.Println(" RUNNING: " + connd)

if err := session.Run(connd); err != nil {

fmt.Println("Failed to run cmd: " + connd + " ERROR: " + err.Error())

}

}

break

}

}

}

<file_sep>/Makefile

build:

GOOS=linux GOARCH=amd64 go build -o switchGo

image:

sudo docker build -t cord/fabricdhcpharvester .

run1:

sudo docker-compose -f harvest-compose-1.yml up

run2:

sudo docker-compose -f harvest-compose-2.yml up

runquiet1:

sudo docker-compose -f harvest-compose-1.yml up -d

runquiet2:

sudo docker-compose -f harvest-compose-2.yml up -d<file_sep>/Dockerfile

FROM iron/base

RUN apk update && apk upgrade \

&& apk add python \

&& rm -rf /var/cache/apk/*

ADD dhcpharvester.py /dhcpharvester.py

ADD switchGo /switchGo

ADD main.go /main.go

ADD ofdpa-i.12.1.1_12.1.1+accton1.7-1_amd64.deb /ofdpa-i.12.1.1_12.1.1+accton1.7-1_amd64.deb

ENTRYPOINT [ "python", "/dhcpharvester.py" ]

<file_sep>/README.md

# Zero Touch Provisioning (ZTP) for Whitebox fabric

- It uses DHCP information to see if a switch bootsup and matches it's config based on the MAC in the DHCP request (using DHCP harvest)

- Then it calls the Go program wich configures the switch

- Configuration includes, copying the appropriate OFDPA image, installing ofdpa, restarting ofdpa, hostname, make sure the configuration is persisted across reboots, etc. | f1ea56528cc76499a1b9432584f73db9daf45b2a | [

"Makefile",

"Go",

"Dockerfile",

"Markdown"

] | 4 | Go | ciena/ZeroTouchProvisioning | a262fc3630a17ff415ce06d1ff82af7550a20804 | da75719b39a6cb8b0175a4d7cacdf17f143e5658 |

refs/heads/master | <file_sep># RNCryptor

RNCryptor加密解密Python代码

#### **Dependencies**

/assets/pycrypto-2.6.1.tar.gz

```bash

tar -zxvf /assets/pycrypto-2.6.1.tar.gz

sudo python setup.py install

```

#### **用法**

```bash

python RNCryptor.py

```

```python

cryptor = RNCryptor()

#加密

cryptor.encrypt(Data,password)

#解密

cryptor.decrypt(Data,password)

```

<file_sep>#!/usr/bin/env python

# coding: utf-8

from __future__ import print_function

import hashlib

import hmac

import sys

import base64

from Crypto.Cipher import AES

from Crypto.Protocol import KDF

from Crypto import Random

PY2 = sys.version_info[0] == 2

PY3 = sys.version_info[0] == 3

if PY2:

def to_bytes(s):

if isinstance(s, str):

return s

if isinstance(s, unicode):

return s.encode('utf-8')

to_str = to_bytes

def bchr(s):

return chr(s)

def bord(s):

return ord(s)

elif PY3:

def to_bytes(s):

if isinstance(s, bytes):

return s

if isinstance(s, str):

return s.encode('utf-8')

def to_str(s):

if isinstance(s, bytes):

return s.decode('utf-8')

if isinstance(s, str):

return s

def bchr(s):

return bytes([s])

def bord(s):

return s

class RNCryptor(object):

"""Cryptor for RNCryptor"""

AES_BLOCK_SIZE = AES.block_size

AES_MODE = AES.MODE_CBC

SALT_SIZE = 8

def pre_decrypt_data(self, data):

""" Change this function for handling data before decryption. """

data = to_bytes(data)

return data

def post_decrypt_data(self, data):

""" Removes useless symbols which appear over padding for AES (PKCS#7). """

data = data[:-bord(data[-1])]

return to_str(data)

def decrypt(self, data, password):

data = self.pre_decrypt_data(data)

password = to_<PASSWORD>(password)

n = len(data)

version = data[0]

options = data[1]

encryption_salt = data[2:10]

hmac_salt = data[10:18]

iv = data[18:34]

cipher_text = data[34:n - 32]

hmac = data[n - 32:]

encryption_key = self._pbkdf2(password, encryption_salt)

hmac_key = self._pbkdf2(password, hmac_salt)

if self._hmac(hmac_key, data[:n - 32]) != hmac:

raise Exception("Bad data")

decrypted_data = self._aes_decrypt(encryption_key, iv, cipher_text)

return self.post_decrypt_data(decrypted_data)

def pre_encrypt_data(self, data):

""" Does padding for the data for AES (PKCS#7). """

data = to_bytes(data)

rem = self.AES_BLOCK_SIZE - len(data) % self.AES_BLOCK_SIZE

return data + bchr(rem) * rem

def post_encrypt_data(self, data):

""" Change this function for handling data after encryption. """

return data

def encrypt(self, data, password):

data = self.pre_encrypt_data(data)

password = <PASSWORD>(password)

encryption_salt = self.encryption_salt

encryption_key = self._pbkdf2(password, encryption_salt)

hmac_salt = self.hmac_salt

hmac_key = self._pbkdf2(password, hmac_salt)

iv = self.iv

cipher_text = self._aes_encrypt(encryption_key, iv, data)

version = b'\x03'

options = b'\x01'

new_data = b''.join([version, options, encryption_salt, hmac_salt, iv, cipher_text])

encrypted_data = new_data + self._hmac(hmac_key, new_data)

return self.post_encrypt_data(encrypted_data)

@property

def encryption_salt(self):

return Random.new().read(self.SALT_SIZE)

@property

def hmac_salt(self):

return Random.new().read(self.SALT_SIZE)

@property

def iv(self):

return Random.new().read(self.AES_BLOCK_SIZE)

def _aes_encrypt(self, key, iv, text):

return AES.new(key, self.AES_MODE, iv).encrypt(text)

def _aes_decrypt(self, key, iv, text):

return AES.new(key, self.AES_MODE, iv).decrypt(text)

def _hmac(self, key, data):

return hmac.new(key, data, hashlib.sha256).digest()

def _pbkdf2(self, password, salt, iterations=10000, key_length=32):

return KDF.PBKDF2(password, salt, dkLen=key_length, count=iterations,

prf=lambda p, s: hmac.new(p, s, hashlib.sha1).digest())

def main():

from time import time

cryptor = RNCryptor()

###################password,text#########################

#F17T7AN3HG71

password = "<PASSWORD>($@^"

########encrypt###########

yourimail_password = "<PASSWORD>"

encrypt_password = cryptor.encrypt(yourimail_password,password)

en_yourimail_password = base64.b64encode(encrypt_password)

#######decrypt##########

yourimail_password_base64encode = "<KEY>

base64decodetext = base64.b64decode(yourimail_password_base64encode)

de_yourimail_password = cryptor.decrypt(base64decodetext,password)

#####################################################################################################################################################

#encrypt

print("\n\n\n\n密文:\n",en_yourimail_password)

print("\n\n\n");

#=======================================================

#decrypt

print("明文:\n",de_yourimail_password)

print("\n\n\n\n\n")

#=======================================================

if __name__ == '__main__':

main()

| d8886da4e3098fb83fd93885d69621c798535c4b | [

"Markdown",

"Python"

] | 2 | Markdown | Vxer-Lee/RNCryptor | 53ff159a47300ddaa6d4a5be7948a056710992ba | efe9d22f0767dc3d5649dcd4b0c070192ab928c8 |

refs/heads/main | <file_sep>const colors = ['#DE6E51', '#E8A339', '#68B253'];

const sampleData = [

{

"name": "Feed",

"unit": "Kilograms per hector (kg/ha)",

"values": [

{

"timestamp": "2021-01-15T10:00:00.000",

"value": 0.5

},

{

"timestamp": "2021-01-16T10:00:00.000",

"value": 1.5

},

{

"timestamp": "2021-01-17T10:00:00.000",

"value": 1.4

},

{

"timestamp": "2021-01-18T10:00:00.000",

"value": 1.3

},

{

"timestamp": "2021-01-19T10:00:00.000",

"value": 1.2

},

{

"timestamp": "2021-01-20T10:00:00.000",

"value": 2.5

},

{

"timestamp": "2021-01-21T10:00:00.000",

"value": 2.0

}

]

},

{

"name": "Biomass",

"unit": "Kilograms per hector (kg/ha)",

"values": [

{

"timestamp": "2021-01-15T10:00:00.000",

"value": 10

},

{

"timestamp": "2021-01-16T10:00:00.000",

"value": 11

},

{

"timestamp": "2021-01-17T10:00:00.000",

"value": 12

},

{

"timestamp": "2021-01-18T10:00:00.000",

"value": 12

},

{

"timestamp": "2021-01-19T10:00:00.000",

"value": 13.5

},

{

"timestamp": "2021-01-20T10:00:00.000",

"value": 9

},

{

"timestamp": "2021-01-21T10:00:00.000",

"value": 8

}

]

},

{

"name": "Ammonia",

"unit": "Kilograms per hector (kg/ha)",

"values": [

{

"timestamp": "2021-01-15T10:00:00.000",

"value": 5.1

},

{

"timestamp": "2021-01-16T10:00:00.000",

"value": 7

},

{

"timestamp": "2021-01-17T10:00:00.000",

"value": 7

},

{

"timestamp": "2021-01-18T10:00:00.000",

"value": 6

},

{

"timestamp": "2021-01-19T10:00:00.000",

"value": 5

},

{

"timestamp": "2021-01-20T10:00:00.000",

"value": 4

},

{

"timestamp": "2021-01-21T10:00:00.000",

"value": 3.1

}

]

}

];

// set the dimensions and margins of the graph

const margin = { top: 40, right: 80, bottom: 60, left: 50 },

width = 960 - margin.left - margin.right,

height = 280 - margin.top - margin.bottom;

const parseDate = d3.timeParse("%m/%d/%Y");

const formatDate = d3.timeFormat("%m %d, %Y");

const formatMonth = d3.timeFormat("%b");

const x = d3.scaleTime().range([0, width]);

const y = d3.scaleLinear().range([height, 0]);

// append the svg object to the body of the page

const svg = d3

.select("#root")

.append("svg")

.attr(

"viewBox",

`0 0 ${width + margin.left + margin.right} ${height + margin.top + margin.bottom}`)

.append("g")

.attr("transform", "translate(" + margin.left + "," + margin.top + ")");

/* x axis */

svg

.append("g")

.attr("class", "x axis")

.attr("transform", "translate(0," + height + ")")

.call(d3.axisBottom(x).ticks(3).tickFormat(formatDate));

/* y axis */

svg.append("g").attr("class", "y axis").call(d3.axisLeft(y));

/* y axis lable */

svg

.append("text")

.attr("y", -20)

.attr("x", -20)

.attr("dy", "1em")

.style("text-anchor", "middle")

.text("kg/ha");

appendData(sampleData);

function appendData(sampleData) {

/* combine all values for domain */

const allValues = sampleData.map(d => d.values).flat();

sampleData.forEach((line, index) => {

// line.values = line.values.reverse();

line.values.forEach((d) => {

d.timestamp = new Date(d.timestamp);

d.value = Number(d.value);

});

x.domain(

d3.extent(allValues, (d) => d.timestamp)

);

y.domain([

0,

d3.max(allValues, (d) => d.value),

]);

/* animate axis */

svg

.select(".x.axis")

.transition()

.duration(750)

.call(d3.axisBottom(x).ticks(3).tickFormat(formatDate));

svg

.select(".y.axis")

.transition()

.duration(750)

.call(d3.axisLeft(y));

/* The data lines */

const linePath =

svg.append("path")

.data([line.values])

.attr("class", `line-${index}`)

.attr("d", d3

.line()

.x((d) => x(d.timestamp))

.y((d) => y(d.value))

.curve(d3.curveCardinal))

/* data point circles */

line.values.forEach(d => {

svg.selectAll()

.data([d])

.enter()

.append("circle")

.attr("fill", "white")

.attr("stroke", colors[index])

.attr("cx", (d) => x(d.timestamp))

.attr("cy", (d) => y(d.value))

.attr("r", 3)

})

const pathLength = linePath.node().getTotalLength();

linePath

.attr("stroke-dasharray", pathLength)

.attr("stroke-dashoffset", pathLength)

.attr("stroke-width", 3)

.transition()

.duration(1000)

.attr("stroke-width", 0)

.attr("stroke-dashoffset", 0);

/* dynamic legend text */

svg

.append("text")

.attr("class", "title")

.attr("x", (width / 2) + 100 * (index - 1))

.attr("y", 0 - margin.top / 2)

.attr("text-anchor", "left")

.text(line.name);

/* dynamic legend dots */

svg.append("circle")

.attr("fill", colors[index])

.attr("stroke", "none")

.attr("cx", ((width / 2) + 100 * (index - 1)) - 8)

.attr("cy", -4 - margin.top / 2)

.attr("r", 4)

const focus = svg

.append("g")

.attr("class", "focus")

.style("display", "none");

focus

.append("line")

.attr("class", "x")

.style("stroke-dasharray", "3,3")

.style("opacity", 0.5)

.attr("y1", 0)

.attr("y2", height);

focus

.append("line")

.attr("class", "y")

.style("stroke-dasharray", "3,3")

.style("opacity", 0.5)

.attr("x1", width)

.attr("x2", width);

focus

.append("circle")

.attr("class", "y")

.style("fill", "none")

.attr("r", 4);

focus.append("text").attr("class", "y1").attr("dx", 8).attr("dy", "-.3em");

focus.append("text").attr("class", "y2").attr("dx", 8).attr("dy", "-.3em");

focus.append("text").attr("class", "y3").attr("dx", 8).attr("dy", "1em");

focus.append("text").attr("class", "y4").attr("dx", 8).attr("dy", "1em");

function mouseMove(event) {

const bisect = d3.bisector((d) => d.timestamp).left;

const x0 = x.invert(d3.pointer(event, this)[0]);

const i = bisect(line.values, x0, 1);

const d0 = line.values[i - 1];

const d1 = line.values[i];

const d = x0 - d0.timestamp > d1.timestamp - x0 ? d1 : d0;

focus

.select("circle.y")

.attr("transform", "translate(" + x(d.timestamp) + "," + y(d.value) + ")");

focus

.select("text.y1")

.attr("transform", "translate(" + x(d.timestamp) + "," + y(d.value) + ")")

.text(`${line.name} ${d.value} ${line.unit}`);

/* focus

.select("text.y2")

.attr("transform", "translate(" + x(d.timestamp) + "," + y(d.value) + ")")

.text(d.value); */

focus

.select("text.y3")

.attr("transform", "translate(" + x(d.timestamp) + "," + y(d.value) + ")")

.text(formatDate(d.timestamp));

focus

.select("text.y4")

.attr("transform", "translate(" + x(d.timestamp) + "," + y(d.value) + ")")

.text(formatDate(d.timestamp));

focus

.select(".x")

.attr("transform", "translate(" + x(d.timestamp) + "," + y(d.value) + ")")

.attr("y2", height - y(d.value));

focus

.select(".y")

.attr("transform", "translate(" + width * -1 + "," + y(d.value) + ")")

.attr("x2", width + width);

}

svg

.append("rect")

.attr("width", width)

.attr("height", height)

.style("fill", "none")

.style("pointer-events", "all")

.on("mouseover", () => {

focus.style("display", null);

})

.on("mouseout", () => {

focus.style("display", "none");

})

.on("touchmove mousemove", mouseMove);

});

}

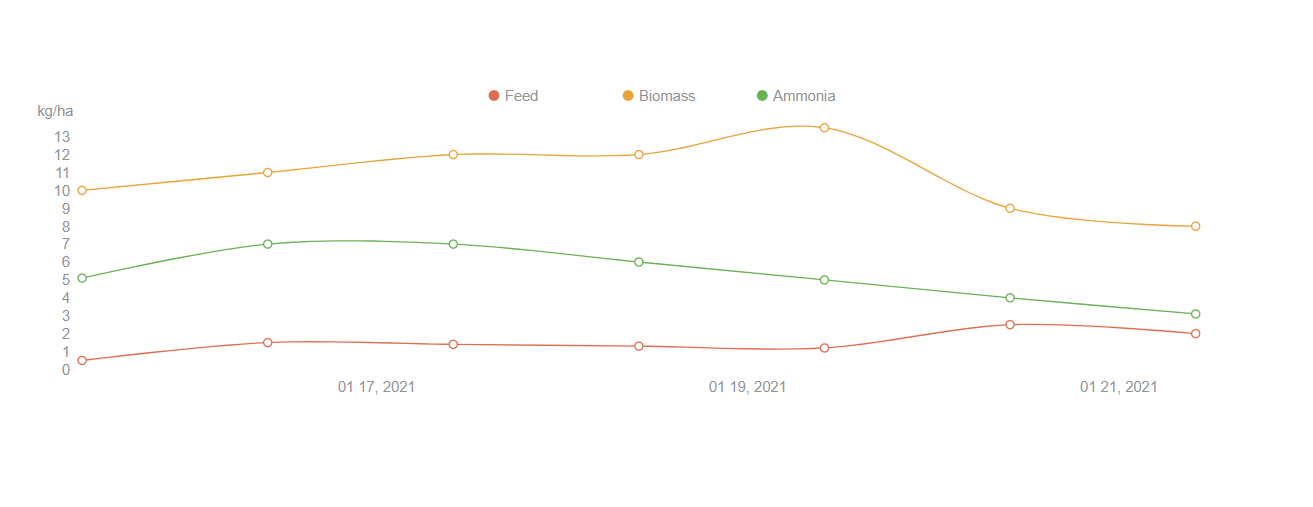

<file_sep># d3-line-chart

***This is an in-progress peek*** <br/>

<p>The chart is setup to handle a non-specified number of lines. I could set the color designation to loop back around, if there were ever more lines than colors. I used a color picker to find the colors and wrote a color array to dynamically color each line as it is looped. Typically, if an API endpoint is not available to set up a microservice, I would run a Json server, to access the initial data from a local json file, like the file I included, but did not use, yet. This data is a hard-coded const. Often my first step for a rough-in.</p>

<p>For interactivity, when you hover over data points, the info for the nearest focussed point should display. Currently, only the last line in the loop works, and I have that in my list of todos, below. </p>

***With more time, I would:*** <br/>

<ul>

<li>refine style, including adding subtle y grid dashed lines, etc. (I could see the very subtle horizontal grid in the assignment doc)</li>

<li>figure out how to have the data lines begin and end before and after the first and last data points</li>

<li>setup promised data (I chose not to use d3.csv, because the provided data sample was basically, already Json. I could have formatted the JSON into csv and posted it to a remote location to grab as a promise.)</li>

<li>make mouse move work for all lines</li>

<li>move the chart into React (The example I demoed, durring the first interview, was a d3.js bar chart reusable component with a live API search in React)</li>

<li>figure out what's going on with the y axis initial format, before the animation</li>

</ul>

| 1e74e259fb9dd3bd84443645366dbca06af15be9 | [

"JavaScript",

"Markdown"

] | 2 | JavaScript | JeffACDev/shrimpy-d3 | 6c086ae77ea29d89a1a31292b9c00a8947626231 | cdc03ee410bddf726c301163255df8cbc9f4c1dd |

refs/heads/main | <file_sep>

# solver.py

def solve(gr):

find = find_empty(gr)

if not find:

return True

else:

row, col = find

for i in range(1,10):

if valid(gr, i, (row, col)):

gr[row][col] = i

if solve(gr):

return True

gr[row][col] = 0

return False

def valid(gr, num, pos):

# Checking row

for i in range(len(gr[0])):

if gr[pos[0]][i] == num and pos[1] != i:

return False

# Checking column

for i in range(len(gr)):

if gr[i][pos[1]] == num and pos[0] != i:

return False

# Checking 3x3 subgrid

gr_x = pos[1] // 3

gr_y = pos[0] // 3

for i in range(gr_y*3, gr_y*3 + 3):

for j in range(gr_x * 3, gr_x*3 + 3):

if gr[i][j] == num and (i,j) != pos:

return False

return True

def print_grid(gr):

for i in range(len(gr)):

if i % 3 == 0 and i != 0:

print("- - - - - - - - - - - -")

for j in range(len(gr[0])):

if j % 3 == 0 and j != 0:

print(" | ", end="")

print(gr[i][j], end = ' ')

print()

def find_empty(gr):

for i in range(len(gr)):

for j in range(len(gr[0])):

if gr[i][j] == 0:

return (i, j) # row, col

return None<file_sep># Sudoku-solver-py

## Designed a sudoku solver in python using pygame library.

* I have used backtracking algorithm to solve the sudoku puzzle.

For GUI I have used pygame library of python.

To input your number click on the blank box and type in the number and press enter:

- If your value is correct then it will take it otherwise a cross will be shown at bottom left corner.

- To clear your choice provided it is wrong press : 'DEL'

If you want the computer to solve it - Simply press 'SPACE'

THANK YOU FOR VISITING THIS REPO!!

| e019fe2e2875978aeca2e015d1d2f81fc0811ab2 | [

"Markdown",

"Python"

] | 2 | Python | shubham25121999/Sudoku-solver-py | 26e4e49ea5c597683ef8c057c028fcf29fcdf8c2 | 72aa15a3ae387e7bf930fec2dd5f38c86afb7326 |

refs/heads/master | <repo_name>eduardoacv2/StoreApp-EL-ICOM5016<file_sep>/appjs/account.js

function Account(uId, uFirstName, uLastName, uBillingAddress, uCreditCard, aType, uBuyHistory, uSellHistory, uPInventory, uPassword)

{

this.uId = "123";

this.uFirstName = fName;

this.uLastName = lName;

this.uBillingAddress = address;

this.uCreditCard = cardNumber;

this.aType = accountType;

this.uBuyHistory = productList1;

this.uSellHistory = productList2;

this.uPInventory = productList3;

this.uPassword = <PASSWORD>;

this.toJSON = toJSON;

}

<file_sep>/appjs/app.js

$(document).on('pagebeforeshow', "#product", function( event, ui ) {

console.log("John");

$.ajax({

url : "http://localhost:3412/StoreApp/product",

contentType: "application/json",

success : function(data, textStatus, jqXHR){

var productList = data.product;

var len = productList.length;

var list = $("#product-list");

list.empty();

var product;

for (var i=0; i < len; ++i){

product = productList[i];

list.append("<li><a onclick=GetProduct(" + product.pName+ ")>" +

"<h2>" + product.pType1 + " " + product.pType2 + "</h2>" +

"<p><strong> Seller: " + product.pSeller + "</strong></p>" +

"<p>" + product.pCondition + "</p>" +

"<p class=\"ui-li-aside\">" + accounting.formatMoney(product.pBidPrice) + "</p>" +

"</a></li>");

}

list.listview("refresh");

},

error: function(data, textStatus, jqXHR){

console.log("textStatus: " + textStatus);

alert("Data not found!");

}

});

});

$(document).on('pagebeforeshow', "#product-view", function( event, ui ) {

$("#upd-type").val(currentProduct.pType1);

$("#upd-typeOfType").val(currentProduct.pType2);

$("#upd-name").val(currentProduct.pName);

$("#upd-price").val(currentProduct.pPrice);

$("#upd-condition").val(currentProduct.pCondition);

$("#upd-startingBid").val(currentProduct.pStartingBid);

$("#upd-bidPrice").val(currentProduct.pBidPrice);

$("#upd-seller").val(currentProduct.pSeller);

$("#upd-buyer").val(currentProduct.pBuyer);

$("#upd-image").val(currentProduct.pImage);

});

////////////////////////////////////////////////////////////////////////////////////////////////

/// Functions Called Directly from Buttons ///////////////////////

function ConverToJSON(formData){

var result = {};

$.each(formData,

function(i, o){

result[o.name] = o.value;

});

return result;

}

function SaveProduct(){

$.mobile.loading("show");

var form = $("#product-form");

var formData = form.serializeArray();

console.log("form Data: " + formData);

var newProduct = ConverToJSON(formData);

console.log("New Car: " + JSON.stringify(newProduct));

var newProductJSON = JSON.stringify(newProduct);

$.ajax({

url : "http://localhost:3412/StoreApp/product",

method: 'post',

data : newProductJSON,

contentType: "application/json",

dataType:"json",

success : function(data, textStatus, jqXHR){

$.mobile.loading("hide");

$.mobile.navigate("#product");

},

error: function(data, textStatus, jqXHR){

console.log("textStatus: " + textStatus);

$.mobile.loading("hide");

alert("Data could not be added!");

}

});

}

var currentPro = {};

function GetProduct(id){

$.mobile.loading("show");

$.ajax({

url : "http://localhost:3412/StoreApp/product/" + id,

method: 'get',

contentType: "application/json",

dataType:"json",

success : function(data, textStatus, jqXHR){

currentPro = data.product;

$.mobile.loading("hide");

$.mobile.navigate("#product-view");

},

error: function(data, textStatus, jqXHR){

console.log("textStatus: " + textStatus);

$.mobile.loading("hide");

if (data.status == 404){

alert("Product not found.");

}

else {

alter("Internal Server Error.");

}

}

});

}

function UpdateProduct(){

$.mobile.loading("show");

var form = $("#product-view-form");

var formData = form.serializeArray();

console.log("form Data: " + formData);

var updPro = ConverToJSON(formData);

updPro.id = currentPro.id;

console.log("Updated Product: " + JSON.stringify(updPro));

var updProJSON = JSON.stringify(updPro);

$.ajax({

url : "http://localhost:3412/StoreApp/product/" + updPro.id,

method: 'put',

data : updProJSON,

contentType: "application/json",

dataType:"json",

success : function(data, textStatus, jqXHR){

$.mobile.loading("hide");

$.mobile.navigate("#product");

},

error: function(data, textStatus, jqXHR){

console.log("textStatus: " + textStatus);

$.mobile.loading("hide");

if (data.status == 404){

alert("Data could not be updated!");

}

else {

alert("Internal Error.");

}

}

});

}

function DeleteProduct(){

$.mobile.loading("show");

var id = currentPro.id;

$.ajax({

url : "http://localhost:3412/StoreApp/product/" + id,

method: 'delete',

contentType: "application/json",

dataType:"json",

success : function(data, textStatus, jqXHR){

$.mobile.loading("hide");

$.mobile.navigate("#product");

},

error: function(data, textStatus, jqXHR){

console.log("textStatus: " + textStatus);

$.mobile.loading("hide");

if (data.status == 404){

alert("Product not found.");

}

else {

alter("Internal Server Error.");

}

}

});

}<file_sep>/appjs/product.js

function Product(pId, pName, pType1, pType2, pBidPrice, pPrice, pStartingBid, pCondition, pImage, pSeller, pBuyer)

{

this.pId = "0";

this.pName = name;

this.pType1 = type;

this.pType2 = typeOfType;

this.pBidPrice = bidPrice;

this.pPrice = price;

this.pStartingBid = startingBid;

this.pCondition = condition;

this.pImage = image;

this.pSeller = seller;

this.pBuyer = buyer;

this.toJSON = toJSON;

}

| 3c3b17422cfd9ab200caaecd2304876ea7648dc8 | [

"JavaScript"

] | 3 | JavaScript | eduardoacv2/StoreApp-EL-ICOM5016 | 97beddc119fe83b6107545d71859eb9e6adbd2ef | d002af41659536480e0843973442a226de1d9230 |

refs/heads/main | <repo_name>Gideon-Zozingao/express-sessions-and-cookies<file_sep>/README.md

# express-sessions-and-cookies | c3c0d430d0130cb0f31a6aa5764f9bf78ad2c0b5 | [

"Markdown"

] | 1 | Markdown | Gideon-Zozingao/express-sessions-and-cookies | cad4265f3dcdcc9ad52c585bb36d70c300b5ca14 | 4460bfbd9308779bc59ce91a4571b808d64e9c94 |

refs/heads/main | <file_sep># :oncoming_bus: Real Time Bus Tracker :oncoming_bus:

On this project, the goal was to make an API connection to gather the bus geolocation every 20 seconds and tracks its route.

# :bomb: How to run

1. Download the repository files

2. Get an API token at MapBox's website and replace it on the mapanimation.js

3. Open the index.html with your favorite browser

# :dart: Future Improvements:

- Calculate and show an ETA (Estimated Time of Arrival), based on historic information

# :registered: Licence Information:

Copyright 2021 <NAME>

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

<file_sep>const colors = ['#4d0ff1', '#6e0723', '#65202e', '#844aef', '#11a94c', '#5af68e', '#838452', '#1ac8cb', '#4e42e8', '#5d6eeb', '#0d3155', '#60d4d0', '#7ecdf3', '#d00cdd', '#af534b', '#fd1269', '#101ff8', '#9aa72c', '#cc5446', '#1a3ab7']

mapboxgl.accessToken = '<KEY>';

let map = new mapboxgl.Map({

container: 'map',

style: 'mapbox://styles/mapbox/streets-v11',

center: [-71.104081, 42.365554],

zoom: 14,

});

map.resize();

var busMark = [];

async function run() {

const locations = await getBusLocation();

console.log(location);

location.forEach(bus, i) => {

var marker = new mapboxgl.marker({'color': colors[i] })

.setLngLat([bus.attributes.longitude, bus.attributes.latitude])

.setPopup(new mapboxgl.Popup({offset: 25, closeOnClick: false, closeButton: false}).setHTML(`<h3>Bus ID <br>${bus.attributes.label}</h3>`))

.addTo(map)

.toogglePopup();

busesMarkers.push(marker);

});

function deleteMark() {

if (busMark!==null) {

for (var i = busMark.length - 1; i >=0; i--) {

busMark[i].remove();

}

}

}

locations.forEach((marker, i) => {

let popUp = document.getElementsByClassName('mapboxgl-popup-content');

popUp[i].style.background = colors[i];

});

setTimeout(deleteMark, 8000);

setTimeout(run, 20000);

}

async function getBusLocation() {

const url = 'https://api-v3.mbta.com/vehicles?filter[route]=1&include=trip';

const response = await fetch(url);

const json = await response.json();

return json.data;

}

map.on('load', function() {

run();

}); | 41ea3281db549d3c2add7b65d22278aedeb6aa9d | [

"Markdown",

"JavaScript"

] | 2 | Markdown | ejhenriques/Real_Time_Bus_Tracker | 38b094bd6852613c528425537d69f40462eec6d8 | 041722a438b978b4ba7ecca8aaf9e208da79111c |

refs/heads/master | <file_sep>import numpy as np

import cv2

from matplotlib import pyplot as plt

# 1.載入原始圖像並灰值化,原始影像為1000x2000 ,3 channel #灰:1 #RGB:3

img = cv2.imread('digits.png')

print("img shape=", img.shape)

gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)#RGB變成GRAY

print("gray shape=", gray.shape)

# 2.切成每一小塊20x20 pixel

# 先將gray 1000x2000 [rowsxcols] pixel , 將row=1000/50 :意為50列20pixel單位

# 再將產生的cols =2000/100 =>意為100行20pixel單位

cells = [np.hsplit(row, 100) for row in np.vsplit(gray, 50)]

# 3.將cells為一50x100 的list轉成array (50,100,20,20)

x = np.array(cells)

print("x shape=", x.shape)

# 4.把20x20 pixel 展平成一行400 pixel

# 將cells array X 轉成5000x400 後並分成兩半 train's data and test's data

train = x[:, :50].reshape(-1, 400).astype(np.float32) # Size = (2500,400)

test = x[:, 50:100].reshape(-1, 400).astype(np.float32) # Size = (2500,400)

print("train shape=", train.shape)

print("test shape=", test.shape)

# 5.Create labels for train and test data

k = np.arange(10)

train_labels = np.repeat(k, 250)[:, np.newaxis]

test_labels = train_labels.copy()

print("train_labels.shape=", train_labels.shape)

print("test_labels.shape=", test_labels.shape)

# 6. Initiate kNN, train the data, then test it with test data for k=1

knn = cv2.ml.KNearest_create()

knn.train(train, cv2.ml.ROW_SAMPLE, train_labels)

ret, result, neighbours, dist = knn.findNearest(test, k=1)

# 7. Now we check the accuracy of classification

# For that, compare the result with test_labels and check which are wrong

matches = result == test_labels

correct = np.count_nonzero(matches)

accuracy = correct * 100.0 / result.size

print(accuracy)

# 8.save the data

np.savez('knn_data.npz', train=train, train_labels=train_labels)

##-------------------------------------------------------------------------

##=========================================================================

##======Predict testing====================================================

# A.Now re-load the data

with np.load('knn_data.npz') as data:

print(data.files)

train = data['train']

train_labels = data['train_labels']

# B.輸入自己手寫的image data 必須是20x20 pixel

Input_Numer = [0] * 10

img_num = [0] * 10

img_res = [0] * 10

testData_r = [0] * 10

result = [0] * 10

result_str = [0] * 10

Input_Numer[0] = "0.png"

Input_Numer[1] = "1.png"

Input_Numer[2] = "2.png"

Input_Numer[3] = "3.png"

Input_Numer[4] = "4.png"

Input_Numer[5] = "5.png"

Input_Numer[6] = "6.png"

Input_Numer[7] = "7.png"

Input_Numer[8] = "8.png"

Input_Numer[9] = "9.png"

font = cv2.FONT_HERSHEY_SIMPLEX

# C.Predicting

for i in range(10): # input 10 number

img_num[i] = cv2.imread(Input_Numer[i], 0)

testData_r[i] = img_num[i][:, :].reshape(-1, 400).astype(np.float32) # Size = (1,400)

ret, result[i], neighbours, dist = knn.findNearest(testData_r[i], k=5)

# 產生white screen以顯示預測結果的白底

img_res[i] = np.zeros((64, 64, 3), np.uint8)

img_res[i][:, :] = [255, 255, 255]

# 將結果轉成字串以便顯示在圖上

print("result[i][0][0] =", result[i][0][0].astype(np.int32)) # change type from float32 to int32

result_str[i] = str(result[i][0][0].astype(np.int32))

if result[i][0][0].astype(np.int32) == i:

cv2.putText(img_res[i], result_str[i], (15, 52), font, 2, (0, 255, 0), 3, cv2.LINE_AA)

else:

cv2.putText(img_res[i], result_str[i], (15, 52), font, 2, (255, 0, 0), 3, cv2.LINE_AA)

# ===顯示輸入與預測結果圖======

Input_Numer_name = ['Input 0', 'Input 1', 'Input 2', 'Input 3', 'Input 4', \

'Input 5', 'Input 6', 'Input 7', 'Input8', 'Input9']

predict_Numer_name = ['predict 0', 'predict 1', 'predict 2', 'predict 3', 'predict 4', \

'predict 5', 'predict6 ', 'predict 7', 'predict 8', 'predict 9']

for i in range(10):

plt.subplot(2, 10, i + 1), plt.imshow(img_num[i], cmap='gray')

plt.title(Input_Numer_name[i]), plt.xticks([]), plt.yticks([])

plt.subplot(2, 10, i + 11), plt.imshow(img_res[i], cmap='gray')

plt.title(predict_Numer_name[i]), plt.xticks([]), plt.yticks([])

plt.show()

| 950bee852e439323a5683c2a6648c158dbaefb14 | [

"Python"

] | 1 | Python | luyihsien/knn-project | 6370415f6795eeaa6cfbbba08f796e4c758cbee7 | 1f03fa2cd39e49a9c8e07946ff2e4f6476d6d770 |

refs/heads/master | <repo_name>JaarenDSacharow/BurgerBuilder<file_sep>/src/containers/BurgerBuilder/BurgerBuilder.js

import React, {Component} from 'react';

import Aux from '../../hoc/Aux/Aux';

import Burger from '../../components/Burger/Burger';

import BuildControls from '../../components/Burger/BuildControls/BuildControls';

import Modal from '../../components/UI/Modal/Modal';

import OrderSummary from '../../components/Burger/OrderSummary/OrderSummary';

import Spinner from '../../components/UI/Spinner/Spinner';

//HOC to wrap this

import withErrorHandler from '../../hoc/WithErrorHandler/WithErrorHandler';

// our own axios instance

import axios from '../../axios-orders-instance';

//global prices to use for calculations

const INGREDIENT_PRICES = {

salad : 0.2,

cheese: 0.3,

meat: 1.0,

bacon: 0.3

}

//the main stateful class component (container)

// keep state and handlers in containers.

class BurgerBuilder extends Component {

// constructor(props) {

// super(props);

// this.state = {};

// }

state = {

ingredients: null, //<-- this is stored in firebase

totalPrice: 5, //base price

purchaseable: false, //for enabling/disabling the order now button

purchasing: false, //for determining if we are in the modal or not

loading: false, //for checking whether or not to show the spinner

error: false // in case the ingredients can't be loaded, we can get rid of the spinner.

}

componentDidMount(){

axios.get('/ingredients.json') //<-- it's firebase, don't forget the JSON extension or you'll get a CORS error

.then((response) =>{

this.setState({

ingredients: response.data

})

}).catch((error)=>{

this.setState({

error: true //this is the last line of defense to display an error to the user

})

})

}

updatePurchaseableState = (updatedIngredients) => {

// const ingredients = {

// ...this.state.ingredients

// };

//take the key and return the value in a new array,

//which we then reduce to get the s

const sum = Object.keys(updatedIngredients)

.map((ingredientKey)=>{

return updatedIngredients[ingredientKey]

})

.reduce((sum, el) =>{

return sum + el

}, 0);

this.setState({purchaseable: sum > 0});

}

purchasingHandler = () =>{

this.setState({

purchasing: true

})

}

purchaseCancelHandler = () => {

this.setState({

purchasing: false

})

}

//here's where we hit the firebase instance

purchaseContinueHandler = () => {

// alert('You Continued!');

//first set loading to true

this.setState({

loading : true

})

//this is a firebase specific thing for real time database

// firbase realtime database creates nodes with data based

// on this request

// you target the base URL node with a .json extension

//it will store data beneath that node

const order = {

ingredients: this.state.ingredients,

price: this.state.totalPrice, //recalc the price on the server in a real app

customer: {

name: '<NAME>',

address : {

street: '123 test street',

city: 'test city',

zip: '123456',

country: 'US'

},

email: "<EMAIL>"

},

deilveryMethod: 'fastest'

}

axios.post('/orders.json', order )

.then((response) =>{

//when you get the response, set loading to false to hide spinner

// and also set purchasing to false to have the modal leave

this.setState({

loading: false,

purchasing: false

})

console.log(response);

}).catch((error) => {

this.setState({

loading: false,

purchasing: false

})

})

}

addIngredientHandler = (type) => {

const oldCount = this.state.ingredients[type];

const newCount = oldCount + 1;

//update state immutably by making a copy with the spread operator

// since we're dealing with an entire object

const updatedIngredients = {

...this.state.ingredients

};

updatedIngredients[type] = newCount;

//now update the price based on the constant above

const oldPrice = this.state.totalPrice;

const priceAdd = INGREDIENT_PRICES[type]; //reference a key, not a property :/

const newPrice = oldPrice + priceAdd;

this.setState({

ingredients: updatedIngredients,

totalPrice : newPrice

});

this.updatePurchaseableState(updatedIngredients);

}

removeIngredientHandler = (type) => {

const oldCount = this.state.ingredients[type];

// to prevent sending a -1 array key to the burger component

// do nothing to update the amount of ingredients if a user

//tries to remove an ingredient whose count is 0

if (oldCount <= 0) {

return;

}

const newCount = oldCount - 1;

//update state immutably by making a copy with the spread operator

// since we're dealing with an entire object

const updatedIngredients = {

...this.state.ingredients

};

updatedIngredients[type] = newCount;

//now update the price based on the constant above

const oldPrice = this.state.totalPrice;

// to prevent sending a deduction when there are no ingredients

let priceToRemove = 0;

if (oldCount !==0) {

priceToRemove = INGREDIENT_PRICES[type]; //reference a key, not a property :/

}

const newPrice = oldPrice - priceToRemove;

this.setState({

ingredients: updatedIngredients,

totalPrice : newPrice

});

this.updatePurchaseableState(updatedIngredients);

}

render(){ //required lifecycle method

//let's add a check here to disable the "LESS" button of a given Build Control

//if there are no ingredients to take away

const disabledButtonInfo = {

...this.state.ingredients

}

for (let key in disabledButtonInfo ){

disabledButtonInfo[key] = disabledButtonInfo[key] <= 0

}

//here we have a check for loading state, replacing the order summary with a spinner

// but now that we're retriving ingredients from firebase, we need to also check for

//this.state.ingredients

let orderSummary = null;

if(this.state.ingredients){

orderSummary = <OrderSummary

ingredients={this.state.ingredients}

price={this.state.totalPrice}

cancel={this.purchaseCancelHandler}

continue={this.purchaseContinueHandler}

/>

}

if (this.state.loading) {

orderSummary = <Spinner />

}

//here we check to see if the burger's ingredients have been populated into state

//if not, show a spinner.

// if you don't have these checks, the app will break because the state isn't

//ready yet, as it relies on an external call

let burger = !this.state.error ? <Spinner /> : <p>Ingredients can't be loaded</p>

if(this.state.ingredients){

burger =

<Aux>

<Burger ingredients={this.state.ingredients} />

<BuildControls

ingredientAdded={this.addIngredientHandler}

ingredientRemoved={this.removeIngredientHandler}

disabled={disabledButtonInfo}

price={this.state.totalPrice}

purchaseable={!this.state.purchaseable}

ordered={this.purchasingHandler}

/>

</Aux>

}

return(

<Aux>

<Modal

show={this.state.purchasing}

modalClosed={this.purchaseCancelHandler}>

{orderSummary}

</Modal>

{burger}

</Aux>

)

}

}

//wrap this component with the HOC to display a global error modal

export default withErrorHandler(BurgerBuilder, axios);<file_sep>/src/components/Burger/OrderSummary/OrderSummary.js

import React from 'react';

import './OrderSummary.css';

import Aux from '../../../hoc/Aux/Aux';

import Button from '../../UI/Button/Button';

const OrderSummary = (props) => {

//create an array with the object's keys, again

const ingredientSummary = Object.keys(props.ingredients)

.map((ingredientKey, index) => {

return(

//now use that key and the corresponding value for it for display

<li key={ingredientKey}><span style={{textTransform:'capitalize'}}>{ingredientKey}</span>: {props.ingredients[ingredientKey]}</li>

)

});

return(

<Aux>

<h3>Your Order</h3>

<p>Delicious burger with the follwing ingredients</p>

<ul>

{ingredientSummary}

</ul>

<p>Total Price: ${props.price.toFixed(2)}</p>

<p>Continue to Checkout?</p>

<Button clicked={props.cancel} btnType={"Danger"}>Cancel</Button>

<Button clicked={props.continue} btnType={"Success"}>Continue</Button>

</Aux>

)

}

export default OrderSummary;<file_sep>/src/components/Burger/Burger.js

import React from 'react';

import './Burger.css';

import BurgerIngredient from './BurgerIngredient/BurgerIngredient';

import Aux from '../../hoc/Aux/Aux';

const Burger = (props) => {

//transformed ingredients take the key value pairs

//from the props and returns an array based on the value

// of the ingredients and the number (key : value)

//Object.keys returns an array based on the object keys

let transFormedIngredients = Object.keys(props.ingredients)

.map((ingredientKey) => {

console.log(ingredientKey);

//props.ingredients[ingredientsKey] returns the value from that key

console.log(props.ingredients[ingredientKey]);

//now we use the Array method to create a new array of a length that matches the key above

//spread operator creates a new array based on the current key

return[...Array(props.ingredients[ingredientKey])]

//and finally we map that array with a blank arg and index

//and render BurgerIngredient components in a list

.map((_, index) => {

return <BurgerIngredient key={ingredientKey + index} type={ingredientKey}/>

})

})

//after all of this, call reduce on the array to flatten it so we can see

//if there are any ingredients for the purposes of displaying a message

// reduce returns a value based on the elements of the array

// in this case we take each subarray and concat it to the main array

.reduce((arr,el) =>{

return arr.concat(el);

}, []);

console.log(transFormedIngredients.length);

if(transFormedIngredients.length === 0) {

transFormedIngredients = <p>Please begin adding ingredients.</p>;

}

return(

<Aux>

<div className="Burger">

<BurgerIngredient type="bread-top"/>

{transFormedIngredients}

<BurgerIngredient type="bread-bottom"/>

</div>

</Aux>

);

}

export default Burger;<file_sep>/src/hoc/Layout/Layout.js

import React, {Component} from 'react';

import Aux from '../../hoc/Aux/Aux';

import './Layout.css';

import Toolbar from '../../components/Navigation/Toolbar/Toolbar';

import SideDrawer from '../../components/Navigation/SideDrawer/SideDrawer';

//this component is merely a wrapper that returns props.children (see how it's used in APP)

// this is so we can have a universal layout for all child components

// it also includes all navigation and default layouts

//I changed it to a container so I can handle the

//opening and closing of the sidedrawer component

class Layout extends Component {

state = {

showSideDrawer: false

}

sideDrawerClosedHandler = () => {

this.setState({

showSideDrawer: false

})

}

sideDrawerToggle= () => {

this.setState({

showSideDrawer: !this.state.showSideDrawer

})

}

render() {

return(

<Aux>

<Toolbar toggleSideDrawer={this.sideDrawerToggle} />

<SideDrawer

open={this.state.showSideDrawer}

clicked={this.sideDrawerClosedHandler} />

<main className="Content">

{this.props.children}

</main>

</Aux>

);

}

}

export default Layout;<file_sep>/src/components/UI/Modal/Modal.js

import React, {Component} from 'react';

import './Modal.css';

import Aux from '../../../hoc/Aux/Aux';

import Backdrop from '../Backdrop/Backdrop'

class Modal extends Component {

//without shouldComponentUpdate, you'll trigger a rerender

//EVERYTIME state changes in the build control component.

// this lifecycle method improves performance by only rerendering

//if the right props change, in this case, we only want the modal

//to trigger a renender if it is visible.

//NOTE: because this component has children, you have to check for that too

// if you want to display the spinner in the ORDERSUMMARY child component.

shouldComponentUpdate(nextProps, nextState) {

return nextProps.show !== this.props.show || nextProps.children !== this.props.children;

}

componentDidUpdate() {

console.log('[Modal] will update');

}

render(){

return(

<Aux>

<Backdrop show={this.props.show} clicked={this.props.modalClosed}/>

<div style={{

//inline styles to display based on the show prop

transform: this.props.show ? 'translateY(0)' : 'translateY(-100vh)',

opacity: this.props.show ? '1' : '0'

}}

className="Modal"

>

{this.props.children}

</div>

</Aux>

)

}

}

export default Modal; | 4b232894468459ece3f685749997ba6103855980 | [

"JavaScript"

] | 5 | JavaScript | JaarenDSacharow/BurgerBuilder | 3db50112c25508a03373a180d3896cb4e7f1509b | a111dc291d3157f4e5abae74740f32a6cf4be7e2 |

refs/heads/master | <repo_name>jane-great/ChainBook<file_sep>/README.md

## Build Setup

``` bash

# install dependencies

npm install

# serve with hot reload at localhost:8080

npm run dev

# build for production with minification

npm run build

# build for production and view the bundle analyzer report

npm run build --report

# run unit tests

npm run unit

# run all tests

npm test

```

# mongodb install

从mongodb官网现在并安装mongodb

https://www.mongodb.com/download-center?jmp=tutorials&_ga=2.99390234.503106171.1527646565-1887027323.1527385485#atlas

启动mongodb

https://docs.mongodb.com/tutorials/install-mongodb-on-windows/

导入db文件夹中的db初始化文件

<file_sep>/front/src/apis/base.js

export default function (request) {

return {

getCommon() {

return request({ url: 'src/mock/base/test.json' }).then(({ data }) => data);

},

getForText() {

return Promise.resolve({});

}

};

}

<file_sep>/smartcontracts/test/Transaction.test.js

var BuyAndSell = artifacts.require("./BuyAndSell.sol");

var RentAndLease = artifacts.require("./RentAndLease.sol");

var Transaction = artifacts.require("./Transaction.sol");

var BookOwnerShip = artifacts.require("./BookOwnerShip.sol");

contract("Transaction", function(accounts) {

var buys;

var rents;

var book;

var trans;

BookOwnerShip.deployed().then(function(ins) {

book = ins;

})

Transaction.deployed().then(function(ins) {

trans = ins;

})

BuyAndSell.deployed().then(function(ins) {

buys = ins;

})

RentAndLease.deployed().then(function(ins) {

rents = ins;

})

it("初始化成功", async () => {

let addr = await trans.ceoAddress();

assert.equal(addr, accounts[0], "ceo地址设置正确");

await buys.addAddressToWhitelist(trans.address);

await rents.addAddressToWhitelist(trans.address);

})

it("只有ceo可以设置合约地址", async () => {

await trans.setBuyAndSell(buys.address,{from:accounts[1]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "error message must contain revert");

});

let buyAddr = await trans.buyAndSell();

assert.equal(buyAddr, 0, "买卖合约地址尚未设置");

await trans.setBuyAndSell(buys.address);

let buyAddr2 = await trans.buyAndSell();

assert.equal(buyAddr2, buys.address, "买卖合约地址设置正确");

await trans.setBuyAndSell(rents.address,{from:accounts[1]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "error message must contain revert");

});

let rentAddr = await trans.rentAndLease();

assert.equal(rentAddr, 0, "租赁合约地址尚未设置");

await trans.setRentAndLease(rents.address);

let rentAddr2 = await trans.rentAndLease();

assert.equal(rentAddr2, rents.address, "租赁合约地址设置正确");

})

it("只有ceo可以设置交易费用", async () => {

// 不是ceo不能设置交易价格

await trans.setBuyFees(1,{from:accounts[1]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "error message must contain revert");

})

let buyfees = await trans.getBuyFees();

assert.equal(buyfees, 0, "买卖比例为初始值");

// ceo 可以设置交易价格

await trans.setBuyFees(100);

let buyfees2 = await trans.getBuyFees();

assert.equal(buyfees2, 100, "买卖比例为100");

// 不是ceo,不能设置租赁价格

await trans.setLeaseFees(1,{from:accounts[1]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "error message must contain revert");

})

let rentfees = await trans.getLeaseFees();

assert.equal(rentfees, 0, "租赁比例为0");

// ceo,可以设置租赁价格

await trans.setLeaseFees(100);

let rentfees2 = await trans.getLeaseFees();

assert.equal(rentfees2, 100, "租赁比例为100");

})

it("出售书籍", async () => {

// 不是所有者,不允许出售书籍

await book.approve(trans.address,0, {from:accounts[0]});

await trans.sell(book.address, 0, 100, {from:accounts[1]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "非所有者不能出售图书");

})

let owner = await book.ownerOf(0);

assert(owner, accounts[0], "图书所有者不变");

// 图书所有者,允许出售图书

await trans.sell(book.address, 0, 100, {from:accounts[0]}); // 出售图书

let owner3 = await book.ownerOf(0);

assert.equal(owner3, trans.address, "图书所有权转移到合约");

await book.transfer(accounts[0], 0).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "出售书籍后,原所有者无法转移图书所有权");

})

let owner2 = await book.ownerOf(0);

assert.equal(owner2, trans.address, "图书所有权不变");

let allowRead = await book.allowToRead(accounts[0], 0);

assert.equal(allowRead, false, "出售书籍后无法阅读图书");

})

it("修改出售价格", async () => {

// 不是所有者,不允许修改价格

await trans.setSellPrice(book.address, 0, 1, {from:accounts[1]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "不是所有者不允许修改价格");

})

let sellinfo = await trans.getSellInfo(book.address, 0);

assert.equal(sellinfo[0], accounts[0], "售卖人不变");

assert.equal(sellinfo[1].toNumber(), 100, "售卖价格不变");

// 图书所有者,允许修改价格

await trans.setSellPrice(book.address, 0 , 1000, {from:accounts[0]});

let info = await trans.getSellInfo(book.address, 0);

assert.equal(info[0], accounts[0], "售卖人不变");

assert.equal(info[1].toNumber(), 1000, "售卖价格变为1000");

let owner = await book.ownerOf(0);

assert.equal(owner, trans.address, "图书所有权在合约");

})

it("取消出售", async () => {

// 不是所有者,不允许出售

await trans.cancelSell(book.address, 0, {from:accounts[1]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "不是所有者不允许取消出售");

})

let addr = await book.ownerOf(0);

assert.equal(addr, trans.address, "图书所有权不变");

// 图书所有者,出售成功

await trans.cancelSell(book.address,0);

let addr2 = await book.ownerOf(0);

assert.equal(addr2, accounts[0], "图书所有者变为accounts[0]");

let approveAddr = await book.getApproved(0);

assert.equal(approveAddr, 0, "图书授权取消");

let allowRead = await book.allowToRead(accounts[0], 0);

assert.equal(allowRead, true, "accounts[0]允许阅读图书");

})

it("购买图书", async () => {

// 重新出售图书

await book.approve(trans.address, 0);

await trans.sell(book.address, 0, 999);

// 金额不够,购买失败

await trans.buy(book.address, 0, {from:accounts[1], value: 10}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "金额不够,购买失败");

})

let owner = await book.ownerOf(0);

assert.equal(owner, trans.address, "图书所有权不变");

// 金额足够,购买成功

await trans.buy(book.address, 0, {from:accounts[2], value: 1000});

let owner2 = await book.ownerOf(0);

assert.equal(owner2, accounts[2], "accounts[2]拥有图书0");

let allowRead = await book.allowToRead(accounts[2], 0);

assert.equal(allowRead, true, "accounts[2]允许阅读图书");

})

it("出租图书", async () => {

await book.approve(trans.address, 0, {from:accounts[2]});

// 不是所有者,不能出租图书

await trans.rent(book.address, 0 , 10, 3600, {from:accounts[0]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "不是所有者不允许出租");

})

// 所有者允许出租图书

await trans.rent(book.address, 0, 10000, 3600, {from:accounts[2]});

let owner = await book.ownerOf(0);

assert.equal(owner, accounts[2], "accounts[2]拥有图书所有权");

let allowRead = await book.allowToRead(accounts[2], 0 );

assert.equal(allowRead, true, "accounts[2]允许阅读图书");

})

it("修改出售信息", async () => {

// 不是所有者,不允许修改出售信息

await trans.setRentInfo(book.address, 0, 1, 7200, {from: accounts[0]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "不是所有者修改出售信息");

})

let info = await trans.getRentInfo(book.address, 0);

assert.equal(info[0], accounts[2], "所有者不变");

assert.equal(info[1].toNumber(), 10000, "价格不变");

assert.equal(info[3].toNumber(), 3600, "出售时间不变");

// 图书所有者允许修改出售信息

await trans.setRentInfo(book.address, 0, 20000, 7200, {from:accounts[2]});

let res = await trans.getRentInfo(book.address, 0);

assert.equal(res[0], accounts[2], "所有者不变");

assert.equal(res[1].toNumber(), 20000, "价格变为20000");

assert.equal(res[3].toNumber(), 7200, "出售时间变为7200");

let owner = await book.ownerOf(0);

assert.equal(owner, accounts[2], "图书所有者还是accounts[2]");

})

it("取消出租", async () => {

// 不是所有者,不允许取消出租

await trans.cancelRent(book.address, 0, {from:accounts[0]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "不是所有者不允许取消");

})

let res = await book.isRent(0);

assert.equal(res, true, "图书正在出租");

// 图书所有者运行取消出租

await trans.cancelRent(book.address, 0, {from:accounts[2]});

let res2 = await book.isRent(0);

assert.equal(res2, false, "图书已经取消出租");

let owner = await book.ownerOf(0);

assert.equal(owner, accounts[2], "图书所有者不变");

let allowRead = await book.allowToRead(accounts[2], 0);

assert.equal(allowRead, true, "账户2允许阅读图书");

})

it("租赁图书", async () => {

// 重新出售图书

await book.approve(trans.address, 0, {from:accounts[2]});

await trans.rent(book.address, 0, web3.toWei(1,'ether'), 2, {from:accounts[2]});

// 金额不够,租赁失败

await trans.lease(book.address, 0, {from:accounts[1], value:1000}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "金额不够,租赁失败");

})

let allowRead = await book.allowToRead(accounts[1], 0);

assert.equal(allowRead, false, "不允许阅读");

let leaser = await book.tokenIdToLeaser(0);

assert.equal(leaser, 0, "无人租赁");

// 金钱足够,租赁成功

await trans.lease(book.address, 0, {from:accounts[1], value:web3.toWei(3,'ether')});

let allowRead2 = await book.allowToRead(accounts[1],0);

assert.equal(allowRead2, true, "允许账户1阅读");

let allowRead3 = await book.allowToRead(accounts[2],0);

assert.equal(allowRead3, false, "不允许账户2阅读");

let owner = await book.ownerOf(0);

assert.equal(owner, accounts[2], "图书所有权不转移");

let leaser2 = await book.tokenIdToLeaser(0);

assert.equal(leaser2, accounts[1], "账户1为租赁人");

let islease = await book.isLease(0);

assert.equal(islease, true, "书籍正在租赁");

await trans.cancelRent(book.address, 0, {from:accounts[2]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "租赁时不允许取消租赁");

})

await book.rentCancel(0,{from:accounts[2]}).then(assert.fail).catch(function(error) {

assert(error.message.indexOf('revert') >= 0, "租赁时不允许取消租赁");

})

})

it("提款成功", async () => {

let balance02 = web3.fromWei(web3.eth.getBalance(accounts[2]).toNumber(),'ether');

await trans.withdraw({from:accounts[2]});

let balance12 = web3.fromWei(web3.eth.getBalance(accounts[2]).toNumber(),'ether');

assert.equal(balance12 > balance02 + web3.toWei(0.5,'ether'), true, "转账成功");

let balance01 = web3.fromWei(web3.eth.getBalance(accounts[1]).toNumber(),'ether');

await trans.withdraw({from:accounts[1]});

let balance11 = web3.fromWei(web3.eth.getBalance(accounts[1]).toNumber(),'ether');

assert.equal(balance11 > balance01 + web3.toWei(0.5,'ether'), true, "转账成功");

})

})

<file_sep>/server/utils/logger.js

var log4js = require('log4js');

var logger = log4js.getLogger();

logger.level = 'debug';

//debug

EVENT.ENTER_FUNCTION = "enter function";

log4js.configure({

appenders: [

{

type: 'console',

layout: {

type: 'pattern',

pattern: "[%d{ISO8601}][%p][%x{pid}][%c] >>>%m",

tokens: {

"pid": function () {

return process.pid;

}

}

}

},

{

type: 'file',

filename: config.log.file,//文件的配置路径

//日志文件大小10m

maxLogSize: config.log.maxLogSize,

backups: config.log.backups,

layout: {

type: 'pattern',

pattern: "[%d{ISO8601}][%p][%x{pid}][%c] >>>%m",

tokens: {

"pid": function () {

return process.pid;

}

}

}

}

],

replaceConsole: true

});

function Logger(filepath) {

var category;

if (filepath == undefined || filepath.replace == undefined) {

category = 'common';

} else {

category = filepath.replace(/.*([\/\\]server)+/, "");

}

this._logger = log4js.getLogger(category);

this._logger.setLevel(config.log.level);

}