branch_name stringclasses 149 values | text stringlengths 23 89.3M | directory_id stringlengths 40 40 | languages listlengths 1 19 | num_files int64 1 11.8k | repo_language stringclasses 38 values | repo_name stringlengths 6 114 | revision_id stringlengths 40 40 | snapshot_id stringlengths 40 40 |

|---|---|---|---|---|---|---|---|---|

refs/heads/master | <repo_name>wysohn/HideOres<file_sep>/HideOres/src/com/hideores/utils/BlockHider.java

package com.hideores.utils;

import com.hideores.main.HideOres;

import com.hideores.main.HideOresConfigs;

public class BlockHider {

public static HideOresConfigs config = HideOres.config;

public static void checkAndCopy(byte[] original, boolean newDataSystem) {

//LER init

LittleEndianReader LER = new LittleEndianReader(original);

if(newDataSystem){

//while has bytes to read

while(LER.getRemaining() > 0){

//a single data

short data = LER.readShort();

//extract type and meta from data

int type = ((data & 0xFFFF) >> 4);//mask to 16 bits first so large block IDs are not sign-extended into negative values

//int meta = (data & 0x000F);

//if ore

if (config.getOres().contains(type)) {

int replacingType;//replacing block //TODO: make replacing block list

int replacingMeta = 0;//replacing meta

if(config.getReplacingBlocks().size() == 0)//set to 1 if nothing inside the list

replacingType = 1;

else{

int random = (int) (Math.random() * (config.getReplacingBlocks().size()));

replacingType = config.getReplacingBlocks().get(random);//get a random block from list

}

short combine = 0;

//backup

combine = (short) ((replacingType << 4) | replacingMeta);//combine type and meta

//Bukkit.getLogger().log(Level.INFO,"type ["+type+"] found and changed into ["+replacingType+"]");

data = combine;//replace previous data

LER.putShort(data);//write new data

//Bukkit.getLogger().info("sky: "+skylight+" block: "+blocklight

// +" type: "+replacingType+" meta: "+replacingMeta);

}

}

}else{

while(LER.getRemaining() > 0){

byte data = LER.readByte();

//Bukkit.getLogger().log(Level.INFO,"type ["+data+"] found");

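//if ore (mask to 0-255: Java bytes are signed, so IDs above 127 would otherwise compare as negative)

if (config.getOres().contains(Integer.valueOf(data & 0xFF))) {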

int replacingType;//replacing block //TODO: make replacing block list

if(config.getReplacingBlocks().size() == 0)//set to 1 if nothing inside the list

replacingType = 1;

else{

int random = (int) (Math.random() * (config.getReplacingBlocks().size()));

replacingType = config.getReplacingBlocks().get(random);//get a random block from list

}

//Bukkit.getLogger().log(Level.INFO,"type ["+data+"] found and changed into ["+replacingType+"]");

data = (byte) replacingType;//replace previous data

LER.putByte(data);//write new data

}

}

}

System.arraycopy(LER.toByteArray(), 0, original, 0, original.length);

}

public static void checkAndCopy(char[] original){

byte[] b = new byte[original.length];

int i = 0;

for(char c : original){

b[i] = (byte) c; i++;

}

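//System.arraycopy cannot copy a byte[] into a char[] (it throws ArrayStoreException), so write back element by element

for(int j = 0; j < b.length; j++){

original[j] = (char) (b[j] & 0xFF);

}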

}

}

<file_sep>/HideOres/src/com/hideores/core/v1_7_R2/PlayerHooker.java

package com.hideores.core.v1_7_R2;

import org.bukkit.craftbukkit.v1_7_R2.entity.CraftPlayer;

import org.bukkit.entity.Player;

import com.hideores.core.Iinstance.IPlayerHooker;

import com.hideores.utils.ReflectionHelper;

public class PlayerHooker implements IPlayerHooker{

public void hookPlayer(Player player){

CraftPlayer p = (CraftPlayer) player;

ReflectionHelper.setPrivateFinal(p.getHandle(), "chunkCoordIntPairQueue", new ChunkCoordQueue(p));

}

}

<file_sep>/HideOres/src/com/hideores/main/HideOresConfigs.java

package com.hideores.main;

import java.util.ArrayList;

import java.util.List;

import org.bukkit.configuration.file.FileConfiguration;

import org.bukkit.plugin.java.JavaPlugin;

public class HideOresConfigs {

private FileConfiguration config;

private JavaPlugin plugin;

public FileConfiguration getConfig() {

return config;

}

private List<Integer> ores = new ArrayList<Integer>(){{add(56);}};

private List<Integer> replacingBlocks = new ArrayList<Integer>(){{add(1);add(3);}};

private int radius = 6;

public HideOresConfigs(JavaPlugin plugin){

this.config = plugin.getConfig();

this.plugin = plugin;

config.options().copyDefaults(true);

config.addDefault("ores", ores);

config.addDefault("replacingBlocks", replacingBlocks);

config.addDefault("radius", radius);

loadConfigs();

plugin.saveConfig();

}

public void loadConfigs(){

ores = config.getIntegerList("ores");

replacingBlocks = config.getIntegerList("replacingBlocks");

radius = config.getInt("radius");

}

public void saveConfigs(){

config.set("ores", this.getOres());

config.set("replacingBlocks", this.getReplacingBlocks());

config.set("radius", this.getRadius());

plugin.saveConfig();

}

public List<Integer> getOres() {

return ores;

}

public void setOres(List<Integer> ores) {

this.ores = ores;

}

public List<Integer> getReplacingBlocks() {

return replacingBlocks;

}

public void setReplacingBlocks(List<Integer> replacingBlocks) {

this.replacingBlocks = replacingBlocks;

}

public int getRadius() {

return radius;

}

public void setRadius(int radius) {

this.radius = radius;

}

}

<file_sep>/HideOres/src/com/hideores/core/v1_8_R2/MapChunkCalculation.java

package com.hideores.core.v1_8_R2;

import java.util.Map;

import org.bukkit.World;

import com.hideores.cache.ChunkCoord;

import com.hideores.cache.ChunkMapCache;

import com.hideores.core.Iinstance.IMapChunkCalculation;

import com.hideores.main.HideOres;

import com.hideores.utils.BlockHider;

import com.hideores.utils.ReflectionHelper;

import net.minecraft.server.v1_8_R2.PacketPlayOutMapChunk;

import net.minecraft.server.v1_8_R2.PacketPlayOutMapChunk.ChunkMap;

import net.minecraft.server.v1_8_R2.PacketPlayOutMapChunkBulk;

public class MapChunkCalculation implements IMapChunkCalculation{

public static void calcAndChange(World world, PacketPlayOutMapChunk packet){

int x = (int) ReflectionHelper.getPrivateField(packet, "a");

int z = (int) ReflectionHelper.getPrivateField(packet, "b");

//get original chunk map c from packet

ChunkMap originalChunkMap = (ChunkMap) ReflectionHelper.getPrivateField(packet, "c");

//make a copy of chunk map

ChunkMap newChunkMap = new ChunkMap();

newChunkMap.b = originalChunkMap.b;

//calculate and change new chunk map

ChunkCoord coord = new ChunkCoord(world.getName(),x,z);

if((newChunkMap.a = HideOres.getCacheManager().getCache(coord)) == null){

newChunkMap.a = originalChunkMap.a.clone();

BlockHider.checkAndCopy(newChunkMap.a, true);

HideOres.getCacheManager().putCache(coord, newChunkMap.a.clone());

}

//put new chunk map to packet

ReflectionHelper.setPrivateField(packet, "c", newChunkMap);

}

public static void calcAndChange(World world, PacketPlayOutMapChunkBulk packet){

int[] xArray = (int[]) ReflectionHelper.getPrivateField(packet, "a");

int[] zArray = (int[]) ReflectionHelper.getPrivateField(packet, "b");

ChunkMap[] originalChunkMapArray = (ChunkMap[]) ReflectionHelper.getPrivateField(packet, "c");

ChunkMap[] newChunkMapArray = new ChunkMap[originalChunkMapArray.length];

int index = 0;

for(ChunkMap originalChunkMap : originalChunkMapArray){

int x = xArray[index];

int z = zArray[index];

//make a copy of chunk map

ChunkMap newChunkMap = new ChunkMap();

newChunkMap.b = originalChunkMap.b;

//calculate and change new chunk map

ChunkCoord coord = new ChunkCoord(world.getName(),x,z);

if((newChunkMap.a = HideOres.getCacheManager().getCache(coord)) == null){

newChunkMap.a = originalChunkMap.a.clone();

BlockHider.checkAndCopy(newChunkMap.a, true);

HideOres.getCacheManager().putCache(coord, newChunkMap.a.clone());

}

//put it into new array

newChunkMapArray[index] = newChunkMap; index++;

}

//put new chunk map array to packet

ReflectionHelper.setPrivateField(packet, "c", newChunkMapArray);

}

}

<file_sep>/HideOres/src/com/hideores/core/Iinstance/IBlockNotifier.java

package com.hideores.core.Iinstance;

import org.bukkit.block.Block;

import org.bukkit.entity.Player;

public interface IBlockNotifier {

public void notifyBlock(Player player, double x, double y, double z);

public boolean canSee(Block block);

}

<file_sep>/README.md

# HideOres

hmm

<file_sep>/HideOres/src/com/hideores/core/Iinstance/IChunkCoordQueue.java

package com.hideores.core.Iinstance;

public interface IChunkCoordQueue {

}

<file_sep>/HideOres/src/com/hideores/utils/LittleEndianReader.java

package com.hideores.utils;

import java.nio.ByteBuffer;

import java.nio.ByteOrder;

public class LittleEndianReader{

private ByteBuffer bytebuffer;

public LittleEndianReader(byte[] data){

bytebuffer = ByteBuffer.wrap(data);

bytebuffer.order(ByteOrder.LITTLE_ENDIAN);

}

public byte readByte(){

return bytebuffer.get();

}

public short readShort(){

return bytebuffer.getShort();

}

public void putByte(byte b){

bytebuffer.position(bytebuffer.position() - 1);

bytebuffer.put(b);

}

public void putShort(short s){

bytebuffer.position(bytebuffer.position() - 2);

bytebuffer.putShort(s);

}

public void reset(){

bytebuffer.rewind();

}

public int getRemaining(){

return bytebuffer.remaining();

}

public byte[] toByteArray(){

return bytebuffer.array();

}

}

| d0db600142dad67e2e694a1008c11d3dfc842e8e | [

"Markdown",

"Java"

] | 8 | Java | wysohn/HideOres | 21e84e2d06f2b655c95c21b5558fae3e2dddb726 | 263e37f84ae41e1ac4ec93766af2500479392abe |

refs/heads/master | <file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.AI;

public class EnemySight : MonoBehaviour

{

public bool playerInSight = false;

public float fieldOfView = 110;

public Vector3 alertPosition = Vector3.zero;

private Animator playerAnim;

private NavMeshAgent navAgent;

private SphereCollider collider;

private Vector3 preLastPlayerPosition;

void Awake()

{

playerAnim = GameObject.FindGameObjectWithTag(Tags.player).GetComponent<Animator>();

navAgent = GetComponent<NavMeshAgent>();

collider = GetComponent<SphereCollider>();

}

void Start()

{

preLastPlayerPosition = GameController._instance.lastPlayerPosition;

}

void Update()

{

if(GameController._instance.lastPlayerPosition != preLastPlayerPosition)

{

alertPosition = GameController._instance.lastPlayerPosition;

preLastPlayerPosition = GameController._instance.lastPlayerPosition;

}

}

public void OnTriggerStay(Collider other)

{

if(other.tag == Tags.player)

{

Vector3 forward = transform.forward;

Vector3 playerDir = other.transform.position - transform.position;

float temp = Vector3.Angle(forward, playerDir);

RaycastHit hitInfo;

bool res = Physics.Raycast(transform.position + Vector3.up, other.transform.position - transform.position, out hitInfo);

if(temp < 0.5f * fieldOfView && (res == false||hitInfo.collider.tag == Tags.player))

{

playerInSight = true;

alertPosition = other.transform.position;

GameController._instance.SeePlayer(other.transform);

}

else

{

playerInSight = false;

}

//Check whether the enemy can hear the player's footsteps; the sound travels along a NavMesh path around obstacles

if (playerAnim.GetCurrentAnimatorStateInfo(0).IsName("Locomotion"))

{

NavMeshPath path = new NavMeshPath();

if (navAgent.CalculatePath(other.transform.position, path))

{

Vector3[] wayPoints = new Vector3[path.corners.Length + 2];

wayPoints[0] = transform.position;

wayPoints[wayPoints.Length - 1] = other.transform.position;

for(int i = 0; i < path.corners.Length; i++)

{

wayPoints[i + 1] = path.corners[i];

}

float length = 0;

for (int i = 1; i < wayPoints.Length; i++)

{

length += (wayPoints[i] - wayPoints[i - 1]).magnitude;

}

if(length < collider.radius)//the enemy hears the sound if the path is shorter than the trigger's radius

{

alertPosition = other.transform.position;

}

}

}

}

}

public void OnTriggerExit(Collider other)

{

if (other.tag == Tags.player)

{

playerInSight = false;

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class HoverPad : MonoBehaviour

{

public float hoverForce;

void OnTriggerEnter(Collider other)

{

Debug.Log("Object entered the trigger");

}

void OnTriggerStay(Collider other)

{

other.GetComponent<Rigidbody>().AddForce(Vector3.up * hoverForce, ForceMode.Acceleration);

}

void OnTriggerExit(Collider other)

{

Debug.Log("Object exited the trigger");

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class VoxAmin : MonoBehaviour

{

public AnimationCurve ac;

Vector3 s;

public float playSpeed = 3f;

private float timeOffet = 0;

// Start is called before the first frame update

void Start()

{

s = transform.localScale;

timeOffet = Random.value;

}

// Update is called once per frame

void Update()

{

timeOffet += Time.deltaTime;

float r = ac.Evaluate(timeOffet * playSpeed);

transform.localScale = new Vector3(s.x, s.y * r, s.z);

}

}

<file_sep>using System;

using System.Collections.Generic;

using System.Text;

namespace 观察者设计模式_猫捉老鼠

{

class Mouse

{

private string name;

private string color;

public Mouse(string name, string color,Cat cat)

{

this.name = name;

this.color = color;

cat.catCome += this.RunAway; //register this mouse's RunAway method with the cat

}

public void RunAway()

{

Console.WriteLine(color + "的老鼠" + name + "说:老猫来了,快跑");

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class Biker : MonoBehaviour

{

private Animator anim;

// Start is called before the first frame update

void Start()

{

anim = GetComponent<Animator>();

}

// Update is called once per frame

void Update()

{

float v = Input.GetAxisRaw("Vertical");

anim.SetInteger("Vertical", (int)v);

//transform.Translate(Vector3.forward * v * Time.deltaTime * 4);

}

}

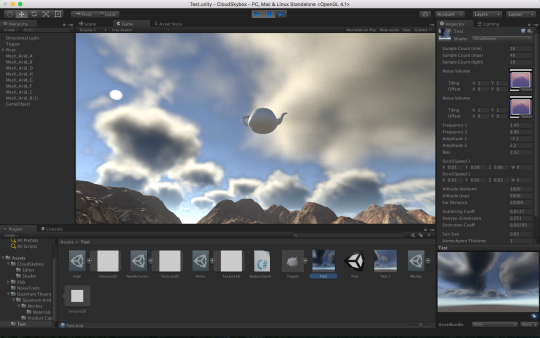

<file_sep>CloudSkybox

===========

*CloudSkybox* is an extension for Unity's default procedural skybox shader

that draws clouds with a volumetric rendering technique.

System Requirements

-------------------

- Unity 5.3 or later

- A GPU which supports SM 3.0

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.Playables;

public class Player : MonoBehaviour

{

private Animator anim;

private int speedID = Animator.StringToHash("Speed");

private int isSpeedupID = Animator.StringToHash("IsSpeedup");

private int horizontalID = Animator.StringToHash("Horizontal");

private int speedRotateID = Animator.StringToHash("SpeedRotate");

private int speedZID = Animator.StringToHash("SpeedZ");

private int vaultID = Animator.StringToHash("Vault");

private int sliderID = Animator.StringToHash("Slider");

private int colliderID = Animator.StringToHash("Collider");

private int isHoldLogID = Animator.StringToHash("IsHoldLog");

private Vector3 matchTarget = Vector3.zero;

private CharacterController characterController;

public GameObject unityLog = null;

public Transform rightHand;

public Transform leftHand;

public PlayableDirector director;

// Start is called before the first frame update

void Start()

{

anim = GetComponent<Animator>();

characterController = GetComponent<CharacterController>();

//unityLog = transform.Find("Unity_Log").gameObject;

}

// Update is called once per frame

void Update()

{

anim.SetFloat(speedZID, Input.GetAxis("Vertical") * 4.1f);

anim.SetFloat(speedRotateID, Input.GetAxis("Horizontal") * 126);

//anim.SetFloat(speedID, Input.GetAxis("Vertical")*4.1f);

//anim.SetFloat(horizontalID, Input.GetAxis("Horizontal"));

//if (Input.GetKeyDown(KeyCode.LeftShift))

//{

// anim.SetBool(isSpeedupID, true);

//}

//if (Input.GetKeyUp(KeyCode.LeftShift))

//{

// anim.SetBool(isSpeedupID, false);

//}

ProcessVault();

ProcessSlider();

//if(anim.GetFloat(colliderID)>0.5f)

//{

// characterController.enabled = false;

//}

//else

//{

// characterController.enabled = true;

//}

characterController.enabled = anim.GetFloat(colliderID) < 0.5f;

}

private void ProcessVault()

{

bool isVault = false;

if (anim.GetFloat(speedZID) > 3 && anim.GetCurrentAnimatorStateInfo(0).IsName("Locomotion"))

{

RaycastHit hit;

if (Physics.Raycast(transform.position + Vector3.up * 0.3f, transform.forward, out hit, 4f))

{

if (hit.collider.tag == "Obstacle")

{

Vector3 point = hit.point;

point.y = hit.collider.transform.position.y + hit.collider.bounds.size.y + 0.07f;

matchTarget = point;

isVault = true;

}

}

}

anim.SetBool(vaultID, isVault);

if (anim.GetCurrentAnimatorStateInfo(0).IsName("Vault") && anim.IsInTransition(0) == false)

{

anim.MatchTarget(matchTarget, Quaternion.identity, AvatarTarget.LeftHand, new MatchTargetWeightMask(Vector3.one, 0), 0.32f, 0.4f);

}

}

private void ProcessSlider()

{

bool isSlider = false;

if (anim.GetFloat(speedZID) > 3 && anim.GetCurrentAnimatorStateInfo(0).IsName("Locomotion"))

{

RaycastHit hit;

if (Physics.Raycast(transform.position + Vector3.up * 1.5f, transform.forward, out hit, 3f))

{

if (hit.collider.tag == "Obstacle")

{

if (hit.distance > 2)

{

Vector3 point = hit.point;

point.y = 0;

matchTarget = point + transform.forward * 2;

isSlider = true;

}

}

}

}

anim.SetBool(sliderID, isSlider);

if (anim.GetCurrentAnimatorStateInfo(0).IsName("Slider") && anim.IsInTransition(0) == false)

{

anim.MatchTarget(matchTarget, Quaternion.identity, AvatarTarget.Root, new MatchTargetWeightMask(new Vector3(1,0,1), 0), 0.17f, 0.67f);

}

}

private void OnTriggerEnter(Collider other)

{

if(other.tag == "Log")

{

Destroy(other.gameObject);

CarryWood();

}

if(other.tag == "Playable")

{

director.Play();

}

}

void CarryWood()

{

unityLog.SetActive(true);

anim.SetBool(isHoldLogID, true);

}

private void OnAnimatorIK(int layerIndex)

{

if(layerIndex == 1)

{

int weight = anim.GetBool(isHoldLogID) ? 1 : 0;

anim.SetIKPosition(AvatarIKGoal.LeftHand, leftHand.position);

anim.SetIKRotation(AvatarIKGoal.LeftHand, leftHand.rotation);

anim.SetIKPositionWeight(AvatarIKGoal.LeftHand, weight);

anim.SetIKRotationWeight(AvatarIKGoal.LeftHand, weight);

anim.SetIKPosition(AvatarIKGoal.RightHand, rightHand.position);

anim.SetIKRotation(AvatarIKGoal.RightHand, rightHand.rotation);

anim.SetIKPositionWeight(AvatarIKGoal.RightHand, weight);

anim.SetIKRotationWeight(AvatarIKGoal.RightHand, weight);

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using System.Net;

using System.Net.Sockets;

using System.Text;

using System.Threading;

using UnityEngine;

using UnityEngine.UI;

public class ChatManager : MonoBehaviour

{

public string ipaddress = "192.168.3.11";

public int port = 7788;

public InputField textInput;

public Text chatText;

private Socket clientSocket;

private Thread t;

private byte[] data = new byte[1024];

private string message = ""; //message buffer

// Start is called before the first frame update

void Start()

{

ConnectToServer();

}

// Update is called once per frame

void Update()

{

if(message != null && message != "")

{

chatText.text += message + "\n";

message = "";

}

}

void ConnectToServer()

{

clientSocket = new Socket(AddressFamily.InterNetwork, SocketType.Stream, ProtocolType.Tcp);

clientSocket.Connect(new IPEndPoint(IPAddress.Parse(ipaddress), port));

//Create a new thread to receive messages

t = new Thread(ReceiveMessage);

t.Start();

}

void ReceiveMessage()

{

while (true)

{

if (clientSocket.Connected == false)

break;

int length = clientSocket.Receive(data);

if (length == 0) //Receive returns 0 once the server has closed the connection

break;

message = Encoding.UTF8.GetString(data, 0, length);

print(message);

//chatText.text += "\n" + message;

}

}

void SendMessage(string message)

{

byte[] data = Encoding.UTF8.GetBytes(message);

clientSocket.Send(data);

}

public void OnSendButtonClick()

{

string value = textInput.text;

SendMessage(value);

textInput.text = "";

}

void OnDestroy()

{

clientSocket.Close(); //close the connection

}

}

<file_sep>using System;

namespace HighLevel

{

class Program

{

static void CommonSort<T>(T[] sortArray, Func<T,T,bool> compareMethod)

{

bool swapped = true;

do

{

swapped = false;

for (int i = 0; i < sortArray.Length - 1; i++)

{

if (compareMethod(sortArray[i],sortArray[i + 1]))

{

T temp = sortArray[i];

sortArray[i] = sortArray[i + 1];

sortArray[i + 1] = temp;

swapped = true;

}

}

} while (swapped);

}

static void Main(string[] args)

{

Employee[] employees = new Employee[]

{

new Employee("gh",35),

new Employee("re",65),

new Employee("df",24),

new Employee("yhu",58),

new Employee("fg",124),

new Employee("fc",69)

};

CommonSort<Employee>(employees, Employee.Compare);

foreach(Employee em in employees)

{

Console.WriteLine(em.ToString());

}

Console.ReadKey();

}

}

}

<file_sep>using System;

using System.Collections.Generic;

using System.Text;

namespace json操作

{

class Player

{

public string Name { get; set; }

public int Level { get; set; }

public int Age { get; set; }

public List<Skill> SkillList { get; set; }

public override string ToString()

{

return string.Format("Name:{0},Level:{1},Age:{2},SkillList:{3}", Name,Level,Age,SkillList);

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.UI;

public class ItemUI : MonoBehaviour

{

//public Image ItemImage;

//public void UpdateImage(Sprite s)

//{

// ItemImage.sprite = s; //update the image

//}

public Text ItemName;

public void UpdateItem(string name)

{

ItemName.text = name;

}

}

<file_sep>using System;

using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.EventSystems;

public class GridUI : MonoBehaviour, IPointerEnterHandler, IPointerExitHandler,IBeginDragHandler,IDragHandler,IEndDragHandler

{

#region Enter&&Exit

public static Action<Transform> OnEnter;

public static Action OnExit;

public void OnPointerEnter(PointerEventData eventData) //fires when the pointer enters

{

if(eventData.pointerEnter.tag == "Grid") //check whether the object under the pointer (found by raycast) is tagged Grid

{

if (OnEnter!=null)

OnEnter(transform); //pass this grid slot's transform

}

}

public void OnPointerExit(PointerEventData eventData) //fires when the pointer leaves

{

if (eventData.pointerEnter.tag == "Grid")

{

if (OnExit != null)

OnExit();

}

}

#endregion

public static Action<Transform> OnLeftBeginDrag;

public static Action<Transform,Transform> OnLeftEndDrag; //arguments are the slot the drag started from and the slot it ended on

public void OnBeginDrag(PointerEventData eventData)

{

if(eventData.button == PointerEventData.InputButton.Left) //left mouse button pressed

{

if (OnLeftBeginDrag != null)

OnLeftBeginDrag(transform);

}

}

public void OnDrag(PointerEventData eventData)

{

}

public void OnEndDrag(PointerEventData eventData)

{

if(eventData.button == PointerEventData.InputButton.Left) //left mouse button released

{

if (OnLeftEndDrag != null)

{

if(eventData.pointerEnter == null) //no UI element under the pointer

OnLeftEndDrag(transform,null);

else

OnLeftEndDrag(transform, eventData.pointerEnter.transform);

}

}

}

}

<file_sep>using System;

using System.Collections.Generic;

using System.IO;

using System.Xml;

namespace _8_xml操作

{

class Program

{

static void Main(string[] args)

{

List<Skill> skillList = new List<Skill>(); //collection of parsed skill records

XmlDocument xmlDoc = new XmlDocument(); //XmlDocument is the class used to parse XML documents

//xmlDoc.Load("skill.txt");

xmlDoc.LoadXml(File.ReadAllText("skill.txt"));

XmlNode rootNode = xmlDoc.FirstChild;

XmlNodeList skillNodeList = rootNode.ChildNodes;

foreach(XmlNode skillNode in skillNodeList)

{

Skill skill = new Skill();

XmlNodeList fieldNodeList = skillNode.ChildNodes;

foreach(XmlNode fieldNode in fieldNodeList)

{

if(fieldNode.Name == "id")

{

int id = Int32.Parse(fieldNode.InnerText);

skill.Id = id;

}else if(fieldNode.Name == "name")

{

string name = fieldNode.InnerText;

skill.Name = name;

skill.Lang = fieldNode.Attributes[0].Value;

}

else

{

skill.Damage = Int32.Parse(fieldNode.InnerText);

}

}

skillList.Add(skill);

}

foreach(Skill skill in skillList)

{

Console.WriteLine(skill);

}

Console.ReadKey();

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.SceneManagement;

public class PlayerHealth : MonoBehaviour

{

private Animator anim;

public float hp = 100f;

void Awake()

{

anim = GetComponent<Animator>();

}

public void TakeDamage(float damage)

{

hp -= damage;

if(hp <= 0)

{

anim.SetBool("Dead", true);

StartCoroutine(ReloadScene());

}

}

IEnumerator ReloadScene()

{

yield return new WaitForSeconds(4f);

SceneManager.LoadScene(0);

}

}

<file_sep>using System;

namespace _01背包问题_递归实现_带备忘的自顶向下法_

{

class Program

{

public static int[,] result = new int[11, 4];

static void Main(string[] args)

{

int m; //knapsack capacity

int[] w = { 0, 3, 4, 5 }; //weight of each item

int[] p = { 0, 4, 5, 6 }; //value of each item

Console.WriteLine(UpDown(10, 3, w, p));

Console.WriteLine(UpDown(3, 3, w, p));

Console.WriteLine(UpDown(4, 3, w, p));

Console.WriteLine(UpDown(5, 3, w, p));

Console.WriteLine(UpDown(7, 3, w, p));

Console.ReadKey();

}

public static int UpDown(int m, int i, int[] w, int[] p) //returns the maximum value that fits in capacity m using the first i items

{

if (i == 0 || m == 0) return 0;

if (result[m,i] != 0)

{

return result[m, i];

}

if (w[i] > m)

{

result[m,i] = UpDown(m, i - 1, w, p);

return result[m, i];

}

else

{

int maxValue1 = UpDown(m - w[i], i - 1, w, p) + p[i];

int maxValue2 = UpDown(m, i - 1, w, p);

if (maxValue1 > maxValue2)

{

result[m, i] = maxValue1;

}

else

{

result[m, i] = maxValue2;

}

return result[m, i];

}

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class KnapsackManager : MonoBehaviour

{

private static KnapsackManager _instance;

public static KnapsackManager Instance { get { return _instance; } }

public GridPanelUI GridPanelUI;

public TooltipUI TooltipUI;

public DragItemUI DragItemUI;

private bool isShow = false;

private bool isDrag = false;

public Dictionary<int, Item> ItemList { get; private set; }

void Awake()

{

_instance = this; //singleton pattern

Load();

GridUI.OnEnter += GridUI_OnEnter;

GridUI.OnExit += GridUI_OnExit;

GridUI.OnLeftBeginDrag += GridUI_OnLeftBeginDrag;

GridUI.OnLeftEndDrag += GridUI_OnLeftEndDrag;

}

void Update()

{

Vector2 position;

//Convert the mouse's screen position to a local position inside the KnapsackUI rectangle and assign it to position

RectTransformUtility.ScreenPointToLocalPointInRectangle(GameObject.Find("KnapsackUI").transform as RectTransform, Input.mousePosition, null, out position);

if (isDrag)

{

DragItemUI.Show(); //show the separately created DragItemUI

DragItemUI.SetLocalPosition(position);

}

else if (isShow)

{

TooltipUI.Show();

TooltipUI.SetLocalPosition(position); //position the TooltipUI while it is shown

}

}

public void StoreItem(int itemId)

{

if (!ItemList.ContainsKey(itemId))

{

return;

}

Transform emptyGrid = GridPanelUI.GetEmptyGrid(); //get an empty grid slot

if(emptyGrid == null)

{

Debug.LogWarning("背包已满!");

return;

}

Item temp = ItemList[itemId]; //look up the Item in the Dictionary by itemId

this.CreateNewItem(temp, emptyGrid);

}

private void Load()

{

ItemList = new Dictionary<int, Item>();

Weapon w2 = new Weapon(0, "牛刀", "宰牛刀", 20, 10, "", 100);

Weapon w1 = new Weapon(1, "金枪", "可以射击", 150, 100, "", 190);

Consumable c1 = new Consumable(2, "红瓶", "加血", 20, 12, "", 20,0);

Consumable c2 = new Consumable(3, "蓝瓶", "加蓝", 40, 20, "", 0, 20);

Armor a1 = new Armor(4, "头盔", "保护头部", 120, 80, "", 5, 40, 1);

Armor a2 = new Armor(5, "胸甲", "护胸", 200, 100, "", 25, 30, 12);

ItemList.Add(w1.ID, w1);

ItemList.Add(w2.ID, w2);

ItemList.Add(c1.ID, c1);

ItemList.Add(c2.ID, c2);

ItemList.Add(a1.ID, a1);

ItemList.Add(a2.ID, a2);

}

#region Event callbacks

private void GridUI_OnEnter(Transform gridTransform)

{

Item item = ItemModel.GetItem(gridTransform.name); //get the item in the grid slot under the mouse

if (item == null)

return;

TooltipUI.UpdateTooltip(item.Name);

isShow = true;

}

private void GridUI_OnExit()

{

isShow = false;

TooltipUI.Hide();

}

private void GridUI_OnLeftBeginDrag(Transform gridTransform)

{

if (gridTransform.childCount == 0)

return;

else

{

Item item = ItemModel.GetItem(gridTransform.name);

DragItemUI.UpdateItem(item.Name);

Destroy(gridTransform.GetChild(0).gameObject);

isDrag = true;

}

}

private void GridUI_OnLeftEndDrag(Transform preTransform,Transform enterTransform)

{

isDrag = false;

DragItemUI.Hide();

if(enterTransform == null) //dropped outside the UI: discard the item

{

ItemModel.DeleteItem(preTransform.name);

Debug.LogWarning("物品已扔");

}

else if(enterTransform.tag == "Grid") //dropped onto a grid slot

{

if (enterTransform.childCount == 0) //the slot is empty: move the item in

{

Item item = ItemModel.GetItem(preTransform.name);

this.CreateNewItem(item, enterTransform);

ItemModel.DeleteItem(preTransform.name);

}

else //the slot is occupied: swap the two items

{

Destroy(enterTransform.GetChild(0).gameObject);

Item preGridItem = ItemModel.GetItem(preTransform.name);

Item enterGridItem = ItemModel.GetItem(enterTransform.name);

this.CreateNewItem(preGridItem, enterTransform);

this.CreateNewItem(enterGridItem, preTransform);

}

}

else //dropped in a gap between slots: restore the item

{

Item item = ItemModel.GetItem(preTransform.name);

this.CreateNewItem(item, preTransform);

}

}

#endregion

private void CreateNewItem(Item item,Transform parent)

{

GameObject itemPrefab = Resources.Load<GameObject>("Prefabs/Item"); //load the prefab

GameObject itemGo = Instantiate(itemPrefab); //instantiate the prefab

itemGo.GetComponent<ItemUI>().UpdateItem(item.Name); //set the name on the instance rather than on the shared prefab asset

itemGo.transform.SetParent(parent); //parent the new object to the grid slot

itemGo.transform.localPosition = Vector3.zero; //local position relative to the parent is zero

itemGo.transform.localScale = Vector3.one; //local scale is one

ItemModel.StoreItem(parent.name, item); //store the data

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class InputDetector : MonoBehaviour

{

void Update()

{

if (Input.GetMouseButtonDown(2))

{

int index = Random.Range(0, 6);

KnapsackManager.Instance.StoreItem(index);

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class Camera : MonoBehaviour

{

public Transform player;

//public float smoothTime = 0.3f;

//private Vector3 velocity = Vector3.zero;

private Vector3 offset = Vector3.zero;

void Start()

{

offset = transform.position - player.position;

}

void Update()

{

transform.position = player.transform.position + offset;

}

/*void Update()

{ //gradually move the position toward the target position

transform.position = Vector3.SmoothDamp(transform.position,player.position,ref velocity,smoothTime);

}*/

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class DeactivateMe : MonoBehaviour

{

void Awake()

{

gameObject.SetActive(false);

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.AI;

public class EnemyAnimation : MonoBehaviour

{

public float speedDampTime = 0.3f;

public float anglarSpeedDampTime = 0.3f;

private NavMeshAgent navAgent;

private Animator anim;

private EnemySight sight;

private PlayerHealth health;

void Awake()

{

navAgent = GetComponent<NavMeshAgent>();

anim = GetComponent<Animator>();

sight = GetComponent<EnemySight>();

health = GameObject.FindGameObjectWithTag(Tags.player).GetComponent<PlayerHealth>();

}

// Update is called once per frame

void Update()

{

if(navAgent.desiredVelocity == Vector3.zero)

{

anim.SetFloat("Speed", 0,speedDampTime,Time.deltaTime);

anim.SetFloat("AnglarSpeed", 0,anglarSpeedDampTime,Time.deltaTime);

}

else

{

float angle = Vector3.Angle(transform.forward, navAgent.desiredVelocity);

float angleRad = 0;

if(angle > 90)

{

anim.SetFloat("Speed", 0, speedDampTime, Time.deltaTime);

}

else

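{//Project the desired velocity onto the robot's facing direction to get the current speed, giving a smooth transition; the robot walks along an arc and gradually accelerates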

Vector3 projection = Vector3.Project(navAgent.desiredVelocity, transform.forward);

anim.SetFloat("Speed", projection.magnitude, speedDampTime, Time.deltaTime);

}

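angleRad = angle * Mathf.Deg2Rad;//convert degrees to radians

//Steer the robot left or right. Cross follows the left-hand rule: with transform.forward as the thumb

//and navAgent.desiredVelocity as the index finger, it returns the vector the middle finger points along

Vector3 crossRes = Vector3.Cross(transform.forward, navAgent.desiredVelocity);

if(crossRes.y < 0)//crossRes.y < 0 means the desired direction lies to the left, so the angle is negated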

{

angleRad = -angleRad;

}

anim.SetFloat("AnglarSpeed", angleRad, anglarSpeedDampTime, Time.deltaTime);

navAgent.nextPosition = transform.position;

}

if (health.hp > 0)

{

anim.SetBool("PlayerInSight", sight.playerInSight);

}

else

{

anim.SetBool("PlayerInSight", false);

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class Player : MonoBehaviour

{

public float moveSpeed = 3;

public float rotateSpeed = 7;

public bool hasKey = false;

public GameObject ButtonUp;

public GameObject ButtonDown;

public GameObject ButtonRight;

public GameObject ButtonLeft;

private Animator anim;

private AudioSource audio;

private float h = 0;

private float v = 0;

private OnButtonPressed Up;

private OnButtonPressed Down;

private OnButtonPressed Right;

private OnButtonPressed Left;

void Awake()

{

anim = GetComponent<Animator>();

audio = GetComponent<AudioSource>();

Up = ButtonUp.GetComponent<OnButtonPressed>();

Down = ButtonDown.GetComponent<OnButtonPressed>();

Right = ButtonRight.GetComponent<OnButtonPressed>();

Left = ButtonLeft.GetComponent<OnButtonPressed>();

}

void Update()

{

if (Input.GetKey(KeyCode.LeftShift))

{

anim.SetBool("Sneak",true);

}

else

{

anim.SetBool("Sneak", false);

}

//float h = Input.GetAxis("Horizontal");

//float v = Input.GetAxis("Vertical");

if (Up.isDown && !Down.isDown)

{

v = 1.0f;

}

else if (!Up.isDown && Down.isDown)

{

v = -1.0f;

}

else { v = 0; }

if (Right.isDown&&!Left.isDown)

{

h = 1.0f;

}else if(!Right.isDown && Left.isDown)

{

h = -1.0f;

}

else { h = 0; }

if (Mathf.Abs(h) > 0.1 || Mathf.Abs(v) > 0.1)

{

float newSpeed = Mathf.Lerp(anim.GetFloat("Speed"), 5.6f, moveSpeed * Time.deltaTime);

anim.SetFloat("Speed", newSpeed);

Vector3 targetDir = new Vector3(h, 0, v);

Quaternion newRotation = Quaternion.LookRotation(targetDir, Vector3.up);

transform.rotation = Quaternion.Lerp(transform.rotation, newRotation, rotateSpeed * Time.deltaTime);

}

else

{

anim.SetFloat("Speed", 0);

}

if (anim.GetCurrentAnimatorStateInfo(0).IsName("Locomotion"))

{

PlayFootMusic();

}

else

{

StopFootMusic();

}

}

private void PlayFootMusic()

{

if (!audio.isPlaying)

{

audio.Play();

}

}

private void StopFootMusic()

{

if (audio.isPlaying)

{

audio.Stop();

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class FController : MonoBehaviour

{

public Transform player;

public float rotSpeed = 5f;

public Vector3 vc;

public GameObject blood;

// Start is called before the first frame update

void Start()

{

player = LevelManager.lm.player;

}

// Update is called once per frame

void Update()

{

Vector3 targetDir = player.position - transform.position;

float step = rotSpeed * Time.deltaTime;

Vector3 newDir = Vector3.RotateTowards(transform.forward, targetDir, step, 0);

transform.rotation = Quaternion.LookRotation(newDir);

transform.Translate(Vector3.forward * Time.deltaTime * 8);

}

public void Hurt()

{

Destroy(gameObject);

Instantiate(blood, transform.position, Quaternion.identity);

}

}

<file_sep>using System;

namespace 钢条切割问题_自底向上法_动态规划_

{

class Program

{

static void Main(string[] args)

{

int[] result = new int[11]; // stores the solutions to the subproblems

int[] p = { 0, 1, 5, 8, 9, 10, 17, 17, 20, 24, 30 }; // index is the rod length, value is its price

Console.WriteLine(ButtomUp(0, p, result));

Console.WriteLine(ButtomUp(1, p, result));

Console.WriteLine(ButtomUp(2, p, result));

Console.WriteLine(ButtomUp(3, p, result));

Console.WriteLine(ButtomUp(4, p, result));

Console.WriteLine(ButtomUp(5, p, result));

Console.WriteLine(ButtomUp(6, p, result));

Console.WriteLine(ButtomUp(7, p, result));

Console.WriteLine(ButtomUp(8, p, result));

Console.WriteLine(ButtomUp(9, p, result));

Console.WriteLine(ButtomUp(10, p, result));

Console.ReadKey();

}

public static int ButtomUp(int n,int[] p,int[] result)

{

for (int i = 1; i <= n; i++)

{

// compute the maximum revenue for a rod of length i

int tempMaxPrice = -1;

for (int j = 1; j <= i; j++)

{

int maxPrice = p[j] + result[i - j];

if (maxPrice > tempMaxPrice)

{

tempMaxPrice = maxPrice;

}

}

result[i] = tempMaxPrice;

}

return result[n];

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class RayCasting : MonoBehaviour

{

public float distance;

void Update()

{

RaycastHit hit;

Debug.DrawRay(transform.position, Vector3.down * distance);

if (Physics.Raycast(transform.position, Vector3.down, out hit, distance))

{

Destroy(hit.collider.gameObject);

}

}

}

<file_sep>using System;

using System.Collections.Generic;

using System.Text;

namespace _8_xml操作

{

class Skill

{

public int Id { get; set; }

public string Name { get; set; }

public string Lang { get; set; }

public int Damage { get; set; }

public override string ToString()

{

return string.Format("Id:{0},Name:{1},Lang:{2},Damage:{3}",Id,Name,Lang,Damage);

}

}

}

<file_sep>using System;

using System.Collections.Generic;

using System.Linq;

namespace 活动选择问题_动态规划思路_自底向上_

{

class Program

{

static void Main(string[] args)

{

int[] s = { 0, 1, 3, 0, 5, 3, 5, 6, 8, 8, 2, 12, 24 };

int[] f = { 0, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 24 };

List<int>[,] result = new List<int>[13, 13]; // c[i, j]: stores the final results; cells default to null

for (int m = 0; m < 13; m++)

{

for(int n = 0; n < 13; n++)

{

result[m, n] = new List<int>(); // initialize every cell to an empty list

}

}

for(int j = 0; j < 13; j++)

{

for (int i = j - 2; i >= 0; i--) // iterate i downward so result[number, j] (number > i) is already filled in when it is read

{

List<int> sij = new List<int>();

for(int number = 1;number < s.Length - 1; number++)

{

if (s[number] >= f[i] && f[number] <= s[j])

{

sij.Add(number); // activity k is compatible with both i and j, so add it to sij

}

}

if (sij.Count > 0)

{

int maxCount = 0;

List<int> tempList = new List<int>();

foreach(int number in sij)

{

int count = result[i, number].Count + result[number, j].Count + 1;

if (count > maxCount) // track the maximum count

{

maxCount = count;

tempList = result[i, number].Union<int>(result[number, j]).ToList<int>(); // union of the two sub-solutions

tempList.Add(number); // then add number itself, giving the union of all three parts

}

}

result[i, j] = tempList;

}

}

}

List<int> l = result[0, 12];

foreach(int temp in l)

{

Console.WriteLine(temp);

}

Console.ReadKey();

}

}

}

<file_sep>using System;

using System.Collections.Generic;

using System.Text;

namespace 二叉排序树_链式存储

{

class BSNode

{

public BSNode LeftChild { get; set; }

public BSNode RightChild { get; set; }

public BSNode Parent { get; set; }

public int Data { get; set; }

public BSNode()

{

}

public BSNode(int item)

{

this.Data = item;

}

}

}

<file_sep>using LitJson;

using System;

using System.Collections.Generic;

using System.IO;

namespace json操作

{

class Program

{

static void Main(string[] args)

{

//List<Skill> skillList = new List<Skill>();

//JsonData jsonData = JsonMapper.ToObject(File.ReadAllText("json技能信息.txt")); // parse the JSON text with JsonMapper; a JsonData represents an array or an object, here an array

//foreach (JsonData temp in jsonData) // here temp represents one object

//{

// Skill skill = new Skill();

// JsonData idValue = temp["id"]; // look up the value by key through the string indexer

// JsonData nameValue = temp["name"];

// JsonData damageValue = temp["damage"];

// int id = Int32.Parse(idValue.ToString());

// int damage = Int32.Parse(damageValue.ToString());

// skill.id = id;

// skill.damage = damage;

// skill.name = nameValue.ToString();

// skillList.Add(skill);

//}

//foreach(var temp in skillList)

//{

// Console.WriteLine(temp);

//}

//Skill[] skillArray = JsonMapper.ToObject<Skill[]>(File.ReadAllText("json技能信息.txt"));

//foreach(var temp in skillArray)

//{

// Console.WriteLine(temp);

//}

//List<Skill> skillList = JsonMapper.ToObject<List<Skill>>(File.ReadAllText("json技能信息.txt"));

//foreach (var temp in skillList)

//{

// Console.WriteLine(temp);

//}

//Player p = JsonMapper.ToObject<Player>(File.ReadAllText("player.txt"));

//Console.WriteLine(p);

//foreach(var temp in p.SkillList)

//{

// Console.WriteLine(temp);

//}

Player p = new Player();

p.Name = "花千骨";

p.Level = 100;

p.Age = 18;

string json = JsonMapper.ToJson(p);

Console.WriteLine(json);

Console.ReadKey();

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class AddTorqueExample : MonoBehaviour

{

public float amount = 50f;

private Rigidbody rb;

void Start()

{

rb = GetComponent<Rigidbody>();

}

void FixedUpdate()

{

float h = Input.GetAxis("Horizontal") * amount * Time.deltaTime;

float v = Input.GetAxis("Vertical") * amount * Time.deltaTime;

rb.AddTorque(transform.up * h);

rb.AddTorque(transform.right * v);

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.SceneManagement;

public class Lift : MonoBehaviour

{

public Transform outerLeft;

public Transform innerLeft;

public Transform outerRight;

public Transform innerRight;

public float liftUpTime = 3f;

private float liftUpTimer = 0;

private bool isIn = false;

private float gameWinTimer = 0;

// Update is called once per frame

void Update()

{

innerLeft.position = new Vector3(outerLeft.position.x, innerLeft.position.y, innerLeft.position.z);

innerRight.position = new Vector3(outerRight.position.x, innerRight.position.y, innerRight.position.z);

if (isIn)

{

liftUpTimer += Time.deltaTime;

if(liftUpTimer > liftUpTime)

{

transform.Translate(Vector3.up * Time.deltaTime);

gameWinTimer += Time.deltaTime;

if(gameWinTimer > 1f)

{

SceneManager.LoadScene(0);

}

}

}

}

void OnTriggerStay(Collider other)

{

if (other.tag == Tags.player)

{

isIn = true;

}

}

void OnTriggerExit(Collider other)

{

if (other.tag == Tags.player)

{

isIn = false;

liftUpTimer = 0;

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.UI;

public class ControlSound : MonoBehaviour

{

public AudioSource sound;

public Slider sd;

public void Con_Sound()

{

sound.volume = sd.value;

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class BloodParticle : MonoBehaviour

{

public ParticleSystem ptc;

public void StopPtc()

{

ptc.Stop();

GetComponent<BoxCollider>().enabled = false;

Destroy(gameObject, 2);

LevelManager.lm.MakePlane();

}

}

<file_sep>using System;

using System.Collections;

using System.Collections.Generic;

namespace _003_队列

{

class Program

{

static void Main(string[] args)

{

//Queue<int> queue = new Queue<int>();

//IQueue<int> queue = new SeqQueue<int>();

IQueue<int> queue = new LinkQueue<int>();

queue.Enqueue(12); // front of the queue

queue.Enqueue(45);

queue.Enqueue(67);

queue.Enqueue(89); // back of the queue

Console.WriteLine(queue.Count);

int i = queue.Dequeue(); // remove and return the front element

Console.WriteLine(i);

Console.WriteLine(queue.Count);

Console.WriteLine(queue.Peek()); // return the front element without removing it

Console.WriteLine(queue.Count);

queue.Clear();

Console.WriteLine(queue.Count);

Console.ReadKey();

}

}

}

<file_sep>using System;

using System.Collections.Generic;

using System.Text;

namespace json操作

{

class Skill

{

public int id;

public int damage;

public string name;

public override string ToString()

{

return string.Format("Id:{0},Damage:{1},Name:{2}", id, damage, name);

}

}

}

<file_sep>using System;

using System.Collections;

using System.Collections.Generic;

namespace _002_栈

{

class Program

{

static void Main(string[] args)

{

//Stack<char> stack = new Stack<char>();

// Use the custom array-based (sequential) stack:

//IStackDS<char> stack = new SeqStack<char>();

// Use the custom linked stack:

IStackDS<char> stack = new LinkStack<char>();

stack.Push('a');

stack.Push('b');

stack.Push('c');

Console.WriteLine(stack.Count);

Console.WriteLine(stack.Pop()); // remove and return the top element

Console.WriteLine(stack.Count);

Console.WriteLine(stack.Peek()); // return the top element without removing it

Console.WriteLine(stack.Count);

stack.Clear();

Console.WriteLine(stack.Count);

Console.ReadKey();

}

}

}

<file_sep>using System;

namespace 钱币找零问题_贪心算法

{

class Program

{

static void Main(string[] args)

{

int[] count = { 3, 0, 2, 1, 0, 3, 5 };

int[] amount = { 1, 2, 5, 10, 20, 50, 100 };

int[] result = Change(320, count, amount);

foreach (var i in result)

{

Console.Write(i + " ");

}

Console.ReadKey();

}

public static int[] Change(int k,int[] count,int[] amount) // k is the amount of money to change

{

if (k == 0) return new int[amount.Length + 1];

int total = 0;

int index = amount.Length - 1; // index of the denomination currently being used

int[] result = new int[amount.Length + 1]; // how many notes of each denomination are used

while (true)

{

if(k <= 0 || index <= -1) break;

if(k > count[index] * amount[index]) // not enough notes of this denomination to cover k

{

result[index] = count[index];

k -= count[index] * amount[index];

}

else // enough notes of this denomination

{

result[index] = k / amount[index];

k -= result[index] * amount[index];

}

index--;

}

result[amount.Length] = k; // the last slot of result stores the remaining amount that could not be changed

return result;

}

}

}

<file_sep>using System;

namespace _01背包问题_自底向上法_动态规划_

{

class Program

{

static void Main(string[] args)

{

int m; // knapsack capacity

int[] w = { 0, 3, 4, 5 }; // weight of each item

int[] p = { 0, 4, 5, 6 }; // value of each item

Console.WriteLine(BottomUp(10, 3, w, p));

Console.WriteLine(BottomUp(3, 3, w, p));

Console.WriteLine(BottomUp(4, 3, w, p));

Console.WriteLine(BottomUp(5, 3, w, p));

Console.WriteLine(BottomUp(7, 3, w, p));

Console.ReadKey();

}

public static int[,] result = new int[11, 4];

public static int BottomUp(int m, int i, int[] w, int[] p)

{

if (result[m, i] != 0) return result[m, i];

for (int tempM = 1; tempM <= m; tempM++)

{

for(int tempI = 1;tempI <= i; tempI++)

{

if (result[tempM, tempI] != 0) continue;

if (w[tempI] > tempM)

{

result[tempM, tempI] = result[tempM, tempI - 1];

}

else

{

int maxValue1 = result[tempM - w[tempI], tempI - 1] + p[tempI];

int maxValue2 = result[tempM, tempI - 1];

if(maxValue1 > maxValue2)

{

result[tempM, tempI] = maxValue1;

}

else

{

result[tempM, tempI] = maxValue2;

}

}

}

}

return result[m, i];

}

}

}

<file_sep>using System;

using System.Collections.Generic;

using System.Text;

namespace 观察者设计模式_猫捉老鼠

{

class Cat

{

private string name;

private string color;

public Cat(string name,string color)

{

this.name = name;

this.color = color;

}

//public void CatComing(Mouse mouse1,Mouse mouse2)

public void CatComing()

{

Console.WriteLine(color + "的猫" + name + "过来了,喵喵喵");

//mouse1.RunAway();

//mouse2.RunAway();

if (catCome != null)

catCome();

}

public event Action catCome; // declare an event

}

}

<file_sep>using System;

namespace 观察者设计模式_猫捉老鼠

{

class Program

{

static void Main(string[] args)

{

Cat cat = new Cat("加菲猫", "黄色");

Mouse mouse1 = new Mouse("米奇", "黑色",cat);

//cat.catCome += mouse1.RunAway;

Mouse mouse2 = new Mouse("唐老鸭", "红色",cat);

//cat.catCome += mouse2.RunAway;

//cat.CatComing(mouse1,mouse2);

cat.CatComing();

//cat.catCome(); // an event cannot be raised from outside its declaring class, only from inside it

Console.ReadKey();

}

}

}

<file_sep>using UnityEngine;

using System.Collections;

public class Move : MonoBehaviour

{

void Update()

{

transform.Translate(Input.GetAxis("Horizontal"), 0, Input.GetAxis("Vertical"));

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.UI;

public class TextShow : MonoBehaviour

{

public Text text;

void Start()

{

text.text = "WASD to Move\nZ to Switch\nShift to Sneak";

}

// Update is called once per frame

void Update()

{

}

}

<file_sep>using System;

using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.UI;

using UnityEngine.SceneManagement;

using UnityEngine.Networking;

using System.IO;

public class LoadGame : MonoBehaviour {

public Slider processView;

// Use this for initialization

void Start () {

LoadGameMethod();

}

// Update is called once per frame

void Update () {

}

public void LoadGameMethod()

{

StartCoroutine(LoadResourceCorotine());

StartCoroutine(StartLoading_4(2));

}

private IEnumerator StartLoading_4(int scene)

{

int displayProgress = 0;

int toProgress = 0;

AsyncOperation op = SceneManager.LoadSceneAsync(scene);

op.allowSceneActivation = false;

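while (op.progress < 0.9f)

{

toProgress = (int)(op.progress * 100); // scale before casting; (int)op.progress * 100 is always 0 in this loop

while (displayProgress < toProgress)

{

++displayProgress;

SetLoadingPercentage(displayProgress);

yield return new WaitForEndOfFrame();

}

yield return null; // always yield once per pass so the outer loop cannot spin inside a single frame

}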

toProgress = 100;

while (displayProgress < toProgress)

{

++displayProgress;

SetLoadingPercentage(displayProgress);

yield return new WaitForEndOfFrame();

}

op.allowSceneActivation = true;

}

IEnumerator LoadResourceCorotine()

{

UnityWebRequest request = UnityWebRequest.Get(@"http://localhost/fish.lua.txt");

yield return request.SendWebRequest();

string str = request.downloadHandler.text;

File.WriteAllText(@"F:\GameResources\CatchFish\PlayerGamePackage\fish.lua.txt",str);

UnityWebRequest request1 = UnityWebRequest.Get(@"http://localhost/fishDispose.lua.txt");

yield return request1.SendWebRequest();

string str1 = request1.downloadHandler.text;

File.WriteAllText(@"F:\GameResources\CatchFish\PlayerGamePackage\fishDispose.lua.txt", str1);

}

private void SetLoadingPercentage(float v)

{

processView.value = v / 100;

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class Door : MonoBehaviour

{

public bool requireKey = false;

private int count = 0;

private Animator anim;

private AudioSource audio;

void Awake()

{

anim = GetComponent<Animator>();

audio = GetComponent<AudioSource>();

}

void Update()

{

anim.SetBool("Close", count <= 0);

if (anim.IsInTransition(0))

{

if (!audio.isPlaying)

{

audio.Play();

}

}

}

void OnTriggerEnter(Collider other)

{

if (requireKey)

{

if (other.tag == Tags.player)

{

Player player = other.GetComponent<Player>();

if (player.hasKey)

{

count++;

}

}

}

else

{

if (other.tag == Tags.player)

{

count++;

}else if(other.tag == Tags.enemy && other.GetComponent<Collider>().isTrigger == false)

{

count++;

}

}

}

void OnTriggerExit(Collider other)

{

if (requireKey)

{

if (other.tag == Tags.player)

{

Player player = other.GetComponent<Player>();

if (player.hasKey)

{

count--;

}

}

}

else

{

if (other.tag == Tags.player)

{

count--;

}

else if (other.tag == Tags.enemy && other.GetComponent<Collider>().isTrigger == false)

{

count--;

}

}

}

}

<file_sep>using System;

using System.Net.Sockets;

using System.Text;

namespace tcpclient

{

class Program

{

static void Main(string[] args)

{

TcpClient client = new TcpClient("192.168.3.11", 7788); // constructing a TcpClient with a host and port connects to the server immediately

NetworkStream stream = client.GetStream(); // data is exchanged through the network stream

while (true)

{

string message = Console.ReadLine();

byte[] data = Encoding.UTF8.GetBytes(message);

stream.Write(data, 0, data.Length); // Write sends the data over the stream

}

stream.Close();

client.Close();

Console.ReadKey();

}

}

}

<file_sep>using System;

namespace 最大子数组问题_分治法

{

class Program

{

struct SubArray // describes a maximum subarray

{

public int startIndex;

public int endIndex;

public int total;

}

static void Main(string[] args)

{

int[] priceArray = { 100, 113, 110, 85, 105, 102, 86, 63, 81, 101, 94, 106, 101, 79, 94, 90, 97 };

int[] pf = new int[priceArray.Length - 1]; // day-to-day price changes

for (int i = 1; i < priceArray.Length; i++)

{

pf[i - 1] = priceArray[i] - priceArray[i - 1];

}

SubArray subArray = GetMaxSubArray(0, pf.Length - 1, pf);

Console.WriteLine(subArray.startIndex);

Console.WriteLine(subArray.endIndex);

Console.ReadKey();

}

static SubArray GetMaxSubArray(int low,int high,int[] array) // returns the maximum subarray of array within indices low..high

{

if (low == high)

{

SubArray subarray ;

subarray.startIndex = low;

subarray.endIndex = high;

subarray.total = array[low];

return subarray;

}

int mid = (low + high) / 2;

SubArray subArray1 = GetMaxSubArray(low, mid, array); // maximum subarray in the lower half

SubArray subArray2 = GetMaxSubArray(mid + 1, high, array); // maximum subarray in the upper half

// within [low, mid], find the maximum subarray of the form [i, mid]

int total1 = array[mid];

int startIndex = mid;

int totalTemp = 0;

for (int i = mid; i >= low; i--)

{

totalTemp += array[i];

if (totalTemp > total1)

{

total1 = totalTemp;

startIndex = i;

}

}

// within [mid + 1, high], find the maximum subarray of the form [mid + 1, j]

int total2 = array[mid + 1];

int endIndex = mid + 1;

totalTemp = 0;

for (int j = mid +1; j <=high; j++)

{

totalTemp += array[j];

if (totalTemp > total2)

{

total2 = totalTemp;

endIndex = j;

}

}

// compare the three cases

SubArray subArray3;

subArray3.startIndex = startIndex;

subArray3.endIndex = endIndex;

subArray3.total = total1 + total2;

if (subArray1.total >= subArray2.total&&subArray1.total >= subArray3.total)

{

return subArray1;

}

else if (subArray2.total >= subArray1.total && subArray2.total >= subArray3.total)

{

return subArray2;

}

else

{

return subArray3;

}

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class VolumetricLightController : MonoBehaviour

{

public VolumetricLight vLight;

public float targetScatter;

// Update is called once per frame

void Update()

{

vLight.ScatteringCoef = Mathf.Lerp(vLight.ScatteringCoef, targetScatter, 0.1f);

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class AlermLight : MonoBehaviour

{

public static AlermLight _instance;

public bool alermOn = false;

public float animationSpeed = 1;

private float lowIntensity = 0;

private float highIntensity = 0.5f;

private float targetIntensity;

private Light light;

// Start is called before the first frame update

void Awake()

{

targetIntensity = highIntensity;

alermOn = false;

_instance = this;

light = GetComponent<Light>();

}

// Update is called once per frame

void Update()

{

if (alermOn)

{

light.intensity = Mathf.Lerp(light.intensity, targetIntensity, Time.deltaTime * animationSpeed);

if(Mathf.Abs(light.intensity - targetIntensity) < 0.05f)

{

if(targetIntensity == highIntensity)

{

targetIntensity = lowIntensity;

}else if(targetIntensity == lowIntensity)

{

targetIntensity = highIntensity;

}

}

}

else

{

light.intensity = Mathf.Lerp(light.intensity, 0, Time.deltaTime * animationSpeed);

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class CCTVCam : MonoBehaviour

{

void OnTriggerStay(Collider other)

{

if(other.tag == Tags.player)

{

GameController._instance.SeePlayer(other.transform);

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class Laser : MonoBehaviour

{

public bool isFlicker = false;

public float onTime = 3;

public float offTime = 3;

private float timer = 0;

private Renderer renderer;

void Start()

{

renderer = GetComponent<Renderer>();

}

void Update()

{

if (isFlicker)

{

timer += Time.deltaTime;

if (renderer.enabled)

{

if(timer >= onTime)

{

renderer.enabled = false;

timer = 0;

}

}

if (!renderer.enabled)

{

if (timer >= offTime)

{

renderer.enabled = true;

timer = 0;

}

}

}

}

void OnTriggerStay(Collider other)

{

if (other.tag == Tags.player)

{

GameController._instance.SeePlayer(other.transform);

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class Keycard : MonoBehaviour

{

public AudioClip musicPickup;

void OnTriggerEnter(Collider other)

{

if(other.tag == Tags.player)

{

Player player = other.GetComponent<Player>();

player.hasKey = true;

AudioSource.PlayClipAtPoint(musicPickup, transform.position);

Destroy(gameObject);

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class VolumetricLightTrigger : MonoBehaviour

{

public VolumetricLightController vLightCon;

void OnTriggerEnter()

{

vLightCon.targetScatter = 1.0f;

}

void OnTriggerExit()

{

vLightCon.targetScatter = 0.0f;

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class LevelManager : MonoBehaviour

{

public static LevelManager lm;

public GameObject plane;

private Vector3 pos = Vector3.zero;

public Vector3 origin = new Vector3(0, -1.41f, 0);

void Awake()

{

lm = this;

}

void Start()

{

MakePlane();

}

public void MakePlane()

{

origin = origin + Vector3.forward * Random.Range(7f,15f);

Instantiate(plane, origin, plane.transform.rotation);

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using DG.Tweening;

using UnityEngine.UI;

using UnityEngine.SceneManagement;

public class View : MonoBehaviour

{

public Ctrl ctrl;

public RectTransform logoName;

public RectTransform menuUI;

public RectTransform gameUI;

public GameObject restartButton;

public GameObject gameOverUI;

public GameObject settingUI;

public GameObject rankUI;

public Text score;

public Text highScore;

public Text gameOverScore;

public Text rankScore;

public Text rankHighScore;

public Text rankNumbersGame;

private GameObject mute;

void Awake()

{

ctrl = GameObject.FindGameObjectWithTag("Ctrl").GetComponent<Ctrl>();

mute = transform.Find("Canvas/SettingUI/AudioButton/Mute").gameObject;

}

public void ShowMenu()

{

logoName.gameObject.SetActive(true);

logoName.DOMoveY(770.05f, 0.5f);

menuUI.gameObject.SetActive(true);

menuUI.DOMoveY(103.3f, 0.5f);

}

public void HideMenu()

{

logoName.DOMoveY(1050.9f, 0.5f)

.OnComplete(delegate { logoName.gameObject.SetActive(false); }); // runs when the move completes

menuUI.DOMoveY(-103.3f, 0.5f)

.OnComplete(delegate { menuUI.gameObject.SetActive(false); });

}

public void UpdateGameUI(int score, int highScore)

{

this.score.text = score.ToString();

this.highScore.text = highScore.ToString();

}

public void ShowGameUI(int score = 0,int highScore = 0)

{

this.score.text = score.ToString();

this.highScore.text = highScore.ToString();

gameUI.gameObject.SetActive(true);

gameUI.DOMoveY(781.66f, 0.5f);

}

public void HideGameUI()

{

gameUI.DOMoveY(1040.3f, 0.5f)

.OnComplete(delegate { gameUI.gameObject.SetActive(false); });

}

public void ShowRestartButton()

{

restartButton.SetActive(true);

}

public void ShowGameOverUI(int score = 0)

{

gameOverUI.SetActive(true);

gameOverScore.text = score.ToString();

}

public void HideGameOverUI()

{

gameOverUI.SetActive(false);

}

public void OnHomeButtonClick()

{

ctrl.audioManager.PlayCursor();

SceneManager.LoadScene(SceneManager.GetActiveScene().buildIndex); // reload the current scene

}

public void OnSettingButtonClick()

{

ctrl.audioManager.PlayCursor();

settingUI.SetActive(true);

}

public void SetMuteActive(bool isActive)

{

mute.SetActive(isActive);

}

public void OnSettingUIClick()

{

ctrl.audioManager.PlayCursor();

settingUI.SetActive(false);

}

//public void OnRankButtonClick()

//{

// ctrl.audioManager.PlayCursor();

// rankUI.SetActive(true);

//}

public void ShowRankUI(int score,int highScore,int numbersGame)

{

this.rankScore.text = score.ToString();

this.rankHighScore.text = highScore.ToString();

this.rankNumbersGame.text = numbersGame.ToString();

rankUI.SetActive(true);

}

public void OnRankUIClick()

{

ctrl.audioManager.PlayCursor();

rankUI.SetActive(false);

}

}

<file_sep>using System;

namespace 二叉排序树_链式存储

{

class Program

{

static void Main(string[] args)

{

BSTree tree = new BSTree();

int[] data = { 62, 58, 88, 47, 73, 99, 35, 51, 93, 37 }; // elements must be added in level order

foreach (var t in data)

{

tree.Add(t);

}

tree.MiddleTraversal();

Console.WriteLine();

Console.WriteLine(tree.Find(99));

Console.WriteLine(tree.Find(100));

tree.Delete(35);

tree.MiddleTraversal();

Console.WriteLine();

Console.ReadKey();

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class Ball : MonoBehaviour

{

void OnTriggerEnter(Collider other)

{

if(other.gameObject.tag == "Enemy")

{

other.gameObject.GetComponent<FController>().Hurt();

}

}

}

<file_sep>using System;

using System.Collections.Generic;

using System.Text;

namespace 二叉树_顺序结构存储

{ // an empty node is represented by storing -1 at its position in the array

class BiTree<T>

{

private T[] data;

private int count = 0;

public BiTree(int capacity) // capacity is the maximum number of nodes

{

data = new T[capacity];

}

public bool Add(T item)

{

if (count >= data.Length)

{

return false;

}

data[count] = item;

count++;

return true;

}

public void FirstTraversal()

{

FirstTraversal(0);

}

private void FirstTraversal(int index)

{

if (index >= count) return;

int number = index + 1; // 1-based number of the node being visited

if (data[index].Equals(-1)) return;

Console.Write(data[index] + " ");

int leftNumber = number * 2; // number of the left child

int rightNumber = number * 2 + 1; // number of the right child

FirstTraversal(leftNumber - 1);

FirstTraversal(rightNumber - 1);

}

public void MiddleTraversal()

{

MiddleTraversal(0);

}

private void MiddleTraversal(int index)

{

if (index >= count) return;

int number = index + 1; // 1-based number of the node being visited

if (data[index].Equals(-1)) return;

int leftNumber = number * 2; // number of the left child

int rightNumber = number * 2 + 1; // number of the right child

MiddleTraversal(leftNumber - 1);

Console.Write(data[index] + " ");

MiddleTraversal(rightNumber - 1);

}

public void LastTraversal()

{

LastTraversal(0);

}

private void LastTraversal(int index)

{

if (index >= count ) return;

int number = index + 1; // 1-based number of the node being visited

if (data[index].Equals(-1)) return;

int leftNumber = number * 2; // number of the left child

int rightNumber = number * 2 + 1; // number of the right child

LastTraversal(leftNumber - 1);

LastTraversal(rightNumber - 1);

Console.Write(data[index] + " ");

}

public void LayerTraversal()

{

for (int i = 0; i < count; i++)

{

if (data[i].Equals(-1)) continue;

Console.Write(data[i] + " ");

}

Console.WriteLine();

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using UnityEngine.UI;

using UnityEngine.SceneManagement;

public class Player : MonoBehaviour

{

public Rigidbody myRig;

private float keyTime = 0;

public Scrollbar myBar;

public Text score;

private float myScore = 0;

// Update is called once per frame

void Update()

{

if (Input.GetKey(KeyCode.Space))

{

keyTime += Time.deltaTime;

}

if (Input.GetKeyUp(KeyCode.Space))

{

myRig.AddForce(new Vector3(0,1,1) * 1000f * keyTime);

keyTime = 0;

}

myBar.size = keyTime;

if(transform.position.y < -20)

{

SceneManager.LoadScene(0);

}

}

private void OnTriggerEnter(Collider other)

{

//Destroy(other.gameObject);

other.GetComponent<BloodParticle>().StopPtc();

myScore++;

score.text = myScore.ToString();

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class EnemyShooting : MonoBehaviour

{

public float minDamage = 30;

private Animator anim;

private bool haveShoot = false; // whether damage has already been applied for the current shot

private PlayerHealth health;

void Awake()

{

anim = GetComponent<Animator>();

health = GameObject.FindGameObjectWithTag(Tags.player).GetComponent<PlayerHealth>();

}

// Update is called once per frame

void Update()

{

if(anim.GetFloat("Shot") > 0.5)

{

Shooting();

}

else

{

haveShoot = false;

}

}

private void Shooting()

{

if(haveShoot == false)

{

// compute the damage

float damage = minDamage + 90 - 9 * (transform.position - health.transform.position).magnitude;

health.TakeDamage(damage);

haveShoot = true;

}

}

}

<file_sep>using System;

using System.Collections.Generic;

namespace 活动选择问题_贪心算法_递归解决

{

class Program

{

static void Main(string[] args)

{

List<int> list = ActivitySelection(1, 11, 0, 24);

foreach (var temp in list)

{

Console.WriteLine(temp);

}

Console.ReadKey();

}

static int[] s = { 0, 1, 3, 0, 5, 3, 5, 6, 8, 8, 2, 12 };

static int[] f = { 0, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14 };

// Parameters: the first and last activity numbers to scan, then the start and end of the time window

public static List<int> ActivitySelection(int startActivityNumber,int endActivityNumber,int startTime,int endTime)

{

if (startActivityNumber > endActivityNumber || startTime >= endTime)

{

return new List<int>();

}

// find the compatible activity that finishes earliest (f is sorted by finish time, so the first match wins)

int tempNumber = 0;

for(int number = startActivityNumber;number <= endActivityNumber; number++)

{

if (s[number] >= startTime && f[number] <= endTime)

{

tempNumber = number;

break;

}

}

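if (tempNumber == 0) return new List<int>(); // no compatible activity was found (0 is the sentinel), so stop recursing

List<int> list = ActivitySelection(tempNumber + 1, endActivityNumber, f[tempNumber], endTime);

list.Add(tempNumber); // list holds the numbers of the selected activities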

return list;

}

}

}

<file_sep>using System;

using System.Net;

using System.Net.Sockets;

using System.Text;

namespace _6_tcplistener

{

class Program

{

static void Main(string[] args)

{

TcpListener listener = new TcpListener(IPAddress.Parse("172.16.31.10"), 7788); // TcpListener wraps a socket and creates the Socket object itself

listener.Start(); // start listening

Console.WriteLine("开始监听");

TcpClient client = listener.AcceptTcpClient(); // block until a client connects

//Console.WriteLine("一个客户端连接过来");

NetworkStream stream = client.GetStream(); // get the network stream, which carries the data sent by the client

byte[] data = new byte[1024];

while (true) {

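int length = stream.Read(data, 0, 1024); // read from the network stream into data; length is the number of bytes actually read

if (length == 0) break; // Read returns 0 once the client has closed the connection

string message = Encoding.UTF8.GetString(data, 0, length);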

Console.WriteLine("收到了消息:" + message);

}

stream.Close();

client.Close();

listener.Stop();

Console.ReadKey();

}

}

}

<file_sep>using System.Collections;

using System.Collections.Generic;

using UnityEngine;

using XLua;

using System.IO;

using UnityEngine.Networking;

public class HotFixScript : MonoBehaviour

{

private LuaEnv luaEnv;

public static Dictionary<string, GameObject> prefabDict = new Dictionary<string, GameObject>();

// Start is called before the first frame update

void Awake()

{

luaEnv = new LuaEnv();