Instructions for using TIGER-Lab/SWE-Next-14B with libraries and local apps.

- Libraries

- Transformers

How to use TIGER-Lab/SWE-Next-14B with Transformers:

```python
# Use a pipeline as a high-level helper
from transformers import pipeline

pipe = pipeline("text-generation", model="TIGER-Lab/SWE-Next-14B")
messages = [
    {"role": "user", "content": "Who are you?"},
]
pipe(messages)
```

```python
# Load model directly
from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("TIGER-Lab/SWE-Next-14B")
model = AutoModelForCausalLM.from_pretrained("TIGER-Lab/SWE-Next-14B")
messages = [
    {"role": "user", "content": "Who are you?"},
]
inputs = tokenizer.apply_chat_template(
    messages,
    add_generation_prompt=True,
    tokenize=True,
    return_dict=True,
    return_tensors="pt",
).to(model.device)

outputs = model.generate(**inputs, max_new_tokens=40)
# Decode only the newly generated tokens, skipping the prompt.
print(tokenizer.decode(outputs[0][inputs["input_ids"].shape[-1]:]))
```

- Local Apps

- vLLM

How to use TIGER-Lab/SWE-Next-14B with vLLM:

Install from pip and serve model

```shell
# Install vLLM from pip:
pip install vllm

# Start the vLLM server:
vllm serve "TIGER-Lab/SWE-Next-14B"

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:8000/v1/chat/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "TIGER-Lab/SWE-Next-14B",
    "messages": [
      {
        "role": "user",
        "content": "What is the capital of France?"
      }
    ]
  }'
```

Use Docker

```shell
docker model run hf.co/TIGER-Lab/SWE-Next-14B
```

- SGLang

How to use TIGER-Lab/SWE-Next-14B with SGLang:

Install from pip and serve model

```shell
# Install SGLang from pip:
pip install sglang

# Start the SGLang server:
python3 -m sglang.launch_server \
  --model-path "TIGER-Lab/SWE-Next-14B" \
  --host 0.0.0.0 \
  --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "TIGER-Lab/SWE-Next-14B",
    "messages": [
      {
        "role": "user",
        "content": "What is the capital of France?"
      }
    ]
  }'
```

Use Docker images

```shell
docker run --gpus all \
  --shm-size 32g \
  -p 30000:30000 \
  -v ~/.cache/huggingface:/root/.cache/huggingface \
  --env "HF_TOKEN=<secret>" \
  --ipc=host \
  lmsysorg/sglang:latest \
  python3 -m sglang.launch_server \
    --model-path "TIGER-Lab/SWE-Next-14B" \
    --host 0.0.0.0 \
    --port 30000

# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:30000/v1/chat/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "TIGER-Lab/SWE-Next-14B",
    "messages": [
      {
        "role": "user",
        "content": "What is the capital of France?"
      }
    ]
  }'
```

- Docker Model Runner

How to use TIGER-Lab/SWE-Next-14B with Docker Model Runner:

```shell
docker model run hf.co/TIGER-Lab/SWE-Next-14B
```

SWE-Next: Scalable Real-World Software Engineering Tasks for Agents

SWE-Next-14B

SWE-Next-14B is a repository-level software engineering agent fine-tuned from Qwen/Qwen2.5-Coder-14B-Instruct on the released SWE-Next SFT Trajectories. The model is trained with full-parameter supervised fine-tuning on execution-grounded trajectories collected from real merged pull requests and validated repository environments.

Introduction

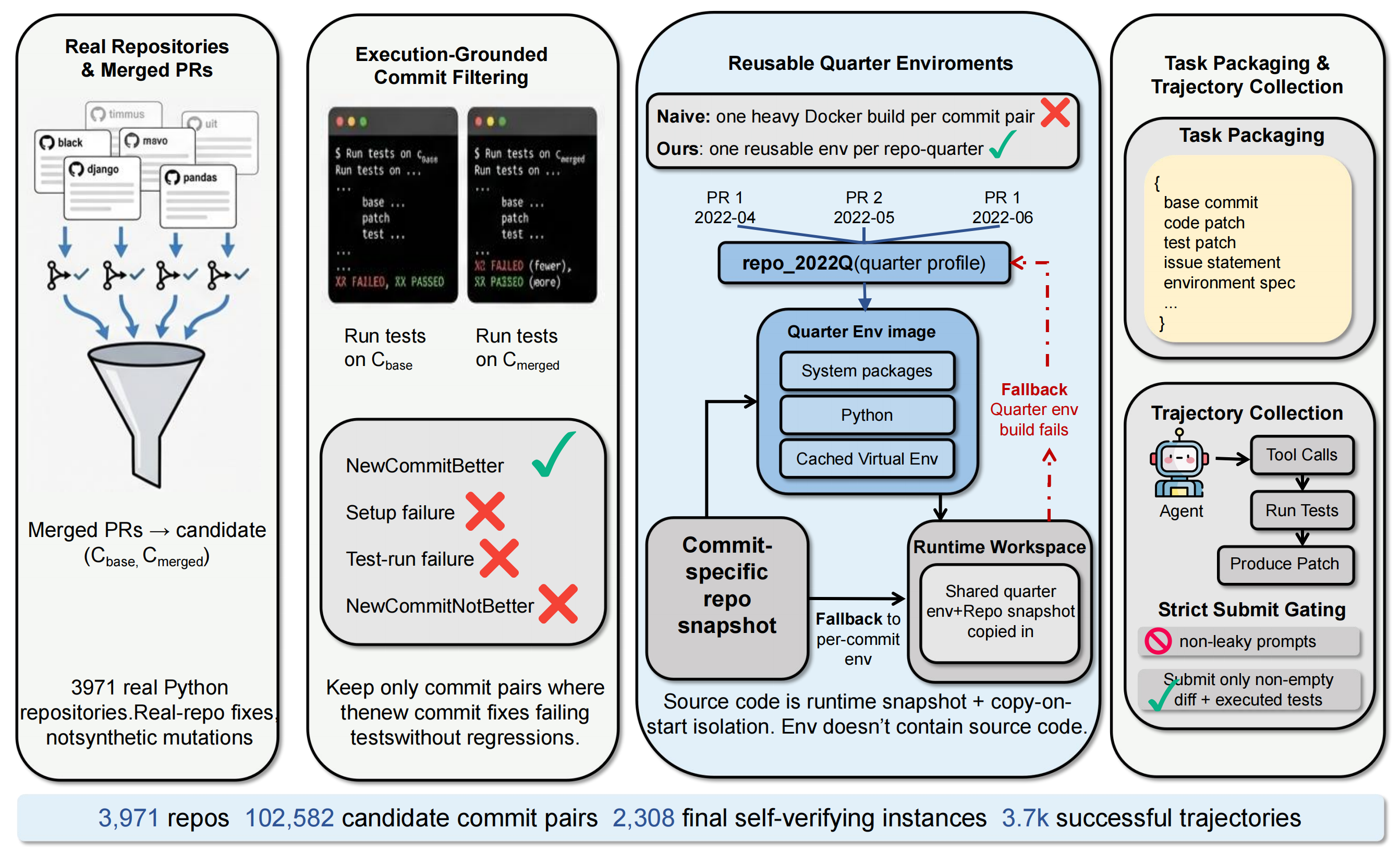

SWE-Next introduces reusable repo-quarter profiles, which share one environment across commits that are close together in time while keeping each task run separate and reproducible. In 30 hours and with 639 GB of environment storage, SWE-Next processes 3,971 seed repositories and 102,582 candidate commit pairs mined from real merged PRs, yielding a dataset of 2,308 self-verifying instances. Training on SWE-Next improves downstream pass@1 on SWE-Bench Verified and SWE-Bench Lite with fewer or comparable training trajectories, making large-scale executable data collection far more practical and accessible for research.
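To make the idea of a repo-quarter profile concrete, the sketch below groups commits by repository and calendar quarter so that every commit in a group could share one prepared environment. This is only an illustration: the assumption that a "quarter" means a calendar quarter, the helper names `repo_quarter_key` and `group_by_profile`, and the sample commits are all hypothetical and are not taken from the SWE-Next codebase.

```python
from collections import defaultdict
from datetime import date


def repo_quarter_key(repo: str, commit_date: date) -> tuple[str, int, int]:
    """Map a commit to a hypothetical (repo, year, quarter) profile key."""
    quarter = (commit_date.month - 1) // 3 + 1  # months 1-3 -> 1, 4-6 -> 2, ...
    return (repo, commit_date.year, quarter)


def group_by_profile(commits):
    """Group (repo, sha, date) records so each group can share one environment."""
    groups = defaultdict(list)
    for repo, sha, commit_date in commits:
        groups[repo_quarter_key(repo, commit_date)].append(sha)
    return dict(groups)


# Hypothetical commits, for illustration only.
commits = [
    ("org/project", "a1", date(2023, 1, 15)),
    ("org/project", "b2", date(2023, 3, 2)),
    ("org/project", "c3", date(2023, 4, 10)),
]
profiles = group_by_profile(commits)
for key, shas in profiles.items():
    print(key, shas)
```

Under this assumed keying, the first two commits fall into the same profile and the third starts a new one, so three tasks would need two environment builds instead of three.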

Model Overview

This model is trained on 3,693 selected SFT trajectories derived from the SWE-Next collection. The training data emphasizes clean repository-level repair traces and recovery-style debugging trajectories rather than isolated code-completion examples.

Training recipe summary:

- Base model: Qwen/Qwen2.5-Coder-14B-Instruct

- Finetuning: full-parameter SFT

- Context length: 32,768

- Learning rate: 1e-5

- Scheduler: cosine

- Dataset: TIGER-Lab/SWE-Next-SFT-Trajectories
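As a rough illustration of what a cosine scheduler with a peak learning rate of 1e-5 looks like, the sketch below computes the learning rate at a given step. The recipe above does not state the number of training steps, any warmup phase, or the final learning rate, so `total_steps`, the absence of warmup, and `min_lr = 0.0` are assumptions made only for this example.

```python
import math

PEAK_LR = 1e-5  # learning rate from the recipe above


def cosine_lr(step: int, total_steps: int,
              peak_lr: float = PEAK_LR, min_lr: float = 0.0) -> float:
    """Cosine decay from peak_lr to min_lr over total_steps (no warmup assumed)."""
    progress = min(max(step / total_steps, 0.0), 1.0)  # clamp to [0, 1]
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


total_steps = 1000  # hypothetical value, not from the model card
for step in (0, 250, 500, 750, 1000):
    print(step, cosine_lr(step, total_steps))
```

The schedule starts at the peak rate, passes through half the peak at the midpoint, and decays to the minimum rate at the final step.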

Usage

For full usage details, please refer to the official SWE-Next GitHub repository. The repository provides the complete setup and evaluation workflow for released models, including:

- environment and dependency installation,

- dataset and trajectory downloads,

- training configurations for the 7B and 14B models,

- vLLM serving commands and repository-level evaluation scripts.

In particular, the GitHub repo contains the exact commands used to serve SWE-Next-14B and evaluate it on SWE-Bench-style tasks under the SWE-Next execution interface.
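For a quick sanity check before running the full evaluation workflow, a served model can be queried through vLLM's OpenAI-compatible chat endpoint. The sketch below uses only the Python standard library; the address `http://localhost:8000/v1` is an assumption (the default for `vllm serve`), and the helper names `build_chat_request` and `chat` are hypothetical. It sends a plain chat request and does not reproduce the SWE-Next execution interface, for which the GitHub repository remains the reference.

```python
import json
import urllib.request

MODEL_ID = "TIGER-Lab/SWE-Next-14B"
BASE_URL = "http://localhost:8000/v1"  # assumed default address of `vllm serve`


def build_chat_request(prompt: str, max_tokens: int = 256) -> dict:
    """Build an OpenAI-style chat completion payload for a single user turn."""
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }


def chat(prompt: str, base_url: str = BASE_URL) -> str:
    """POST the payload to a running server and return the assistant's reply."""
    body = json.dumps(build_chat_request(prompt)).encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        reply = json.load(response)
    return reply["choices"][0]["message"]["content"]


# Printing the payload needs no server; calling chat(...) does.
payload = build_chat_request("Who are you?")
print(json.dumps(payload, indent=2))
```

With a server running, `chat("Who are you?")` would return the model's reply as a string.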

Relationship to the SWE-Next Release

This repo contains the released 14B model checkpoint. Related artifacts are available separately:

- Base task dataset: TIGER-Lab/SWE-Next

- SFT trajectories: TIGER-Lab/SWE-Next-SFT-Trajectories

- Companion model: TIGER-Lab/SWE-Next-7B

- Project code: github.com/TIGER-AI-Lab/SWE-Next

Citation

```bibtex
@misc{liang2026swenextscalablerealworldsoftware,
  title={SWE-Next: Scalable Real-World Software Engineering Tasks for Agents},
  author={Jiarong Liang and Zhiheng Lyu and Zijie Liu and Xiangchao Chen and Ping Nie and Kai Zou and Wenhu Chen},
  year={2026},
  eprint={2603.20691},
  archivePrefix={arXiv},
  primaryClass={cs.SE},
  url={https://arxiv.org/abs/2603.20691},
}
```

Model tree for TIGER-Lab/SWE-Next-14B

Base model

Qwen/Qwen2.5-14B