Spaces:

Runtime error

Runtime error

Update README.md

Browse files

README.md

CHANGED

|

@@ -1,258 +1,258 @@

|

|

| 1 |

-

---

|

| 2 |

-

title: MomaskWorking

|

| 3 |

-

app_file: app.py

|

| 4 |

-

sdk: gradio

|

| 5 |

-

sdk_version:

|

| 6 |

-

---

|

| 7 |

-

# MoMask: Generative Masked Modeling of 3D Human Motions (CVPR 2024)

|

| 8 |

-

### [[Project Page]](https://ericguo5513.github.io/momask) [[Paper]](https://arxiv.org/abs/2312.00063) [[Huggingface Demo]](https://huggingface.co/spaces/MeYourHint/MoMask) [[Colab Demo]](https://github.com/camenduru/MoMask-colab)

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

If you find our code or paper helpful, please consider starring our repository and citing:

|

| 12 |

-

```

|

| 13 |

-

@inproceedings{guo2024momask,

|

| 14 |

-

title={Momask: Generative masked modeling of 3d human motions},

|

| 15 |

-

author={Guo, Chuan and Mu, Yuxuan and Javed, Muhammad Gohar and Wang, Sen and Cheng, Li},

|

| 16 |

-

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

|

| 17 |

-

pages={1900--1910},

|

| 18 |

-

year={2024}

|

| 19 |

-

}

|

| 20 |

-

```

|

| 21 |

-

|

| 22 |

-

## :postbox: News

|

| 23 |

-

📢 **2024-08-02** --- The [WebUI demo 🤗](https://huggingface.co/spaces/MeYourHint/MoMask) is now running smoothly on a CPU. No GPU is required to use MoMask.

|

| 24 |

-

|

| 25 |

-

📢 **2024-02-26** --- 🔥🔥🔥 Congrats! MoMask is accepted to CVPR 2024.

|

| 26 |

-

|

| 27 |

-

📢 **2024-01-12** --- Now you can use MoMask in Blender as an add-on. Thanks to [@makeinufilm](https://twitter.com/makeinufilm) for sharing the [tutorial](https://medium.com/@makeinufilm/notes-on-how-to-set-up-the-momask-environment-and-how-to-use-blenderaddon-6563f1abdbfa).

|

| 28 |

-

|

| 29 |

-

📢 **2023-12-30** --- For easy WebUI BVH visulization, you could try this website [bvh2vrma](https://vrm-c.github.io/bvh2vrma/) from this [github](https://github.com/vrm-c/bvh2vrma?tab=readme-ov-file).

|

| 30 |

-

|

| 31 |

-

📢 **2023-12-29** --- Thanks to Camenduru for supporting the [🤗Colab](https://github.com/camenduru/MoMask-colab) demo.

|

| 32 |

-

|

| 33 |

-

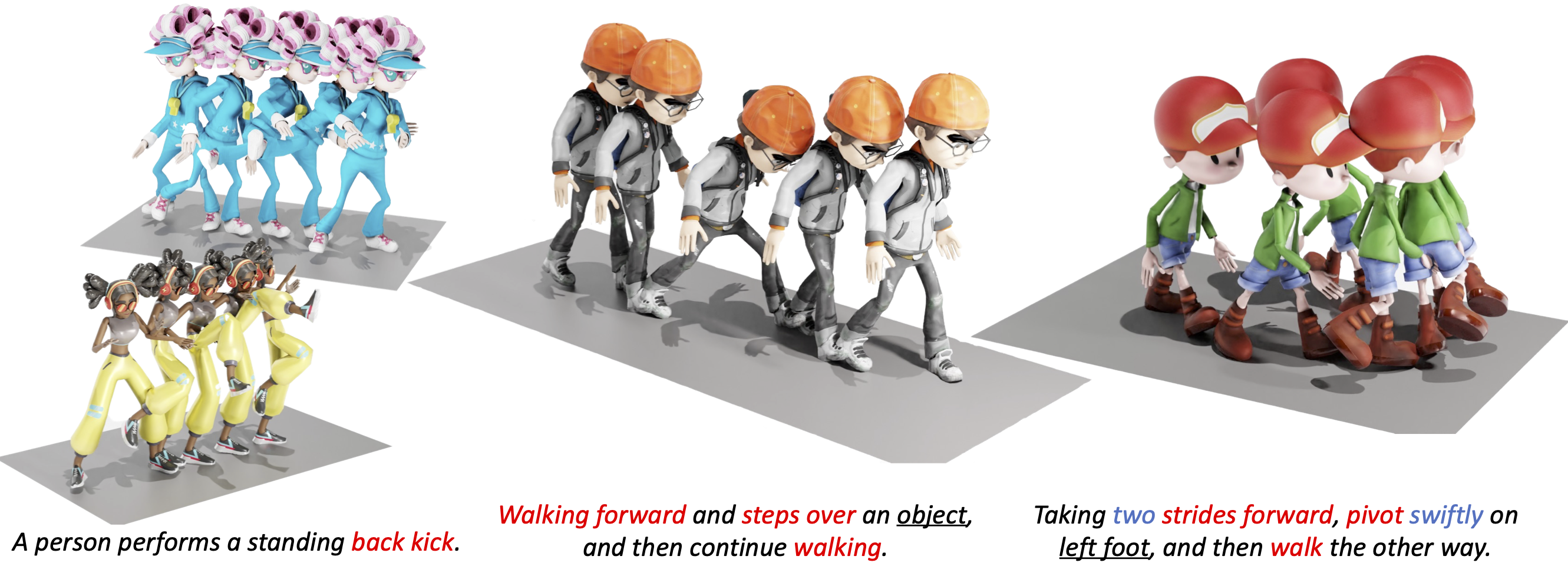

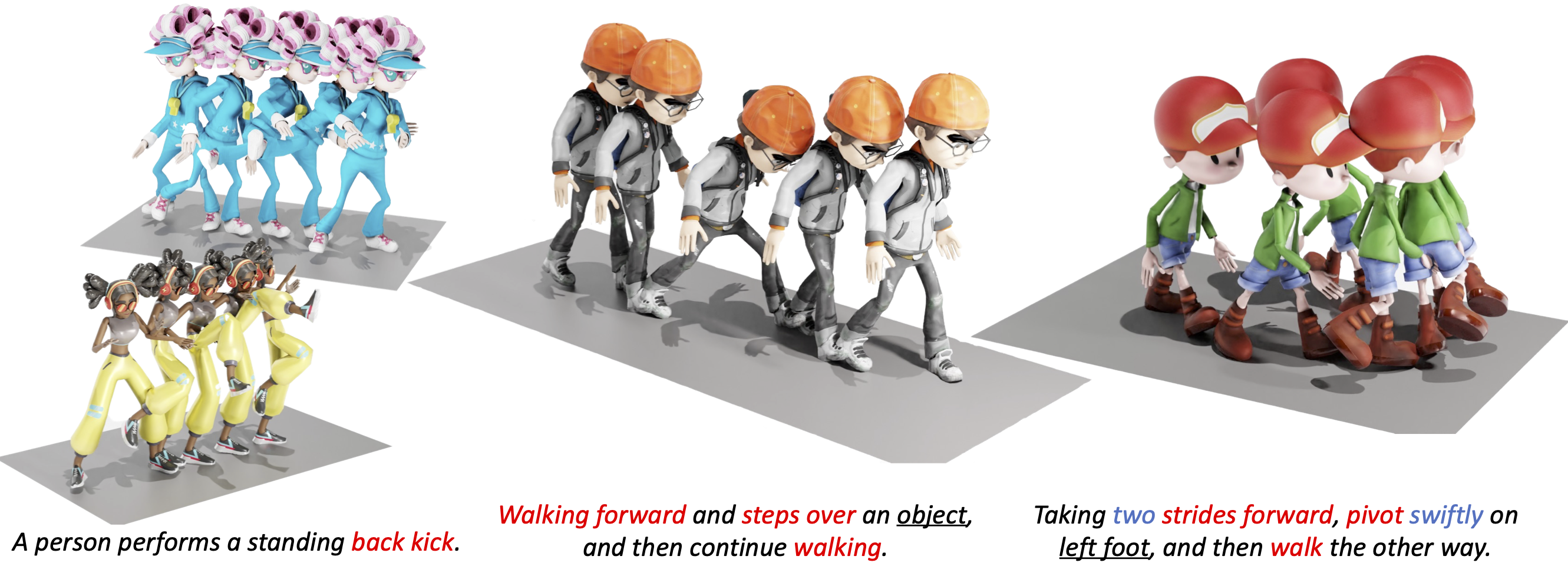

📢 **2023-12-27** --- Release WebUI demo. Try now on [🤗HuggingFace](https://huggingface.co/spaces/MeYourHint/MoMask)!

|

| 34 |

-

|

| 35 |

-

📢 **2023-12-19** --- Release scripts for temporal inpainting.

|

| 36 |

-

|

| 37 |

-

📢 **2023-12-15** --- Release codes and models for momask. Including training/eval/generation scripts.

|

| 38 |

-

|

| 39 |

-

📢 **2023-11-29** --- Initialized the webpage and git project.

|

| 40 |

-

|

| 41 |

-

|

| 42 |

-

## :round_pushpin: Get You Ready

|

| 43 |

-

|

| 44 |

-

<details>

|

| 45 |

-

|

| 46 |

-

### 1. Conda Environment

|

| 47 |

-

```

|

| 48 |

-

conda env create -f environment.yml

|

| 49 |

-

conda activate momask

|

| 50 |

-

pip install git+https://github.com/openai/CLIP.git

|

| 51 |

-

```

|

| 52 |

-

We test our code on Python 3.7.13 and PyTorch 1.7.1

|

| 53 |

-

|

| 54 |

-

#### Alternative: Pip Installation

|

| 55 |

-

<details>

|

| 56 |

-

We provide an alternative pip installation in case you encounter difficulties setting up the conda environment.

|

| 57 |

-

|

| 58 |

-

```

|

| 59 |

-

pip install -r requirements.txt

|

| 60 |

-

```

|

| 61 |

-

We test this installation on Python 3.10

|

| 62 |

-

|

| 63 |

-

</details>

|

| 64 |

-

|

| 65 |

-

### 2. Models and Dependencies

|

| 66 |

-

|

| 67 |

-

#### Download Pre-trained Models

|

| 68 |

-

```

|

| 69 |

-

bash prepare/download_models.sh

|

| 70 |

-

```

|

| 71 |

-

|

| 72 |

-

#### Download Evaluation Models and Gloves

|

| 73 |

-

For evaluation only.

|

| 74 |

-

```

|

| 75 |

-

bash prepare/download_evaluator.sh

|

| 76 |

-

bash prepare/download_glove.sh

|

| 77 |

-

```

|

| 78 |

-

|

| 79 |

-

#### Troubleshooting

|

| 80 |

-

To address the download error related to gdown: "Cannot retrieve the public link of the file. You may need to change the permission to 'Anyone with the link', or have had many accesses". A potential solution is to run `pip install --upgrade --no-cache-dir gdown`, as suggested on https://github.com/wkentaro/gdown/issues/43. This should help resolve the issue.

|

| 81 |

-

|

| 82 |

-

#### (Optional) Download Manually

|

| 83 |

-

Visit [[Google Drive]](https://drive.google.com/drive/folders/1sHajltuE2xgHh91H9pFpMAYAkHaX9o57?usp=drive_link) to download the models and evaluators mannually.

|

| 84 |

-

|

| 85 |

-

### 3. Get Data

|

| 86 |

-

|

| 87 |

-

You have two options here:

|

| 88 |

-

* **Skip getting data**, if you just want to generate motions using *own* descriptions.

|

| 89 |

-

* **Get full data**, if you want to *re-train* and *evaluate* the model.

|

| 90 |

-

|

| 91 |

-

**(a). Full data (text + motion)**

|

| 92 |

-

|

| 93 |

-

**HumanML3D** - Follow the instruction in [HumanML3D](https://github.com/EricGuo5513/HumanML3D.git), then copy the result dataset to our repository:

|

| 94 |

-

```

|

| 95 |

-

cp -r ../HumanML3D/HumanML3D ./dataset/HumanML3D

|

| 96 |

-

```

|

| 97 |

-

**KIT**-Download from [HumanML3D](https://github.com/EricGuo5513/HumanML3D.git), then place result in `./dataset/KIT-ML`

|

| 98 |

-

|

| 99 |

-

####

|

| 100 |

-

|

| 101 |

-

</details>

|

| 102 |

-

|

| 103 |

-

## :rocket: Demo

|

| 104 |

-

<details>

|

| 105 |

-

|

| 106 |

-

### (a) Generate from a single prompt

|

| 107 |

-

```

|

| 108 |

-

python gen_t2m.py --gpu_id 1 --ext exp1 --text_prompt "A person is running on a treadmill."

|

| 109 |

-

```

|

| 110 |

-

### (b) Generate from a prompt file

|

| 111 |

-

An example of prompt file is given in `./assets/text_prompt.txt`. Please follow the format of `<text description>#<motion length>` at each line. Motion length indicates the number of poses, which must be integeter and will be rounded by 4. In our work, motion is in 20 fps.

|

| 112 |

-

|

| 113 |

-

If you write `<text description>#NA`, our model will determine a length. Note once there is **one** NA, all the others will be **NA** automatically.

|

| 114 |

-

|

| 115 |

-

```

|

| 116 |

-

python gen_t2m.py --gpu_id 1 --ext exp2 --text_path ./assets/text_prompt.txt

|

| 117 |

-

```

|

| 118 |

-

|

| 119 |

-

|

| 120 |

-

A few more parameters you may be interested:

|

| 121 |

-

* `--repeat_times`: number of replications for generation, default `1`.

|

| 122 |

-

* `--motion_length`: specify the number of poses for generation, only applicable in (a).

|

| 123 |

-

|

| 124 |

-

The output files are stored under folder `./generation/<ext>/`. They are

|

| 125 |

-

* `numpy files`: generated motions with shape of (nframe, 22, 3), under subfolder `./joints`.

|

| 126 |

-

* `video files`: stick figure animation in mp4 format, under subfolder `./animation`.

|

| 127 |

-

* `bvh files`: bvh files of the generated motion, under subfolder `./animation`.

|

| 128 |

-

|

| 129 |

-

We also apply naive foot ik to the generated motions, see files with suffix `_ik`. It sometimes works well, but sometimes will fail.

|

| 130 |

-

|

| 131 |

-

</details>

|

| 132 |

-

|

| 133 |

-

## :dancers: Visualization

|

| 134 |

-

<details>

|

| 135 |

-

|

| 136 |

-

All the animations are manually rendered in blender. We use the characters from [mixamo](https://www.mixamo.com/#/). You need to download the characters in T-Pose with skeleton.

|

| 137 |

-

|

| 138 |

-

### Retargeting

|

| 139 |

-

For retargeting, we found rokoko usually leads to large error on foot. On the other hand, [keemap.rig.transfer](https://github.com/nkeeline/Keemap-Blender-Rig-ReTargeting-Addon/releases) shows more precise retargetting. You could watch the [tutorial](https://www.youtube.com/watch?v=EG-VCMkVpxg) here.

|

| 140 |

-

|

| 141 |

-

Following these steps:

|

| 142 |

-

* Download keemap.rig.transfer from the github, and install it in blender.

|

| 143 |

-

* Import both the motion files (.bvh) and character files (.fbx) in blender.

|

| 144 |

-

* `Shift + Select` the both source and target skeleton. (Do not need to be Rest Position)

|

| 145 |

-

* Switch to `Pose Mode`, then unfold the `KeeMapRig` tool at the top-right corner of the view window.

|

| 146 |

-

* For `bone mapping file`, direct to `./assets/mapping.json`(or `mapping6.json` if it doesn't work), and click `Read In Bone Mapping File`. This file is manually made by us. It works for most characters in mixamo.

|

| 147 |

-

* (Optional) You could manually fill in the bone mapping and adjust the rotations by your own, for your own character. `Save Bone Mapping File` can save the mapping configuration in local file, as specified by the mapping file path.

|

| 148 |

-

* Adjust the `Number of Samples`, `Source Rig`, `Destination Rig Name`.

|

| 149 |

-

* Clik `Transfer Animation from Source Destination`, wait a few seconds.

|

| 150 |

-

|

| 151 |

-

We didn't tried other retargetting tools. Welcome to comment if you find others are more useful.

|

| 152 |

-

|

| 153 |

-

### Scene

|

| 154 |

-

|

| 155 |

-

We use this [scene](https://drive.google.com/file/d/16SbrnG9JsJ2w7UwCFmh10PcBdl6HxlrA/view?usp=drive_link) for animation.

|

| 156 |

-

|

| 157 |

-

|

| 158 |

-

</details>

|

| 159 |

-

|

| 160 |

-

## :clapper: Temporal Inpainting

|

| 161 |

-

<details>

|

| 162 |

-

We conduct mask-based editing in the m-transformer stage, followed by the regeneration of residual tokens for the entire sequence. To load your own motion, provide the path through `--source_motion`. Utilize `-msec` to specify the mask section, supporting either ratio or frame index. For instance, `-msec 0.3,0.6` with `max_motion_length=196` is equivalent to `-msec 59,118`, indicating the editing of the frame section [59, 118].

|

| 163 |

-

|

| 164 |

-

```

|

| 165 |

-

python edit_t2m.py --gpu_id 1 --ext exp3 --use_res_model -msec 0.4,0.7 --text_prompt "A man picks something from the ground using his right hand."

|

| 166 |

-

```

|

| 167 |

-

|

| 168 |

-

Note: Presently, the source motion must adhere to the format of a HumanML3D dim-263 feature vector. An example motion vector data from the HumanML3D test set is available in `example_data/000612.npy`. To process your own motion data, you can utilize the `process_file` function from `utils/motion_process.py`.

|

| 169 |

-

|

| 170 |

-

</details>

|

| 171 |

-

|

| 172 |

-

## :space_invader: Train Your Own Models

|

| 173 |

-

<details>

|

| 174 |

-

|

| 175 |

-

|

| 176 |

-

**Note**: You have to train RVQ **BEFORE** training masked/residual transformers. The latter two can be trained simultaneously.

|

| 177 |

-

|

| 178 |

-

### Train RVQ

|

| 179 |

-

You may also need to download evaluation models to run the scripts.

|

| 180 |

-

```

|

| 181 |

-

python train_vq.py --name rvq_name --gpu_id 1 --dataset_name t2m --batch_size 256 --num_quantizers 6 --max_epoch 50 --quantize_dropout_prob 0.2 --gamma 0.05

|

| 182 |

-

```

|

| 183 |

-

|

| 184 |

-

### Train Masked Transformer

|

| 185 |

-

```

|

| 186 |

-

python train_t2m_transformer.py --name mtrans_name --gpu_id 2 --dataset_name t2m --batch_size 64 --vq_name rvq_name

|

| 187 |

-

```

|

| 188 |

-

|

| 189 |

-

### Train Residual Transformer

|

| 190 |

-

```

|

| 191 |

-

python train_res_transformer.py --name rtrans_name --gpu_id 2 --dataset_name t2m --batch_size 64 --vq_name rvq_name --cond_drop_prob 0.2 --share_weight

|

| 192 |

-

```

|

| 193 |

-

|

| 194 |

-

* `--dataset_name`: motion dataset, `t2m` for HumanML3D and `kit` for KIT-ML.

|

| 195 |

-

* `--name`: name your model. This will create to model space as `./checkpoints/<dataset_name>/<name>`

|

| 196 |

-

* `--gpu_id`: GPU id.

|

| 197 |

-

* `--batch_size`: we use `512` for rvq training. For masked/residual transformer, we use `64` on HumanML3D and `16` for KIT-ML.

|

| 198 |

-

* `--num_quantizers`: number of quantization layers, `6` is used in our case.

|

| 199 |

-

* `--quantize_drop_prob`: quantization dropout ratio, `0.2` is used.

|

| 200 |

-

* `--vq_name`: when training masked/residual transformer, you need to specify the name of rvq model for tokenization.

|

| 201 |

-

* `--cond_drop_prob`: condition drop ratio, for classifier-free guidance. `0.2` is used.

|

| 202 |

-

* `--share_weight`: whether to share the projection/embedding weights in residual transformer.

|

| 203 |

-

|

| 204 |

-

All the pre-trained models and intermediate results will be saved in space `./checkpoints/<dataset_name>/<name>`.

|

| 205 |

-

</details>

|

| 206 |

-

|

| 207 |

-

## :book: Evaluation

|

| 208 |

-

<details>

|

| 209 |

-

|

| 210 |

-

### Evaluate RVQ Reconstruction:

|

| 211 |

-

HumanML3D:

|

| 212 |

-

```

|

| 213 |

-

python eval_t2m_vq.py --gpu_id 0 --name rvq_nq6_dc512_nc512_noshare_qdp0.2 --dataset_name t2m --ext rvq_nq6

|

| 214 |

-

|

| 215 |

-

```

|

| 216 |

-

KIT-ML:

|

| 217 |

-

```

|

| 218 |

-

python eval_t2m_vq.py --gpu_id 0 --name rvq_nq6_dc512_nc512_noshare_qdp0.2_k --dataset_name kit --ext rvq_nq6

|

| 219 |

-

```

|

| 220 |

-

|

| 221 |

-

### Evaluate Text2motion Generation:

|

| 222 |

-

HumanML3D:

|

| 223 |

-

```

|

| 224 |

-

python eval_t2m_trans_res.py --res_name tres_nlayer8_ld384_ff1024_rvq6ns_cdp0.2_sw --dataset_name t2m --name t2m_nlayer8_nhead6_ld384_ff1024_cdp0.1_rvq6ns --gpu_id 1 --cond_scale 4 --time_steps 10 --ext evaluation

|

| 225 |

-

```

|

| 226 |

-

KIT-ML:

|

| 227 |

-

```

|

| 228 |

-

python eval_t2m_trans_res.py --res_name tres_nlayer8_ld384_ff1024_rvq6ns_cdp0.2_sw_k --dataset_name kit --name t2m_nlayer8_nhead6_ld384_ff1024_cdp0.1_rvq6ns_k --gpu_id 0 --cond_scale 2 --time_steps 10 --ext evaluation

|

| 229 |

-

```

|

| 230 |

-

|

| 231 |

-

* `--res_name`: model name of `residual transformer`.

|

| 232 |

-

* `--name`: model name of `masked transformer`.

|

| 233 |

-

* `--cond_scale`: scale of classifer-free guidance.

|

| 234 |

-

* `--time_steps`: number of iterations for inference.

|

| 235 |

-

* `--ext`: filename for saving evaluation results.

|

| 236 |

-

* `--which_epoch`: checkpoint name of `masked transformer`.

|

| 237 |

-

|

| 238 |

-

The final evaluation results will be saved in `./checkpoints/<dataset_name>/<name>/eval/<ext>.log`

|

| 239 |

-

|

| 240 |

-

</details>

|

| 241 |

-

|

| 242 |

-

## Acknowlegements

|

| 243 |

-

|

| 244 |

-

We sincerely thank the open-sourcing of these works where our code is based on:

|

| 245 |

-

|

| 246 |

-

[deep-motion-editing](https://github.com/DeepMotionEditing/deep-motion-editing), [Muse](https://github.com/lucidrains/muse-maskgit-pytorch), [vector-quantize-pytorch](https://github.com/lucidrains/vector-quantize-pytorch), [T2M-GPT](https://github.com/Mael-zys/T2M-GPT), [MDM](https://github.com/GuyTevet/motion-diffusion-model/tree/main) and [MLD](https://github.com/ChenFengYe/motion-latent-diffusion/tree/main)

|

| 247 |

-

|

| 248 |

-

## License

|

| 249 |

-

This code is distributed under an [MIT LICENSE](https://github.com/EricGuo5513/momask-codes/tree/main?tab=MIT-1-ov-file#readme).

|

| 250 |

-

|

| 251 |

-

Note that our code depends on other libraries, including SMPL, SMPL-X, PyTorch3D, and uses datasets which each have their own respective licenses that must also be followed.

|

| 252 |

-

|

| 253 |

-

### Misc

|

| 254 |

-

Contact cguo2@ualberta.ca for further questions.

|

| 255 |

-

|

| 256 |

-

## Star History

|

| 257 |

-

|

| 258 |

-

[](https://star-history.com/#EricGuo5513/momask-codes&Date)

|

|

|

|

| 1 |

+

---
title: MomaskWorking
app_file: app.py
sdk: gradio
sdk_version: 6.0.1
---

# MoMask: Generative Masked Modeling of 3D Human Motions (CVPR 2024)
### [[Project Page]](https://ericguo5513.github.io/momask) [[Paper]](https://arxiv.org/abs/2312.00063) [[Huggingface Demo]](https://huggingface.co/spaces/MeYourHint/MoMask) [[Colab Demo]](https://github.com/camenduru/MoMask-colab)

If you find our code or paper helpful, please consider starring our repository and citing:
```
@inproceedings{guo2024momask,
  title={Momask: Generative masked modeling of 3d human motions},
  author={Guo, Chuan and Mu, Yuxuan and Javed, Muhammad Gohar and Wang, Sen and Cheng, Li},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  pages={1900--1910},
  year={2024}
}
```

## :postbox: News
📢 **2024-08-02** --- The [WebUI demo 🤗](https://huggingface.co/spaces/MeYourHint/MoMask) is now running smoothly on a CPU. No GPU is required to use MoMask.

📢 **2024-02-26** --- 🔥🔥🔥 Congrats! MoMask has been accepted to CVPR 2024.

📢 **2024-01-12** --- You can now use MoMask in Blender as an add-on. Thanks to [@makeinufilm](https://twitter.com/makeinufilm) for sharing the [tutorial](https://medium.com/@makeinufilm/notes-on-how-to-set-up-the-momask-environment-and-how-to-use-blenderaddon-6563f1abdbfa).

📢 **2023-12-30** --- For easy WebUI BVH visualization, you can try the [bvh2vrma](https://vrm-c.github.io/bvh2vrma/) website ([GitHub](https://github.com/vrm-c/bvh2vrma?tab=readme-ov-file)).

📢 **2023-12-29** --- Thanks to Camenduru for supporting the [🤗Colab](https://github.com/camenduru/MoMask-colab) demo.

📢 **2023-12-27** --- Released the WebUI demo. Try it now on [🤗HuggingFace](https://huggingface.co/spaces/MeYourHint/MoMask)!

📢 **2023-12-19** --- Released scripts for temporal inpainting.

📢 **2023-12-15** --- Released code and models for MoMask, including training, evaluation, and generation scripts.

📢 **2023-11-29** --- Initialized the webpage and git project.


## :round_pushpin: Get Ready

<details>

### 1. Conda Environment
```
conda env create -f environment.yml
conda activate momask
pip install git+https://github.com/openai/CLIP.git
```
We tested our code on Python 3.7.13 and PyTorch 1.7.1.

#### Alternative: Pip Installation
<details>
We provide an alternative pip installation in case you encounter difficulties setting up the conda environment.

```
pip install -r requirements.txt
```
We tested this installation on Python 3.10.

</details>

### 2. Models and Dependencies

#### Download Pre-trained Models
```
bash prepare/download_models.sh
```

#### Download Evaluation Models and GloVe
For evaluation only.
```
bash prepare/download_evaluator.sh
bash prepare/download_glove.sh
```

#### Troubleshooting
If a download fails with the gdown error "Cannot retrieve the public link of the file. You may need to change the permission to 'Anyone with the link', or have had many accesses", a potential solution is to run `pip install --upgrade --no-cache-dir gdown`, as suggested in https://github.com/wkentaro/gdown/issues/43.

#### (Optional) Download Manually
Visit [[Google Drive]](https://drive.google.com/drive/folders/1sHajltuE2xgHh91H9pFpMAYAkHaX9o57?usp=drive_link) to download the models and evaluators manually.

### 3. Get Data

You have two options here:
* **Skip getting data**, if you just want to generate motions from your *own* descriptions.
* **Get full data**, if you want to *re-train* and *evaluate* the model.

**(a). Full data (text + motion)**

**HumanML3D** - Follow the instructions in [HumanML3D](https://github.com/EricGuo5513/HumanML3D.git), then copy the resulting dataset into our repository:
```
cp -r ../HumanML3D/HumanML3D ./dataset/HumanML3D
```
**KIT** - Download from [HumanML3D](https://github.com/EricGuo5513/HumanML3D.git), then place the result in `./dataset/KIT-ML`.
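
Before training or evaluating, it can help to confirm that both dataset folders are in place. The sketch below is a hypothetical checker (`missing_entries` is not part of this repository), and the expected file and folder names are an assumption based on the usual HumanML3D output layout, so adjust them if your copy differs.

```python
from pathlib import Path
from typing import List, Sequence

# Assumption: typical contents of a prepared HumanML3D / KIT-ML folder.
# These names are not confirmed by this README; adjust them to your copy.
EXPECTED_ENTRIES = (
    "new_joint_vecs", "texts", "Mean.npy", "Std.npy",
    "train.txt", "val.txt", "test.txt",
)


def missing_entries(dataset_dir: str,
                    expected: Sequence[str] = EXPECTED_ENTRIES) -> List[str]:
    """Return the expected files/folders that are absent from dataset_dir."""
    root = Path(dataset_dir)
    return [name for name in expected if not (root / name).exists()]


if __name__ == "__main__":
    for folder in ("./dataset/HumanML3D", "./dataset/KIT-ML"):
        missing = missing_entries(folder)
        print(folder, "OK" if not missing else f"missing: {missing}")
```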

</details>

## :rocket: Demo
<details>

### (a) Generate from a single prompt
```
python gen_t2m.py --gpu_id 1 --ext exp1 --text_prompt "A person is running on a treadmill."
```
### (b) Generate from a prompt file
An example prompt file is provided in `./assets/text_prompt.txt`. Each line must follow the format `<text description>#<motion length>`. The motion length is the number of poses; it must be an integer and will be rounded to a multiple of 4. Motions in our work are at 20 fps.

If you write `<text description>#NA`, our model estimates the length. Note that once **one** line uses NA, all the other lines are treated as **NA** automatically.

```
python gen_t2m.py --gpu_id 1 --ext exp2 --text_path ./assets/text_prompt.txt
```
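
The prompt-file rules above can be sketched in Python as follows. `parse_prompt_lines` is a hypothetical helper, not the parser inside `gen_t2m.py`, and it assumes that "rounded to a multiple of 4" means rounding *down* (integer division by 4, then multiplication by 4). It also converts a pose count to seconds at 20 fps.

```python
from typing import List, Optional, Tuple

FPS = 20              # motions in this work are at 20 fps
FRAMES_PER_UNIT = 4   # assumption: lengths are floored to a multiple of 4


def parse_prompt_lines(lines: List[str]) -> Tuple[List[str], Optional[List[int]]]:
    """Parse '<text description>#<motion length>' lines.

    Returns (prompts, lengths). lengths is None when any line uses '#NA'
    (or has no length), because one NA makes every length NA.
    """
    prompts: List[str] = []
    lengths: List[int] = []
    any_na = False
    for raw in lines:
        line = raw.strip()
        if not line:
            continue  # ignore blank lines
        if "#" in line:
            text, length = line.rsplit("#", 1)
        else:
            text, length = line, ""
        prompts.append(text.strip())
        length = length.strip()
        if length.isdigit():
            # Floor to a multiple of 4 (assumed rounding rule).
            lengths.append(int(length) // FRAMES_PER_UNIT * FRAMES_PER_UNIT)
        else:
            any_na = True  # '#NA' or a missing length
    return prompts, (None if any_na else lengths)


def poses_to_seconds(num_poses: int) -> float:
    return num_poses / FPS


prompts, lengths = parse_prompt_lines([
    "A person walks forward and then turns around.#120",
    "A person jumps in place.#98",
])
print(prompts, lengths)        # lengths -> [120, 96]
print(poses_to_seconds(120))   # 6.0 seconds at 20 fps
```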


A few more parameters you may be interested in:
* `--repeat_times`: number of repetitions of the generation, default `1`.
* `--motion_length`: number of poses to generate; only applicable to (a).

The output files are stored under `./generation/<ext>/`:
* `numpy files`: generated motions with shape (nframe, 22, 3), under the subfolder `./joints`.
* `video files`: stick-figure animations in mp4 format, under the subfolder `./animation`.
* `bvh files`: BVH files of the generated motions, under the subfolder `./animation`.

We also apply naive foot IK to the generated motions; see the files with the `_ik` suffix. It sometimes works well, but it can also fail.
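
If you want to post-process a run, a small helper can separate each kind of output from its foot-IK variant. The sketch below is hypothetical (`collect_outputs` is not part of this repository): it groups every file under the `joints` and `animation` subfolders by extension, adding an `_ik` tag when the file stem ends in `_ik`. It searches recursively because the exact file and folder naming inside those subfolders is an assumption here.

```python
from collections import defaultdict
from pathlib import Path
from typing import Dict, Iterable, List


def collect_outputs(gen_dir: str,
                    subfolders: Iterable[str] = ("joints", "animation")) -> Dict[str, List[Path]]:
    """Group generated files by kind, e.g. 'npy', 'bvh', 'mp4', 'bvh_ik'."""
    groups: Dict[str, List[Path]] = defaultdict(list)
    for sub in subfolders:
        base = Path(gen_dir) / sub
        if not base.is_dir():
            continue  # subfolder not produced (or named differently)
        # rglob also descends into any per-sample subdirectories.
        for path in sorted(base.rglob("*")):
            if not path.is_file():
                continue
            kind = path.suffix.lstrip(".").lower()
            if path.stem.endswith("_ik"):
                kind += "_ik"  # foot-IK variant, per the '_ik' suffix convention
            groups[kind].append(path)
    return dict(groups)


if __name__ == "__main__":
    for kind, paths in sorted(collect_outputs("./generation/exp1").items()):
        print(f"{kind}: {len(paths)} file(s)")
```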


</details>

## :dancers: Visualization
<details>

All animations are manually rendered in Blender. We use characters from [mixamo](https://www.mixamo.com/#/); you need to download the characters in T-Pose with skeleton.

### Retargeting
For retargeting, we found that Rokoko usually leads to large errors on the feet, whereas [keemap.rig.transfer](https://github.com/nkeeline/Keemap-Blender-Rig-ReTargeting-Addon/releases) retargets more precisely. You can watch the [tutorial](https://www.youtube.com/watch?v=EG-VCMkVpxg) here.

Follow these steps:
* Download keemap.rig.transfer from GitHub and install it in Blender.
* Import both the motion files (.bvh) and the character files (.fbx) into Blender.
* `Shift + Select` both the source and target skeletons (they do not need to be in Rest Position).
* Switch to `Pose Mode`, then unfold the `KeeMapRig` tool at the top-right corner of the view window.
* For `bone mapping file`, point to `./assets/mapping.json` (or `mapping6.json` if it doesn't work), and click `Read In Bone Mapping File`. We made this file manually; it works for most Mixamo characters.
* (Optional) For your own character, you can fill in the bone mapping and adjust the rotations manually. `Save Bone Mapping File` saves the mapping configuration to the local file specified by the mapping file path.
* Adjust the `Number of Samples`, `Source Rig`, and `Destination Rig Name`.
* Click `Transfer Animation from Source Destination` and wait a few seconds.

We haven't tried other retargeting tools. Feel free to comment if you find others more useful.

### Scene

We use this [scene](https://drive.google.com/file/d/16SbrnG9JsJ2w7UwCFmh10PcBdl6HxlrA/view?usp=drive_link) for animation.


</details>

## :clapper: Temporal Inpainting
<details>
We perform mask-based editing in the masked transformer (M-Transformer) stage, followed by regeneration of the residual tokens for the entire sequence. To load your own motion, provide its path through `--source_motion`. Use `-msec` to specify the mask section, as either ratios or frame indices. For instance, `-msec 0.3,0.6` with `max_motion_length=196` is equivalent to `-msec 59,118`, meaning that the frame section [59, 118] is edited.

```
python edit_t2m.py --gpu_id 1 --ext exp3 --use_res_model -msec 0.4,0.7 --text_prompt "A man picks something from the ground using his right hand."
```
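
The ratio-to-frame conversion described above can be checked with a small sketch. `mask_section_to_frames` is hypothetical, not the parsing code in `edit_t2m.py`; it assumes that values no greater than 1.0 are ratios and rounds to the nearest frame, which reproduces the `0.3,0.6` → `59,118` example.

```python
from typing import Tuple


def mask_section_to_frames(msec: str, max_motion_length: int = 196) -> Tuple[int, int]:
    """Convert an '-msec start,end' value into a (start, end) frame range."""
    start, end = (float(v) for v in msec.split(","))
    if start <= 1.0 and end <= 1.0:
        # Assumption: both values are ratios of the maximum motion length.
        start, end = start * max_motion_length, end * max_motion_length
    return round(start), round(end)


print(mask_section_to_frames("0.3,0.6"))  # (59, 118), matching the example above
print(mask_section_to_frames("0.4,0.7"))  # (78, 137) for the command above, under this assumption
print(mask_section_to_frames("59,118"))   # frame indices pass through: (59, 118)
```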

Note: Currently, the source motion must be in the HumanML3D 263-dimensional feature vector format. An example motion from the HumanML3D test set is available in `example_data/000612.npy`. To process your own motion data, you can use the `process_file` function in `utils/motion_process.py`.
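
As a rough guide to what "263-dimensional" means, the HumanML3D representation is commonly described as the per-frame concatenation below for a 22-joint skeleton. Treat the ordering and names as an assumption and confirm them against `utils/motion_process.py`; `split_frame` is a hypothetical helper for inspecting a single frame.

```python
from typing import Dict, List

NUM_JOINTS = 22  # same skeleton as the (nframe, 22, 3) joint outputs

# Assumed per-frame layout of the 263-dim HumanML3D feature, in order.
FEATURE_LAYOUT = [
    ("root_rot_velocity", 1),              # root angular velocity about the Y axis
    ("root_linear_velocity", 2),           # root velocity on the XZ plane
    ("root_y", 1),                         # root height
    ("ric_data", (NUM_JOINTS - 1) * 3),    # joint positions relative to the root
    ("rot_data", (NUM_JOINTS - 1) * 6),    # joint rotations in 6D form
    ("local_velocity", NUM_JOINTS * 3),    # per-joint velocities
    ("foot_contact", 4),                   # foot-contact labels
]


def split_frame(frame: List[float]) -> Dict[str, List[float]]:
    """Slice one 263-dim frame into its named components."""
    expected = sum(size for _, size in FEATURE_LAYOUT)
    if len(frame) != expected:
        raise ValueError(f"expected {expected} values, got {len(frame)}")
    parts: Dict[str, List[float]] = {}
    offset = 0
    for name, size in FEATURE_LAYOUT:
        parts[name] = frame[offset:offset + size]
        offset += size
    return parts


print({name: len(v) for name, v in split_frame([0.0] * 263).items()})
```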


</details>

## :space_invader: Train Your Own Models
<details>


**Note**: You have to train the RVQ **BEFORE** training the masked/residual transformers. The latter two can be trained simultaneously.

### Train RVQ
You may also need to download the evaluation models to run the scripts.
```
python train_vq.py --name rvq_name --gpu_id 1 --dataset_name t2m --batch_size 256 --num_quantizers 6 --max_epoch 50 --quantize_dropout_prob 0.2 --gamma 0.05
```

### Train Masked Transformer
```
python train_t2m_transformer.py --name mtrans_name --gpu_id 2 --dataset_name t2m --batch_size 64 --vq_name rvq_name
```

### Train Residual Transformer
```
python train_res_transformer.py --name rtrans_name --gpu_id 2 --dataset_name t2m --batch_size 64 --vq_name rvq_name --cond_drop_prob 0.2 --share_weight
```

* `--dataset_name`: motion dataset, `t2m` for HumanML3D and `kit` for KIT-ML.
* `--name`: name of your model. This creates the model directory `./checkpoints/<dataset_name>/<name>`.
* `--gpu_id`: GPU id.
* `--batch_size`: we use `512` for RVQ training. For the masked/residual transformers, we use `64` on HumanML3D and `16` on KIT-ML.
* `--num_quantizers`: number of quantization layers; `6` is used in our case.
* `--quantize_dropout_prob`: quantization dropout ratio; `0.2` is used.
* `--vq_name`: when training the masked/residual transformers, specify the name of the RVQ model used for tokenization.
* `--cond_drop_prob`: condition drop ratio for classifier-free guidance; `0.2` is used.
* `--share_weight`: whether to share the projection/embedding weights in the residual transformer.
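
To make `--num_quantizers` and `--quantize_dropout_prob` concrete, here is a minimal residual vector quantization sketch in plain Python. It is a generic illustration of the technique, not MoMask's implementation: each layer quantizes the residual left by the previous layers, and during training quantization dropout keeps only a random prefix of the layers. The tiny 1-D codebooks are made up for the example.

```python
import random
from typing import List, Sequence

Vector = List[float]
Codebook = Sequence[Vector]


def nearest(codebook: Codebook, x: Vector) -> int:
    """Index of the codebook entry closest to x (squared Euclidean distance)."""
    return min(range(len(codebook)),
               key=lambda i: sum((c - v) ** 2 for c, v in zip(codebook[i], x)))


def residual_quantize(x: Vector, codebooks: Sequence[Codebook], num_active: int) -> List[int]:
    """Encode x with the first num_active layers; each layer quantizes the
    residual that the previous layers could not explain."""
    residual = list(x)
    codes: List[int] = []
    for codebook in codebooks[:num_active]:
        idx = nearest(codebook, residual)
        codes.append(idx)
        residual = [r - c for r, c in zip(residual, codebook[idx])]
    return codes


def reconstruct(codes: List[int], codebooks: Sequence[Codebook]) -> Vector:
    """Sum the selected code vectors of every active layer."""
    out = [0.0] * len(codebooks[0][0])
    for layer, idx in enumerate(codes):
        out = [o + c for o, c in zip(out, codebooks[layer][idx])]
    return out


def sample_num_active(num_quantizers: int, dropout_prob: float, rng: random.Random) -> int:
    """Training-time quantization dropout: with probability dropout_prob,
    keep only a random prefix of 1..num_quantizers layers."""
    if rng.random() < dropout_prob:
        return rng.randint(1, num_quantizers)
    return num_quantizers


# Three toy 1-D layers with progressively finer code vectors.
CODEBOOKS = [[[0.0], [4.0], [8.0]], [[-1.0], [0.0], [1.0]], [[-0.5], [0.0], [0.5]]]
codes = residual_quantize([5.4], CODEBOOKS, num_active=3)
print(codes, reconstruct(codes, CODEBOOKS))  # [1, 2, 2] [5.5]
```

Each extra layer refines the approximation (4.0, then 5.0, then 5.5 for the input 5.4), which is why dropping later layers during training still leaves a usable, coarser code.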

All models and intermediate results are saved in `./checkpoints/<dataset_name>/<name>`.

</details>

## :book: Evaluation
<details>

### Evaluate RVQ Reconstruction:
HumanML3D:
```
python eval_t2m_vq.py --gpu_id 0 --name rvq_nq6_dc512_nc512_noshare_qdp0.2 --dataset_name t2m --ext rvq_nq6
```
KIT-ML:
```
python eval_t2m_vq.py --gpu_id 0 --name rvq_nq6_dc512_nc512_noshare_qdp0.2_k --dataset_name kit --ext rvq_nq6
```

### Evaluate Text2motion Generation:
HumanML3D:
```
python eval_t2m_trans_res.py --res_name tres_nlayer8_ld384_ff1024_rvq6ns_cdp0.2_sw --dataset_name t2m --name t2m_nlayer8_nhead6_ld384_ff1024_cdp0.1_rvq6ns --gpu_id 1 --cond_scale 4 --time_steps 10 --ext evaluation
```
KIT-ML:
```
python eval_t2m_trans_res.py --res_name tres_nlayer8_ld384_ff1024_rvq6ns_cdp0.2_sw_k --dataset_name kit --name t2m_nlayer8_nhead6_ld384_ff1024_cdp0.1_rvq6ns_k --gpu_id 0 --cond_scale 2 --time_steps 10 --ext evaluation
```

* `--res_name`: model name of the `residual transformer`.
* `--name`: model name of the `masked transformer`.
* `--cond_scale`: scale of classifier-free guidance.
* `--time_steps`: number of iterations for inference.
* `--ext`: filename for saving evaluation results.
* `--which_epoch`: checkpoint name of the `masked transformer`.
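
`--cond_drop_prob` (training) and `--cond_scale` (inference) are the two halves of classifier-free guidance. The sketch below shows the standard formulation on plain lists of logits; it illustrates the technique under that assumption rather than reproducing the exact code in this repository, and both function names are hypothetical.

```python
import random
from typing import List, Optional


def maybe_drop_condition(text: str, cond_drop_prob: float,
                         rng: random.Random) -> Optional[str]:
    """Training: with probability cond_drop_prob, train this sample without
    its text (None stands for the empty condition)."""
    return None if rng.random() < cond_drop_prob else text


def guided_logits(cond: List[float], uncond: List[float], cond_scale: float) -> List[float]:
    """Inference: move the logits away from the unconditional prediction,
    uncond + cond_scale * (cond - uncond)."""
    return [u + cond_scale * (c - u) for c, u in zip(cond, uncond)]


print(guided_logits([2.0, 0.0], [1.0, 1.0], cond_scale=4))  # [5.0, -3.0]
```

With `cond_scale` equal to 1 the result is just the conditional logits; larger values (the commands above use 4 on HumanML3D and 2 on KIT-ML) weight the text condition more strongly.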

The final evaluation results will be saved in `./checkpoints/<dataset_name>/<name>/eval/<ext>.log`.

</details>

## Acknowledgements

We sincerely thank the authors of the following open-source works, on which our code is based:

[deep-motion-editing](https://github.com/DeepMotionEditing/deep-motion-editing), [Muse](https://github.com/lucidrains/muse-maskgit-pytorch), [vector-quantize-pytorch](https://github.com/lucidrains/vector-quantize-pytorch), [T2M-GPT](https://github.com/Mael-zys/T2M-GPT), [MDM](https://github.com/GuyTevet/motion-diffusion-model/tree/main) and [MLD](https://github.com/ChenFengYe/motion-latent-diffusion/tree/main)

## License
This code is distributed under an [MIT LICENSE](https://github.com/EricGuo5513/momask-codes/tree/main?tab=MIT-1-ov-file#readme).

Note that our code depends on other libraries, including SMPL, SMPL-X, and PyTorch3D, and uses datasets, each of which has its own license that must also be followed.

### Misc
Contact cguo2@ualberta.ca for further questions.

## Star History

[Star History Chart](https://star-history.com/#EricGuo5513/momask-codes&Date)