Update README.md

README.md

@@ -393,4 +393,68 @@ configs:

    path: SupViT/val-*
  - split: test
    path: SupViT/test-*
tags:
- probex
- model-j
- weight-space-learning
- model-zoo
- hyperparameters
- stable-diffusion
- vit
- resnet
size_categories:
- 10K<n<100K
---

| 409 |

+

# Model-J Dataset

|

| 410 |

+

|

| 411 |

+

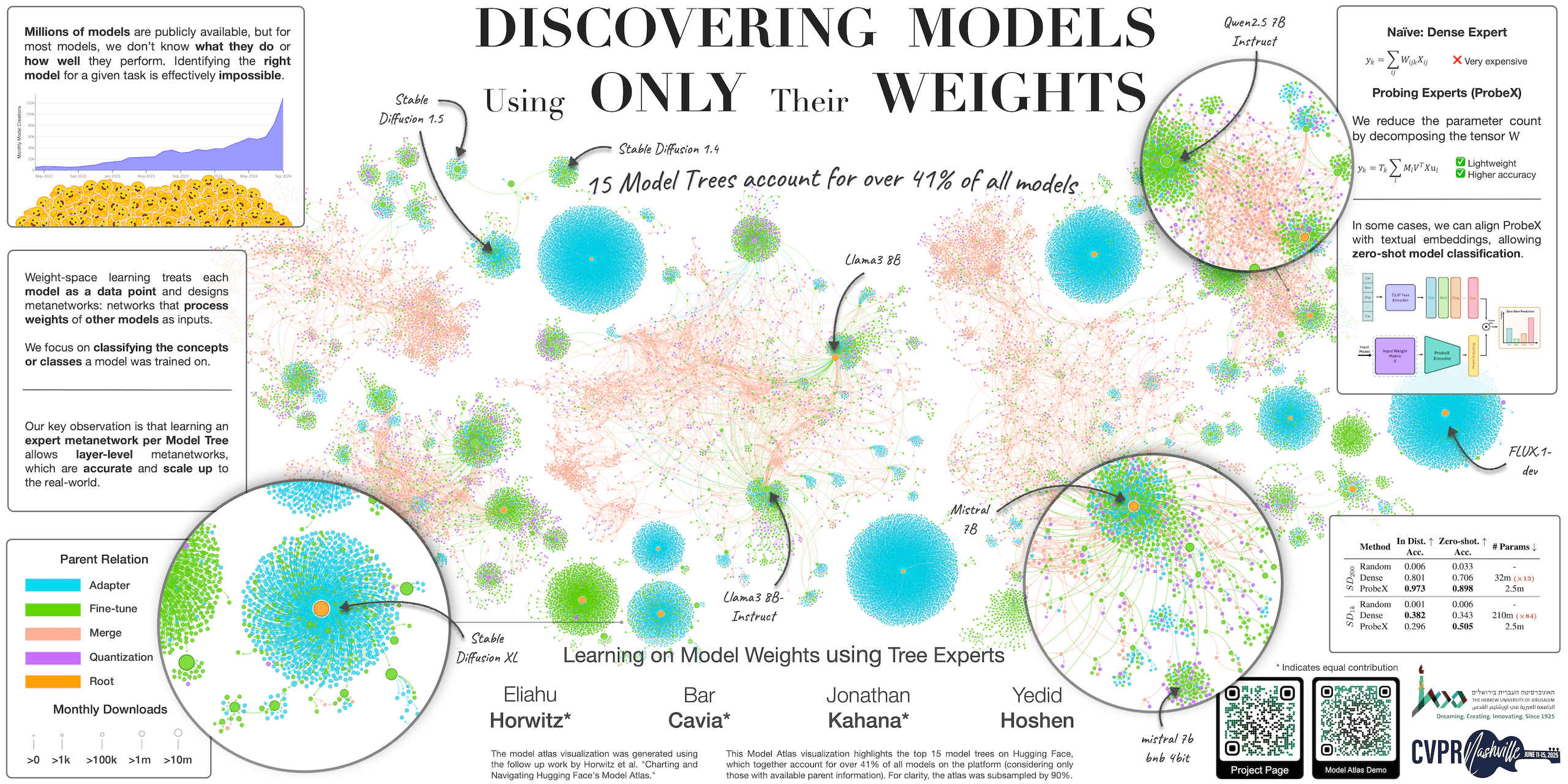

This dataset contains the hyperparameters, metadata, and Hugging Face links for all models in the **Model-J** dataset, introduced in:

|

| 412 |

+

|

| 413 |

+

**Learning on Model Weights using Tree Experts** (CVPR 2025) by Eliahu Horwitz*, Bar Cavia*, Jonathan Kahana*, Yedid Hoshen

|

| 414 |

+

|

| 415 |

+

<p align="center">

|

| 416 |

+

🌐 <a href="https://horwitz.ai/probex" target="_blank">Project</a> | 📃 <a href="https://arxiv.org/abs/2410.13569" target="_blank">Paper</a> | 💻 <a href="https://github.com/eliahuhorwitz/ProbeX" target="_blank">GitHub</a> | 🤗 <a href="https://huggingface.co/ProbeX" target="_blank">Models</a>

|

| 417 |

+

</p>

|

| 418 |

+

|

| 419 |

+

|

| 420 |

+

|

## Overview

Model-J is a large-scale dataset of trained neural networks designed for research on learning from model weights. It contains **14,004** models spanning 6 subsets, each with train/val/test splits. Every row in this dataset provides the full training hyperparameters, performance metrics, and a direct link to the corresponding model weights on Hugging Face.
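
As a quick sanity check on the figures above, the per-subset split sizes listed under Subsets can be summed to confirm the 14,004 total. This is only an illustrative sketch: the dictionary keys (including `val_holdout` and `test_holdout`) are labels chosen here for readability and are not necessarily the split names used in the dataset itself.

```python
# Split sizes copied from the Subsets tables of this README.
# NOTE: the key names are illustrative labels, not confirmed split names.
SPLIT_SIZES = {
    "DINO": {"train": 701, "val": 100, "test": 201},
    "MAE": {"train": 701, "val": 100, "test": 201},
    "SupViT": {"train": 698, "val": 99, "test": 201},
    "ResNet": {"train": 701, "val": 100, "test": 201},
    "SD_200": {"train": 3500, "val": 251, "test": 499,
               "val_holdout": 249, "test_holdout": 501},
    "SD_1k": {"train": 3500, "val": 251, "test": 499,
              "val_holdout": 249, "test_holdout": 501},
}


def subset_total(name: str) -> int:
    """Number of models in one subset, summed over all of its splits."""
    return sum(SPLIT_SIZES[name].values())


def dataset_total() -> int:
    """Number of models across every subset."""
    return sum(subset_total(name) for name in SPLIT_SIZES)


if __name__ == "__main__":
    for name in SPLIT_SIZES:
        print(f"{name}: {subset_total(name)}")
    print(f"all subsets: {dataset_total()}")
```

The four discriminative subsets contribute 4,004 models and the two generative subsets contribute 10,000, which matches the stated total.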

## Subsets

### Discriminative (one model per HF repo)

| Subset | Base Model | Train | Val | Test | Total |
|---|---|---|---|---|---|
| **DINO** | `facebook/dino-vitb16` | 701 | 100 | 201 | 1,002 |
| **MAE** | `facebook/vit-mae-base` | 701 | 100 | 201 | 1,002 |
| **SupViT** | `google/vit-base-patch16-224` | 698 | 99 | 201 | 998 |
| **ResNet** | `microsoft/resnet-18` | 701 | 100 | 201 | 1,002 |

Each discriminative model is a fully fine-tuned classifier hosted in its own Hugging Face repository. The `hf_model_id` and `hf_model_url` columns point directly to the model.
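
One way a row's `hf_model_id` might be used is sketched below. The helper names `model_url` and `load_classifier` are hypothetical, and the sketch assumes each repository can be loaded through `transformers`' `AutoModelForImageClassification`, which this README does not confirm. It also assumes `hf_model_url` is the usual `https://huggingface.co/<id>` address when it has to be derived.

```python
from typing import Any, Mapping

HF_BASE_URL = "https://huggingface.co"


def model_url(row: Mapping[str, Any]) -> str:
    """Return the row's `hf_model_url`, or derive one from `hf_model_id`.

    Deriving the URL from the id is an assumption about how the two
    columns relate; prefer the stored `hf_model_url` when it is present.
    """
    url = row.get("hf_model_url")
    if url:
        return url
    return f"{HF_BASE_URL}/{row['hf_model_id']}"


def load_classifier(row: Mapping[str, Any]):
    """Load the fine-tuned classifier referenced by a dataset row.

    Assumes the repo's config maps to an image-classification
    architecture supported by `transformers`.
    """
    # Imported lazily so `model_url` works without transformers installed.
    from transformers import AutoModelForImageClassification

    return AutoModelForImageClassification.from_pretrained(row["hf_model_id"])


if __name__ == "__main__":
    # "some-org/some-model" is a placeholder id, not a real Model-J repo.
    example_row = {"hf_model_id": "some-org/some-model"}
    print(model_url(example_row))
```

Calling `load_classifier` downloads weights from the Hub, so it needs network access and the `transformers` package.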

### Generative (bundled LoRA models in a single HF repo)

| Subset | Train | Val | Test | Val Holdout | Test Holdout | Total |
|---|---|---|---|---|---|---|
| **SD_200** | 3,500 | 251 | 499 | 249 | 501 | 5,000 |
| **SD_1k** | 3,500 | 251 | 499 | 249 | 501 | 5,000 |

Each generative model is a LoRA adapter. All models within a subset are bundled into a single Hugging Face repository ([SD_1k](https://huggingface.co/ProbeX/Model-J__SD_1k), [SD_200](https://huggingface.co/ProbeX/Model-J__SD_200)). The `hf_model_path` column provides the path to each model's weights within the repo. Each model's directory also contains its training images.
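
Because the LoRA adapters share one repository per subset, fetching a single model means downloading only the files under its `hf_model_path`. The sketch below shows one possible approach using `huggingface_hub.snapshot_download` with `allow_patterns`. The helper names are hypothetical, and since the README does not say whether `hf_model_path` names a file or a directory, the patterns cover both cases.

```python
import os
from typing import List, Optional

# Repository ids taken from the links in this README.
GENERATIVE_REPOS = {
    "SD_1k": "ProbeX/Model-J__SD_1k",
    "SD_200": "ProbeX/Model-J__SD_200",
}


def allow_patterns_for(hf_model_path: str) -> List[str]:
    """Patterns matching the path itself (if a file) or its contents (if a directory)."""
    path = hf_model_path.strip("/")
    return [path, f"{path}/*"]


def download_lora(subset: str, hf_model_path: str,
                  cache_dir: Optional[str] = None) -> str:
    """Download one model's files and return their local path.

    If `hf_model_path` is a directory, this also fetches the training
    images stored alongside the weights.
    """
    # Imported lazily so the helpers above work without huggingface_hub.
    from huggingface_hub import snapshot_download

    root = snapshot_download(
        repo_id=GENERATIVE_REPOS[subset],
        allow_patterns=allow_patterns_for(hf_model_path),
        cache_dir=cache_dir,
    )
    return os.path.join(root, hf_model_path.strip("/"))
```

Restricting the download with `allow_patterns` avoids pulling all 5,000 adapters of a subset when only one is needed.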

## Citation

If you find this dataset useful for your research, please cite the following:

```
@InProceedings{Horwitz_2025_CVPR,
    author    = {Horwitz, Eliahu and Cavia, Bar and Kahana, Jonathan and Hoshen, Yedid},
    title     = {Learning on Model Weights using Tree Experts},
    booktitle = {Proceedings of the Computer Vision and Pattern Recognition Conference (CVPR)},
    month     = {June},
    year      = {2025},
    pages     = {20468-20478}
}
```