Replace with clean markdown card

README.md

@@ -13,13 +13,12 @@ tags:

# SyncNet

Synchronization Network (SyncNet) from Li, Y et al (2017).

> **Architecture-only repository.**
> `braindecode.models.SyncNet` class. **No pretrained weights are
> distributed here**
> data
> separately.

## Quick start

@@ -38,159 +37,44 @@ model = SyncNet(

)
```

The signal-shape arguments above are

## Documentation

<https://braindecode.org/stable/generated/braindecode.models.SyncNet.html>
- Interactive browser with live instantiation:
<https://huggingface.co/spaces/braindecode/model-explorer>
- Source on GitHub: <https://github.com/braindecode/braindecode/blob/master/braindecode/models/syncnet.py#L14>

## Architecture description

The block below is the rendered class docstring (parameters,
references, architecture figure where available).

<div class='bd-doc'><main>

|

| 58 |

-

<p>Synchronization Network (SyncNet) from Li, Y et al (2017) [Li2017]_.</p>

|

| 59 |

-

<span style="display:inline-block;padding:2px 8px;border-radius:4px;background:#E69F00;color:white;font-size:11px;font-weight:600;margin-right:4px;">Interpretability</span>

|

| 60 |

-

|

| 61 |

-

|

| 62 |

-

|

| 63 |

-

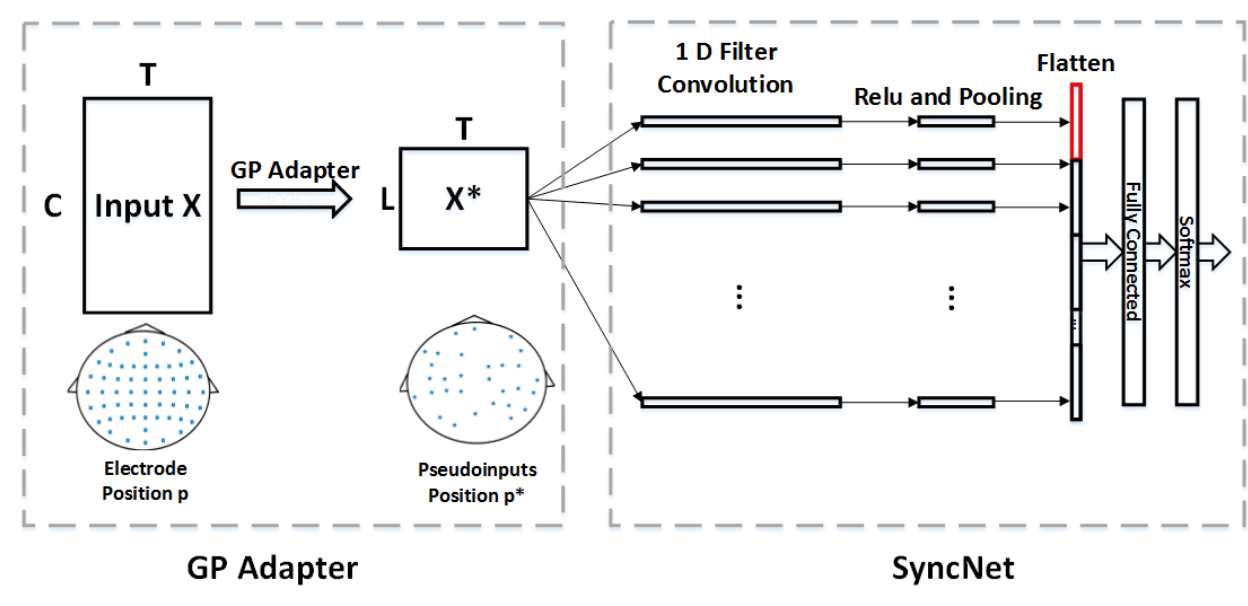

.. figure:: https://braindecode.org/dev/_static/model/SyncNet.png

|

| 64 |

-

:align: center

|

| 65 |

-

:alt: SyncNet Architecture

|

| 66 |

-

|

| 67 |

-

SyncNet uses parameterized 1-dimensional convolutional filters inspired by

|

| 68 |

-

the Morlet wavelet to extract features from EEG signals. The filters are

|

| 69 |

-

dynamically generated based on learnable parameters that control the

|

| 70 |

-

oscillation and decay characteristics.

|

| 71 |

-

|

| 72 |

-

The filter for channel ``c`` and filter ``k`` is defined as:

.. math::

    f_c^{(k)}(\tau) = \mathrm{amplitude}_c^{(k)} \cos(\omega^{(k)} \tau + \phi_c^{(k)}) \exp(-\beta^{(k)} \tau^2)

where:

- :math:`\mathrm{amplitude}_c^{(k)}` is the amplitude parameter (channel-specific).
- :math:`\omega^{(k)}` is the frequency parameter (shared across channels).
- :math:`\phi_c^{(k)}` is the phase shift (channel-specific).
- :math:`\beta^{(k)}` is the decay parameter (shared across channels).
- :math:`\tau` is the time index.
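
The following minimal pure-Python sketch makes the formula above concrete by evaluating one filter and a small filter bank. It assumes that tau takes integer offsets centred on zero across ``filter_width`` samples (for example -20 to 19 when ``filter_width=40``). The docstring only calls tau "the time index", so braindecode's actual sampling grid may differ. ``syncnet_filter`` and ``syncnet_filter_bank`` are hypothetical helpers and are not part of the braindecode API.

```python
import math


def syncnet_filter(amplitude, omega, phase, beta, filter_width):
    """Hypothetical helper: f(tau) = amplitude * cos(omega*tau + phase) * exp(-beta*tau**2).

    Assumption: tau takes integer offsets centred on zero, e.g. -20..19 for
    filter_width=40. braindecode's own time grid may differ.
    """
    half = filter_width // 2
    taus = range(-half, filter_width - half)
    return [
        amplitude * math.cos(omega * tau + phase) * math.exp(-beta * tau * tau)
        for tau in taus
    ]


def syncnet_filter_bank(amplitudes, omegas, phases, betas, filter_width):
    """Hypothetical helper: build filters[k][c] for every filter k and channel c.

    amplitudes[k][c] and phases[k][c] are channel-specific, while omegas[k]
    and betas[k] are shared across channels, mirroring the formula's indices.
    """
    bank = []
    for k, (omega, beta) in enumerate(zip(omegas, betas)):
        bank.append([
            syncnet_filter(amp, omega, phi, beta, filter_width)
            for amp, phi in zip(amplitudes[k], phases[k])
        ])
    return bank


# Example: one filter applied to two channels, 5 taps per kernel.
bank = syncnet_filter_bank(
    amplitudes=[[1.0, 2.0]], omegas=[0.5], phases=[[0.0, 0.0]],
    betas=[0.1], filter_width=5,
)
print(len(bank), len(bank[0]), len(bank[0][0]))  # 1 2 5
```

Because omega and beta are shared across channels and amplitude and phase are per channel, every channel of filter k oscillates at the same frequency under the same envelope. The channels differ only in gain and phase offset.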

Parameters
----------
num_filters : int, optional
    Number of filters in the convolutional layer. Default is 1.
filter_width : int, optional
    Width of the convolutional filters. Default is 40.
pool_size : int, optional
    Size of the pooling window. Default is 40.
activation : nn.Module, optional
    Activation function to apply after pooling. Default is ``nn.ReLU``.
ampli_init_values : tuple of float, optional
    Initialization range for the amplitude parameters, drawn from a
    uniform distribution. Default is (-0.05, 0.05).
omega_init_values : tuple of float, optional
    Initialization range for the omega (frequency) parameters, drawn from
    a uniform distribution. Default is (0, 1).
beta_init_values : tuple of float, optional
    Initialization range for the beta (decay) parameters, drawn from a
    uniform distribution. Default is (0, 0.05).
phase_init_values : tuple of float, optional
    Mean and standard deviation of the normal distribution used to
    initialize the phase parameters. Default is (0, 0.05).
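
Taken together, these parameters suggest the following order of operations: convolve the input with the generated filters, pool over ``pool_size`` samples, then apply the activation (``nn.ReLU`` by default). The sketch below illustrates that order in pure Python. It rests on two assumptions that the docstring does not state. First, each filter is applied as an unpadded ("valid") cross-correlation summed over channels. Second, pooling is non-overlapping max pooling. ``syncnet_features_sketch`` is a hypothetical name and is not braindecode's forward pass.

```python
def syncnet_features_sketch(x, filters, pool_size):
    """Hypothetical sketch: convolve, pool and rectify a multi-channel signal.

    x[c][t]: signal for channel c at time t.
    filters[k][c]: kernel of filter k for channel c (all kernels the same width).
    Returns one pooled, ReLU-activated feature map per filter.
    """
    n_chans, n_times = len(x), len(x[0])
    features = []
    for kernels in filters:
        width = len(kernels[0])
        # 'Valid' cross-correlation summed over channels (assumption: no padding).
        conv = [
            sum(kernels[c][i] * x[c][t + i]
                for c in range(n_chans) for i in range(width))
            for t in range(n_times - width + 1)
        ]
        # Non-overlapping max pooling (assumption: max pooling, stride == pool_size);
        # a trailing partial window is dropped.
        pooled = [
            max(conv[j:j + pool_size])
            for j in range(0, len(conv) - pool_size + 1, pool_size)
        ]
        # ReLU, the documented default activation.
        features.append([max(0.0, v) for v in pooled])
    return features


signal = [[1.0, 2.0, 3.0, 4.0, 5.0, 6.0]]  # one channel, six samples
print(syncnet_features_sketch(signal, [[[1.0, 0.0]]], pool_size=2))  # [[2.0, 4.0]]
```

Under these assumptions, the defaults ``filter_width=40`` and ``pool_size=40`` turn a 1000-sample input into 961 convolution outputs and 24 pooled values per filter.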


Notes
-----
This implementation is not guaranteed to be correct: it has not been checked
by the original authors. The modifications are based on code derived from
[CodeICASSP2025]_.

References
----------
.. [Li2017] Li, Y., Dzirasa, K., Carin, L., & Carlson, D. E. (2017).
   Targeting EEG/LFP synchrony with neural nets. Advances in neural
   information processing systems, 30.
.. [CodeICASSP2025] Code from Baselines for EEG-Music Emotion Recognition
   Grand Challenge at ICASSP 2025.
   https://github.com/SalvoCalcagno/eeg-music-challenge-icassp-2025-baselines

.. rubric:: Hugging Face Hub integration

When the optional ``huggingface_hub`` package is installed, all models
automatically gain the ability to be pushed to and loaded from the
Hugging Face Hub. Install with::

    pip install braindecode[hub]

**Pushing a model to the Hub:**

.. code::

    from braindecode.models import SyncNet

    # Train your model
    model = SyncNet(n_chans=22, n_outputs=4, n_times=1000)
    # ... training code ...

    # Push to the Hub
    model.push_to_hub(
        repo_id="username/my-syncnet-model",
        commit_message="Initial model upload",
    )

**Loading a model from the Hub:**

.. code::

    from braindecode.models import SyncNet

    # Load pretrained model
    model = SyncNet.from_pretrained("username/my-syncnet-model")

    # Load with a different number of outputs (head is rebuilt automatically)
    model = SyncNet.from_pretrained("username/my-syncnet-model", n_outputs=2)

**Extracting features and replacing the head:**

.. code::

    import torch

    # Dummy batch shaped (batch, n_chans, n_times), matching the model above
    x = torch.randn(1, 22, 1000)

    # Extract encoder features (consistent dict across all models)
    out = model(x, return_features=True)
    features = out["features"]

    # Replace the classification head
    model.reset_head(n_outputs=10)

.. code::

    import json

    config = model.get_config()  # all __init__ params
    with open("config.json", "w") as f:
        json.dump(config, f)

saved to the Hub and restored when loading.

See :ref:`load-pretrained-models` for a complete tutorial.</main>


</div>


## Citation

*References* section above) and braindecode:

```bibtex


@article{aristimunha2025braindecode,


# SyncNet

Synchronization Network (SyncNet) from Li, Y et al (2017) [Li2017].

> **Architecture-only repository.** Documents the
> `braindecode.models.SyncNet` class. **No pretrained weights are
> distributed here.** Instantiate the model and train it on your own
> data.

## Quick start


)


```

The signal-shape arguments above are illustrative defaults; adjust them to
match your recording.

## Documentation

- Full API reference: <https://braindecode.org/stable/generated/braindecode.models.SyncNet.html>
- Interactive browser (live instantiation, parameter counts):
  <https://huggingface.co/spaces/braindecode/model-explorer>
- Source on GitHub: <https://github.com/braindecode/braindecode/blob/master/braindecode/models/syncnet.py#L14>


## Architecture


## Parameters

| Parameter | Type | Description |
|---|---|---|
| `num_filters` | int, optional | Number of filters in the convolutional layer. Default is 1. |
| `filter_width` | int, optional | Width of the convolutional filters. Default is 40. |
| `pool_size` | int, optional | Size of the pooling window. Default is 40. |
| `activation` | nn.Module, optional | Activation function to apply after pooling. Default is `nn.ReLU`. |
| `ampli_init_values` | tuple of float, optional | Initialization range for the amplitude parameters, drawn from a uniform distribution. Default is (-0.05, 0.05). |
| `omega_init_values` | tuple of float, optional | Initialization range for the omega (frequency) parameters, drawn from a uniform distribution. Default is (0, 1). |
| `beta_init_values` | tuple of float, optional | Initialization range for the beta (decay) parameters, drawn from a uniform distribution. Default is (0, 0.05). |
| `phase_init_values` | tuple of float, optional | Mean and standard deviation of the normal distribution used to initialize the phase parameters. Default is (0, 0.05). |
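
The four `*_init_values` defaults describe how the learnable filter parameters are drawn before training. The sketch below is a minimal pure-Python version of that initialization. It assumes, as in SyncNet's filter definition, that amplitude and phase are channel-specific while omega (frequency) and beta (decay) are shared across channels. The first two therefore get one value per (filter, channel) pair, and the last two get one value per filter. `init_syncnet_params` is a hypothetical helper, not braindecode's API.

```python
import random


def init_syncnet_params(n_chans, num_filters=1,
                        ampli_init_values=(-0.05, 0.05),
                        omega_init_values=(0.0, 1.0),
                        beta_init_values=(0.0, 0.05),
                        phase_init_values=(0.0, 0.05),
                        seed=None):
    """Hypothetical helper: draw initial SyncNet-style filter parameters."""
    rng = random.Random(seed)
    lo_a, hi_a = ampli_init_values
    mean_p, std_p = phase_init_values
    return {
        # Channel-specific: one value per (filter, channel), uniform range.
        "amplitude": [[rng.uniform(lo_a, hi_a) for _ in range(n_chans)]
                      for _ in range(num_filters)],
        # Channel-specific: normal distribution given as (mean, std).
        "phase": [[rng.gauss(mean_p, std_p) for _ in range(n_chans)]
                  for _ in range(num_filters)],
        # Shared across channels: one value per filter, uniform ranges.
        "omega": [rng.uniform(*omega_init_values) for _ in range(num_filters)],
        "beta": [rng.uniform(*beta_init_values) for _ in range(num_filters)],
    }


params = init_syncnet_params(n_chans=22, num_filters=3, seed=0)
print(len(params["amplitude"]), len(params["amplitude"][0]), len(params["omega"]))  # 3 22 3
```

Passing a fixed `seed` makes the draw reproducible. In a PyTorch module, the same distributions would typically be applied in place with `torch.nn.init.uniform_` and `torch.nn.init.normal_`.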

## References

1. Li, Y., Dzirasa, K., Carin, L., & Carlson, D. E. (2017). Targeting EEG/LFP synchrony with neural nets. Advances in neural information processing systems, 30.
2. Code from Baselines for EEG-Music Emotion Recognition Grand Challenge at ICASSP 2025. https://github.com/SalvoCalcagno/eeg-music-challenge-icassp-2025-baselines

## Citation

Cite the original architecture paper (see *References* above) and braindecode:

```bibtex


@article{aristimunha2025braindecode,
